
Last Updated: April 22, 2026 at 12:30

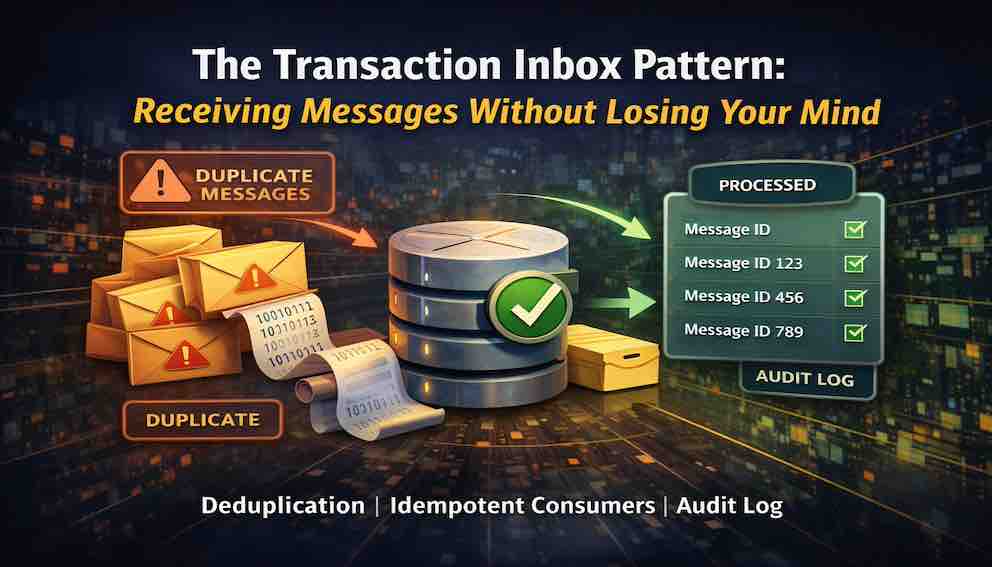

Transaction Inbox Pattern: Handle Duplicate Messages Without Data Corruption

A practical guide to idempotent message processing, retry safety, and achieving exactly-once behaviour in at-least-once delivery systems

The Transaction Inbox pattern prevents duplicate message processing by storing a processing record in the same database transaction as your business logic. This solves the critical gap where a service crash between updating data and recording the update causes duplicate execution—a problem idempotency alone cannot fix. Learn how a simple inbox table delivers reliability for message-driven systems.

Introduction

Modern applications rarely run as a single process talking directly to a single database. Instead, they communicate through message brokers—systems like Kafka, RabbitMQ, or SQS that decouple services by passing events and commands asynchronously. One service publishes a message; another consumes it and acts on it.

This model brings resilience and scalability, but it introduces a problem that is easy to overlook until it causes real damage: what happens when your service crashes halfway through processing a message?

The broker does not know whether your service finished its work before it crashed. It only knows the message was never acknowledged. So it does what it is designed to do—it delivers the message again. This is called at-least-once delivery, and it is the default guarantee of most message brokers. Your service will receive the same message twice. Not maybe. Not sometimes. It will happen.

If your message handler is not designed to deal with this, you will eventually process the same command twice. Depending on what that command does—reduce inventory, charge a card, send a notification—the consequences range from confusing to catastrophic.

The Transaction Inbox pattern is the standard solution to this problem. The core idea is to record every message your service processes in a dedicated database table, and to do so in the same database transaction as your business logic. Because both writes are atomic—either both succeed or neither does—there is no longer a window where your service can do the work but fail to record it. Duplicates are caught before any business logic runs.

One framing worth carrying with you: the inbox is not just a deduplication table. It is a complete, durable record of every external command your service has ever executed—every retry, every failure, every outcome, all in one place.

What Problem Does It Solve?

The Core Failure Scenario

Most message brokers operate on at-least-once delivery. If your service crashes before acknowledging a message, the broker will redeliver it.

Consider an inventory service that reduces stock when an order is placed. You decide to make it idempotent—each message carries a unique ID, and before processing you check whether that ID has already been handled. Sounds safe.

Here is the failure:

A message arrives. No prior record exists.

You reduce stock from 100 to 95.

Before you record that the message was processed, the service crashes.

The broker retries. The same message arrives again.

There is still no record. Stock reduces again, from 95 to 90.

The same command has been executed twice.

The issue is not logic—it is timing. There is a gap between doing the work and recording that you did the work.
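The gap can be reproduced in a few lines. The sketch below is illustrative only: it uses an in-memory SQLite database, and the table names (`stock`, `processed`), the `naive_handle` function, and the `SimulatedCrash` exception are all hypothetical names chosen for this example. Each statement commits on its own, which is what leaves the window open.

```python
import sqlite3


class SimulatedCrash(Exception):
    """Stands in for the process dying at a chosen point (hypothetical helper)."""


def make_db() -> sqlite3.Connection:
    # isolation_level=None puts the connection in autocommit mode:
    # every statement is committed on its own, as in the naive handler.
    conn = sqlite3.connect(":memory:", isolation_level=None)
    conn.execute("CREATE TABLE stock (sku TEXT PRIMARY KEY, qty INTEGER NOT NULL)")
    conn.execute("CREATE TABLE processed (message_id TEXT PRIMARY KEY)")
    conn.execute("INSERT INTO stock VALUES ('widget', 100)")
    return conn


def naive_handle(conn, message_id, qty, crash_before_record=False):
    # Step 1: the idempotency check runs before the work.
    seen = conn.execute(
        "SELECT 1 FROM processed WHERE message_id = ?", (message_id,)
    ).fetchone()
    if seen:
        return
    # Step 2: the business update is committed immediately.
    conn.execute("UPDATE stock SET qty = qty - ? WHERE sku = 'widget'", (qty,))
    # The gap: a crash here leaves the work done but unrecorded.
    if crash_before_record:
        raise SimulatedCrash()
    # Step 3: the processing record is written separately.
    conn.execute("INSERT INTO processed VALUES (?)", (message_id,))


def run_scenario() -> int:
    conn = make_db()
    try:
        naive_handle(conn, "msg-1", 5, crash_before_record=True)
    except SimulatedCrash:
        pass  # the broker sees no acknowledgement and redelivers
    naive_handle(conn, "msg-1", 5)  # redelivery of the same message
    return conn.execute("SELECT qty FROM stock WHERE sku = 'widget'").fetchone()[0]
```

Calling `run_scenario()` leaves the stock at 90 rather than 95, matching the sequence described above.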

This is not just a correctness bug. It is a reliability failure. The system cannot guarantee that one message produces one outcome. Under failure—crashes, retries, network interruptions—it produces inconsistent state.

In other words, the system is not fault tolerant. It behaves correctly only when nothing goes wrong.

Why Idempotency Alone Is Not Enough

An idempotency check runs before the work. The crash happens after the work, but before the system records that the work was done.

Consider a payment service. A message arrives: “charge customer £50.” The service checks whether this message ID has already been processed. It has not. So it charges the card.

Before it can record that the message was processed, the service crashes.

The broker retries. The same message arrives again. The idempotency check runs. It looks for a record and finds nothing. From its perspective, this is the first time it has seen the message.

So it charges the card again.

The same command executes twice.

The problem is not the correctness of the check. It is where the check sits relative to the failure. There is a gap between doing the work and recording that you did it, and that gap is exactly where crashes occur.

Changing retry timing does not help. Whether the retry comes in one second or one hour, the record was never written.

The only way to remove the gap is to make the business update and the processing record part of the same transaction—committed together as a single unit. That is what the inbox pattern provides.

The Table Structure

Start simple.

At minimum, the inbox table needs three things: a message_id as the primary key, a status field, and timestamps for when the record was created and last updated.

That is enough to deduplicate messages and track whether processing succeeded or failed.

From there, you extend the table as operational needs become clearer. Each additional field exists to answer a specific question about the message.

A retry_count tells you how many times the system has attempted to process the message. When this number grows, you know something is consistently failing.

An error_message records why the last attempt failed. Without it, every failure requires digging through logs.

A processed_at timestamp captures when the message was successfully handled. This lets you distinguish between “recently updated” and “actually completed.”

If your service consumes multiple queues or topics, a queue_source field identifies where the message came from. In that case, the primary key should be a combination of queue_source and message_id, since the same ID may appear in different streams.

An optional payload field stores the raw message. This is useful for replaying events or debugging edge cases, but many systems add it only when the need becomes clear.

The pattern is simple: start with the minimum, and add fields only when they answer a question you cannot otherwise answer.
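As one possible shape for the extended table, the sketch below creates it in SQLite from Python. The column types, the `CHECK` constraint on `status`, and the `create_inbox` helper are assumptions made for illustration; a production schema would use your own database's types, such as native timestamps.

```python
import sqlite3

# Hypothetical schema: the field names follow the text above,
# the types and defaults are illustrative assumptions.
INBOX_DDL = """
CREATE TABLE IF NOT EXISTS inbox (
    queue_source  TEXT    NOT NULL,
    message_id    TEXT    NOT NULL,
    status        TEXT    NOT NULL
                  CHECK (status IN ('processing', 'completed', 'failed')),
    retry_count   INTEGER NOT NULL DEFAULT 0,
    error_message TEXT,
    payload       TEXT,
    created_at    TEXT    NOT NULL DEFAULT CURRENT_TIMESTAMP,
    updated_at    TEXT    NOT NULL DEFAULT CURRENT_TIMESTAMP,
    processed_at  TEXT,
    -- Composite key: the same message_id may appear in different streams.
    PRIMARY KEY (queue_source, message_id)
)
"""


def create_inbox(conn: sqlite3.Connection) -> None:
    """Create the inbox table if it does not exist yet."""
    conn.execute(INBOX_DDL)
```

If the service consumes a single queue, `queue_source` can be dropped and `message_id` alone becomes the primary key.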

Implementation

The Transaction Model

The core of the pattern is a single transaction containing both writes. You insert the inbox record, run your business logic, then update the status, all before committing. If the system crashes before the commit, the database rolls back the inbox record and the business changes together, so nothing is saved. On retry, the message starts from a clean state and is processed once, correctly.

Insert Status First, Update After

A common mistake is inserting the inbox record only after business logic succeeds. This recreates the original problem: a crash after the business logic but before the insert leaves no record.

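The correct approach is to insert a row with status = 'processing' first, then run your business logic. On success, update the status to 'completed'. On failure, roll back the business changes, then record the status as 'failed', store the error, and increment the retry count in a separate transaction, because the rollback would otherwise discard the inbox row along with the business writes. This guarantees that no business logic executes without a corresponding inbox record.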

Handling Retries Based on Status

When a duplicate message arrives, the existing status tells you exactly what to do. If the status is completed, the work is already done—acknowledge the message and discard it. If the status is processing, another worker may have it or a previous worker crashed mid-flight, so wait and retry later. If the status is failed, retry now and increment the retry count.

The system becomes predictable and self-describing.
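The status rules above reduce to a small decision function. The following is a sketch only; the `Action` enum and the `decide` function are hypothetical names, and a `None` status is assumed to mean that no inbox row exists yet.

```python
from enum import Enum
from typing import Optional


class Action(Enum):
    PROCESS = "process"                  # first time this message is seen
    ACK_AND_DISCARD = "ack_and_discard"  # work already done
    WAIT_AND_RETRY = "wait_and_retry"    # in flight elsewhere, or stuck
    RETRY_NOW = "retry_now"              # previous attempt failed


def decide(status: Optional[str]) -> Action:
    """Map the existing inbox status (None if no row) to the next action."""
    if status is None:
        return Action.PROCESS
    if status == "completed":
        return Action.ACK_AND_DISCARD
    if status == "processing":
        return Action.WAIT_AND_RETRY
    if status == "failed":
        return Action.RETRY_NOW
    raise ValueError(f"unknown inbox status: {status!r}")
```

Keeping the mapping in one place means every consumer reacts to a duplicate in the same way.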

Handling Concurrent Duplicates

Two workers may receive the same message simultaneously. Both check the inbox, both see nothing, and both attempt to insert. Only one succeeds. The other hits the unique constraint violation on message_id.

That violation is not an error to suppress—it is the mechanism. The database ensures only one worker proceeds. No distributed locks required, no application-level coordination. The constraint violation should be caught explicitly and handled as an "already processing" case, not logged as an unexpected error.

The unique constraint is not optional. Do not rely on application-level checks alone. Database-level enforcement is your last line of defence.
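The sketch below shows the constraint doing the coordination. It uses two SQLite connections to the same file to stand in for two workers, and it assumes the claim is committed in its own short transaction so the second worker can see it. `try_claim` and `open_worker_connection` are hypothetical names. In SQLite the violation surfaces as `sqlite3.IntegrityError`; other databases raise their own equivalent.

```python
import os
import sqlite3
import tempfile


def open_worker_connection(path: str) -> sqlite3.Connection:
    """Each worker gets its own connection to the shared database file."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS inbox "
        "(message_id TEXT PRIMARY KEY, status TEXT NOT NULL)"
    )
    conn.commit()
    return conn


def try_claim(conn: sqlite3.Connection, message_id: str) -> bool:
    """Return True if this worker won the right to process the message."""
    try:
        with conn:
            conn.execute(
                "INSERT INTO inbox (message_id, status) VALUES (?, 'processing')",
                (message_id,),
            )
        return True
    except sqlite3.IntegrityError:
        # Expected outcome for the losing worker: treat it as
        # "already processing", not as an unexpected error.
        return False
```

Usage, with a temporary file standing in for the shared database:

```python
path = os.path.join(tempfile.mkdtemp(), "inbox.db")
worker_a = open_worker_connection(path)
worker_b = open_worker_connection(path)
first = try_claim(worker_a, "msg-1")   # worker A wins the claim
second = try_claim(worker_b, "msg-1")  # worker B hits the unique constraint
```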

Retry Strategy and Backoff

When re-processing a failed message, do not retry immediately and unconditionally. Apply exponential backoff, where the delay roughly doubles with each retry up to a configured ceiling. Once the retry count exceeds your threshold, stop retrying and move the record to a dead-letter state or trigger an alert. Unbounded retries on a poisoned message will stall your system.
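As a rough sketch, the delay and the cut-off can be computed as below. The base delay, the ceiling, the threshold of five retries, and the function names are illustrative assumptions, not recommended values.

```python
from typing import Optional, Tuple


def backoff_delay(retry_count: int,
                  base_seconds: float = 1.0,
                  cap_seconds: float = 300.0) -> float:
    """Delay doubles with each retry, up to a configured ceiling."""
    return min(cap_seconds, base_seconds * (2 ** retry_count))


def next_step(retry_count: int, max_retries: int = 5) -> Tuple[str, Optional[float]]:
    """Decide whether to retry (and after how long) or dead-letter."""
    if retry_count > max_retries:
        return ("dead_letter", None)
    return ("retry", backoff_delay(retry_count))
```

With these defaults the delays run 1, 2, 4, 8, 16 and 32 seconds for retry counts 0 to 5, after which the message is routed to the dead-letter state. Many systems also add random jitter to the delay so that failed messages do not all retry at the same moment.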

Background Recovery for Stuck Messages

If a worker crashes after committing a processing record but before updating it to completed or failed, that message will never complete on its own. Every redelivery will see processing and back off indefinitely.

You need a background job that runs every few minutes, finds processing records older than your maximum expected processing time, marks them as failed with an appropriate error message, and increments their retry count. Without this monitor, stuck messages stall silently. It is a required part of the pattern, not an optional enhancement.
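The recovery job itself can be a single UPDATE statement run on a schedule. The sketch below assumes SQLite, a simplified inbox table, and that staleness is measured against `updated_at`; the `recover_stuck` function name and the error text are hypothetical.

```python
import sqlite3


def create_inbox(conn: sqlite3.Connection) -> None:
    conn.execute(
        """
        CREATE TABLE inbox (
            message_id    TEXT PRIMARY KEY,
            status        TEXT NOT NULL,
            retry_count   INTEGER NOT NULL DEFAULT 0,
            error_message TEXT,
            updated_at    TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
        )
        """
    )


def recover_stuck(conn: sqlite3.Connection, max_age_seconds: int) -> int:
    """Mark stale 'processing' rows as failed; return how many were reset."""
    with conn:
        cur = conn.execute(
            """
            UPDATE inbox
               SET status = 'failed',
                   retry_count = retry_count + 1,
                   error_message = 'recovered: stuck in processing',
                   updated_at = CURRENT_TIMESTAMP
             WHERE status = 'processing'
               AND updated_at < datetime('now', ?)
            """,
            (f"-{int(max_age_seconds)} seconds",),
        )
    return cur.rowcount
```

A scheduler would call `recover_stuck(conn, max_age_seconds)` every few minutes, with the age set comfortably above the longest processing time you expect.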

Inbox vs. Idempotency: What Is the Difference?

These two ideas are closely related, but they solve different problems.

The inbox pattern answers one question: has this message already been processed?

It prevents the same message from being executed twice across retries. Its mechanism is the database record in the inbox table, checked before running business logic.

Idempotency answers a different question: if this operation is repeated, will it change the result?

It protects within the business logic itself—ensuring that repeating part of an operation does not cause unintended side effects.

A simple example makes the distinction clearer. Imagine a payment message: “charge customer £50.” The inbox ensures that this message is processed only once. If a duplicate message arrives later, the system sees that it has already been handled and does nothing. Idempotency protects what happens during processing. If the service crashes after calling an external payment provider but before completing its own database update, a retry may run the same operation again. Idempotency ensures that the external provider does not charge the customer twice.

In practice, the same message identifier used to track the inbox entry can also be passed into the business logic and used as the idempotency key. This avoids creating two separate identifiers for the same message, while still keeping the responsibilities conceptually separate.

The inbox protects execution at the message level. Idempotency protects correctness within the execution itself.
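To illustrate reusing the message identifier as the idempotency key, the sketch below uses a fake in-memory provider. `FakePaymentProvider` and `handle_payment_message` are hypothetical and do not represent any real payment API; real providers expose their own mechanism for passing an idempotency key.

```python
class FakePaymentProvider:
    """Hypothetical stand-in for an external provider that honours idempotency keys."""

    def __init__(self) -> None:
        self._charges_by_key = {}
        self.total_charged_pence = 0

    def charge(self, idempotency_key: str, amount_pence: int) -> str:
        # A repeated key returns the original result without charging again.
        if idempotency_key in self._charges_by_key:
            return self._charges_by_key[idempotency_key]
        charge_id = f"ch_{len(self._charges_by_key) + 1}"
        self._charges_by_key[idempotency_key] = charge_id
        self.total_charged_pence += amount_pence
        return charge_id


def handle_payment_message(provider: FakePaymentProvider,
                           message_id: str,
                           amount_pence: int) -> str:
    # The inbox message_id doubles as the idempotency key.
    return provider.charge(idempotency_key=message_id, amount_pence=amount_pence)
```

If the handler runs twice for the same `message_id`, for example after a crash between the provider call and the local commit, the provider sees the same key and the customer is charged once.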

The Outbox Pattern: A Related Companion

The Transaction Inbox pattern handles incoming messages. The Outbox pattern solves the symmetric problem for outgoing messages.

When your business logic needs to publish an event after updating state, you face the same atomicity problem in reverse: the database update and the message publish cannot both be done atomically in a single commit. If you update the database and then publish, a crash between the two leaves the event unpublished. If you publish first and then update, a crash leaves the event published but the state unchanged.

The solution is to write the outgoing message to an outbox table in the same transaction as your business update. A separate relay process then reads from the outbox and publishes to the broker, marking records as sent.

Inbox and Outbox together give you reliable communication in both directions: delivery remains at-least-once, but each message takes effect exactly once.

The Inbox as Source of Truth

Most engineers think of business tables—orders, payments, inventory—as the source of truth. Consider flipping that model.

The inbox is the source of truth for what commands arrived. The business tables are the result of processing those commands. If your business data can be derived from the inbox by replaying it, the inbox is the foundation.

When something goes wrong, start with the inbox. What arrived? In what order? Which messages succeeded? Which failed, and why? How many retries did each take? The answers are all there, timestamped and durable.
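Those questions translate directly into queries. As an illustrative sketch, assuming SQLite and the column names used earlier in this article, a failure report could be pulled like this; `failure_report` and the simplified `create_inbox` helper are hypothetical.

```python
import sqlite3


def create_inbox(conn: sqlite3.Connection) -> None:
    conn.execute(
        """
        CREATE TABLE inbox (
            message_id    TEXT PRIMARY KEY,
            status        TEXT NOT NULL,
            retry_count   INTEGER NOT NULL DEFAULT 0,
            error_message TEXT,
            created_at    TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
        )
        """
    )


def failure_report(conn: sqlite3.Connection, min_retries: int = 1):
    """List failed messages, most-retried first, with their last error."""
    return conn.execute(
        """
        SELECT message_id, retry_count, error_message
          FROM inbox
         WHERE status = 'failed' AND retry_count >= ?
         ORDER BY retry_count DESC, created_at ASC
        """,
        (min_retries,),
    ).fetchall()
```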

This perspective also makes event replay practical. If you need to rebuild a projection or recover from data corruption, you can replay inbox records rather than guessing at state.

When Not to Use This Pattern

If duplicates are genuinely harmless—processing the same analytics event twice has no consequence—the added complexity is not justified. If your datastore does not support ACID transactions, this pattern does not apply without significant workarounds; many NoSQL stores fall into this category. At extreme and sustained throughput where the write amplification is a measurable bottleneck, simpler deduplication strategies may be an acceptable trade-off.

But if a duplicate means charging a customer twice, reducing inventory incorrectly, or sending the same notification twice, you want this pattern.

Testing the Pattern

Test against a real database. Mocks will not expose the concurrency and transaction behaviour you need to verify.

Test 1 — Crash after business logic, before status update. Simulate a crash after the business update but before setting the status to completed. Send the message again. Verify that business data was not updated twice and that the message is processed exactly once.

Test 2 — Concurrent duplicate messages. Send the same message from two threads simultaneously. Verify that business logic runs exactly once, that one thread receives a unique constraint violation, and that it is handled gracefully with no double execution.

Test 3 — Business logic failure. Make your business logic throw an exception. Verify that the inbox status becomes failed, that the error is stored, and that the retry count increments on the next attempt.

Test 4 — Stuck message recovery. Manually insert a record with a processing status and a creation timestamp older than your recovery threshold. Run the background monitor. Verify that it resets the record to failed and that the message is subsequently retried and completed successfully.

Test 5 — Retry cap and dead-lettering. Set the retry count above your configured maximum. Verify that the system stops retrying and routes the message to dead-letter or raises an alert, rather than retrying indefinitely.

Summary

The Transaction Inbox pattern solves a precise failure: executing the same command twice after a crash.

By recording intent and result together in a single atomic transaction, you eliminate that failure entirely. The status field handles retries and distinguishes completed, in-flight, and failed messages. The unique constraint handles concurrent duplicate delivery without locks. The background monitor recovers from workers that crashed mid-processing. The retry cap prevents poison messages from stalling the system indefinitely.

About N Sharma

Lead Architect at StackAndSystem

N Sharma is a technologist with over 28 years of experience in software engineering, system architecture, and technology consulting. He holds a Bachelor’s degree in Engineering, a DBF, and an MBA. His work focuses on research-driven technology education: explaining software architecture, system design, and development practices through structured tutorials designed to help engineers build reliable, scalable systems.

Disclaimer

This article is for educational purposes only. Assistance from AI-powered generative tools was taken to format and improve language flow. While we strive for accuracy, this content may contain errors or omissions and should be independently verified.