
Last Updated: April 22, 2026 at 18:30

Circuit Breaker Pattern in Microservices: How to Prevent Cascading Failures and System Outages

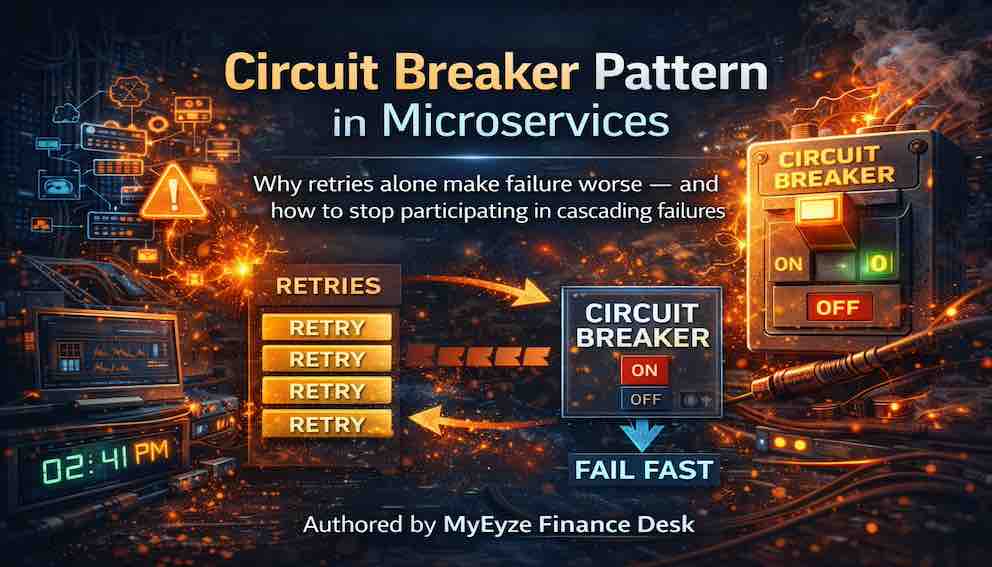

Why retries alone make failure worse — and how to stop participating in cascading failures

In a monolith, failures are immediate and contained. A function call fails, the stack unwinds, an error is returned, and the rest of the system keeps running. In microservices, that assumption no longer holds. When a service slows down or starts failing, it doesn’t fail once — it fails repeatedly, often across multiple layers, slowly consuming threads, time, and capacity until the entire system begins to degrade. The circuit breaker pattern exists to break this chain reaction. Not by making failures more graceful, but by stopping the system from continuing to call something that is already failing. By the end of this article, you’ll understand what a circuit breaker is, why retries alone often make outages worse, how it works alongside timeouts and fallbacks, and why it should be treated as a core resilience mechanism in distributed systems rather than an optional safeguard.

The problem: how one slow service collapses everything

To understand why circuit breakers exist, you first need to feel the problem they solve.

Imagine an e-commerce checkout flow. The checkout service calls the payment service. The payment service calls an external gateway. That gateway slows down — not fails, slows. Responses take five seconds instead of 200 milliseconds.

Watch what happens next.

The payment service's thread pool fills up with calls waiting on the slow gateway. The checkout service's calls to payment start timing out, and checkout threads pile up while they wait. Every service that calls checkout now waits on checkout in turn, and its threads pile up as well. The delay climbs the call chain one layer of callers at a time.

One slow external gateway. Entire system degrading.

This is the cascading failure problem. A failure in one service does not stay in that service. It propagates. It amplifies. What begins as a local problem in one integration becomes a global outage.

The deeper issue is that services keep trying. They retry. They wait. They occupy threads while waiting. Those threads are not available to serve other requests. The system does not fail cleanly — it slowly chokes.

The circuit breaker pattern exists to interrupt this chain.

What a circuit breaker is

A circuit breaker is a mechanism that monitors failures to a downstream dependency and, once those failures cross a defined threshold, stops sending requests to it entirely. Instead of continuing to wait, retry, or time out, it fails fast — rejecting calls immediately without even attempting the dependency. After a cooldown period, it allows limited test requests to check whether the dependency has recovered.

When a dependency becomes slow, unstable, or repeatedly failing, the system stops sending it traffic. It protects the rest of the system from being dragged down by a single unhealthy component.

But in real systems, the circuit breaker is not just about stopping calls. It is also about what happens next.

When the circuit is open (that is, not letting calls through to the dependency), the system still has to respond. Instead of waiting for a broken dependency, it can:

- Return cached values, if previously successful responses are available and slightly stale data is acceptable

- Return default values, such as empty lists, neutral scores, or fallback configurations that keep the system usable

- Serve partial responses, where available data is returned while the failed dependency’s portion is gracefully omitted or marked unavailable

- Fail fast with a controlled error, when correctness is critical and no safe fallback exists (for example, payments or financial transactions)

After a cooldown period, the circuit enters a half-open state, where only a small number of test requests are allowed through. If those succeed, normal traffic resumes. If they fail, the circuit opens again and the dependency remains isolated.

This combination of fast failure, controlled recovery, and fallback responses is what makes circuit breakers more than just a protective switch. They become a structured way for systems to remain responsive even when parts of the infrastructure are unstable.

The three states

Every circuit breaker operates in one of three states.

Closed is normal operation. Requests flow through to the dependency. The circuit breaker tracks failures quietly in the background. As long as failures stay below the configured threshold, the circuit remains closed and everything works as expected.

Open is failure mode. The failure threshold has been exceeded. The circuit breaker stops all requests to the dependency. No calls go through. Instead, every request fails immediately — in milliseconds — without attempting to reach the dependency at all.

This is the key insight of the pattern. The circuit breaker does not try to handle the failure. It refuses to participate in it.

Half-open is the recovery test. After a cooldown period, the circuit breaker allows a small number of requests through as a probe. If those succeed, the circuit closes and normal operation resumes. If they fail, the circuit opens again and the cooldown restarts.

Half-open state requires careful configuration. Allow too many test requests and a still-struggling service gets overwhelmed before it has a chance to recover. Allow too few and a recovered service stays unnecessarily cut off.
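The three states and the half-open probe limit can be expressed as a small state machine. The following is a minimal, single-threaded Python sketch under stated assumptions. It is not any library's API. The class and parameter names (`CircuitBreaker`, `failure_threshold`, `cooldown_seconds`, `half_open_max_calls`) are hypothetical. The sketch counts consecutive failures rather than a failure rate over a sliding window, and it has no locking, so concurrent callers would need a lock around the state changes.

```python
import time
from enum import Enum


class State(Enum):
    CLOSED = "closed"
    OPEN = "open"
    HALF_OPEN = "half_open"


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without attempting it."""


class CircuitBreaker:
    """Hypothetical, single-threaded sketch of the closed/open/half-open cycle."""

    def __init__(self, failure_threshold=5, cooldown_seconds=30.0,
                 half_open_max_calls=1, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.cooldown_seconds = cooldown_seconds
        self.half_open_max_calls = half_open_max_calls
        self.clock = clock  # injectable so tests can control time
        self.state = State.CLOSED
        self.failures = 0  # consecutive failures while closed
        self.opened_at = 0.0
        self.half_open_admitted = 0  # probes let through in this half-open phase
        self.half_open_successes = 0

    def call(self, fn, *args, **kwargs):
        if self.state is State.OPEN:
            if self.clock() - self.opened_at < self.cooldown_seconds:
                # Open: fail fast without touching the dependency.
                raise CircuitOpenError("circuit open; failing fast")
            # Cooldown elapsed: move to half-open and start probing.
            self.state = State.HALF_OPEN
            self.half_open_admitted = 0
            self.half_open_successes = 0
        if self.state is State.HALF_OPEN:
            if self.half_open_admitted >= self.half_open_max_calls:
                raise CircuitOpenError("half-open probe limit reached")
            self.half_open_admitted += 1
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self._on_failure()
            raise
        self._on_success()
        return result

    def _on_success(self):
        if self.state is State.HALF_OPEN:
            self.half_open_successes += 1
            if self.half_open_successes >= self.half_open_max_calls:
                self._close()  # enough probes succeeded: resume normal traffic
        else:
            self.failures = 0  # a success breaks the run of consecutive failures

    def _on_failure(self):
        if self.state is State.HALF_OPEN:
            self._open()  # a failed probe re-opens and restarts the cooldown
            return
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self._open()

    def _open(self):
        self.state = State.OPEN
        self.opened_at = self.clock()

    def _close(self):
        self.state = State.CLOSED
        self.failures = 0
```

A caller would wrap each dependency call, for example `breaker.call(fetch_quote, order_id)`, where `fetch_quote` is a hypothetical client function. It would then route `CircuitOpenError` to whatever fallback that dependency has.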

One behaviour worth knowing: when the circuit closes after recovery, the full volume of traffic that was being rejected or diverted to fallbacks returns at once. That surge, landing on a service that has only just recovered, can re-trip the breaker. Some implementations handle this with a gradual traffic ramp-up rather than an immediate full return to normal. If you see circuits that close and open again within seconds, this is often the cause.
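One way to implement such a ramp-up is to admit a linearly growing share of calls after the circuit closes and send the rest to the fallback. `RampUpGate`, its parameters, and the linear schedule are all hypothetical choices made for illustration. Real implementations may use different schedules or admission rules.

```python
import random
import time


class RampUpGate:
    """Hypothetical helper: after a breaker closes, admit a linearly growing
    share of traffic over `ramp_seconds` instead of all of it at once."""

    def __init__(self, ramp_seconds=30.0, min_fraction=0.1,
                 clock=time.monotonic, rng=random.random):
        self.ramp_seconds = ramp_seconds
        self.min_fraction = min_fraction  # share admitted right after closing
        self.clock = clock
        self.rng = rng  # injectable for deterministic tests
        self.closed_at = None  # None means no ramp is in progress

    def on_circuit_closed(self):
        self.closed_at = self.clock()

    def admitted_fraction(self):
        if self.closed_at is None:
            return 1.0
        elapsed = self.clock() - self.closed_at
        if elapsed >= self.ramp_seconds:
            self.closed_at = None  # ramp finished; admit everything from now on
            return 1.0
        progress = elapsed / self.ramp_seconds
        return self.min_fraction + (1.0 - self.min_fraction) * progress

    def allow(self):
        # Calls that are not admitted go to the dependency's fallback instead.
        return self.rng() < self.admitted_fraction()
```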

Before and after: what actually changes

The difference a circuit breaker makes is best understood by walking through the same failure scenario under both conditions.

Without a circuit breaker, a dependency is slow and each call times out after three seconds. With a retry policy of three attempts, each request takes nine seconds to fully fail, and the thread handling that request is blocked for the entire duration. Under any meaningful concurrency — dozens of services calling the same failing dependency — thread pools exhaust quickly, new requests queue, latency spikes, and the system collapses.

With a circuit breaker, the first few calls fail as before. But once the failure threshold is crossed, the circuit opens. Every subsequent call fails in milliseconds. Threads are freed immediately. The rest of the system continues serving traffic normally.
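The thread arithmetic behind this comparison follows from Little's law: average concurrency equals arrival rate multiplied by the time each request spends in the system. The sketch below makes two illustrative assumptions. It assumes 100 requests per second reach the failing dependency, and it assumes an open circuit takes about 1 ms to reject a call. It also ignores backoff delays.

```python
def threads_in_use(requests_per_second, seconds_per_request):
    """Little's law: average concurrency L = arrival rate (lambda) x time in system (W)."""
    return requests_per_second * seconds_per_request


RATE = 100  # hypothetical requests/second reaching the failing dependency

timeout_s = 3.0  # per-attempt timeout from the scenario above
attempts = 3     # three attempts in total, so 9 s per failed request
without_breaker = threads_in_use(RATE, timeout_s * attempts)
with_breaker = threads_in_use(RATE, 0.001)  # assumed ~1 ms to reject when open

print(f"without breaker: {without_breaker:.0f} threads blocked")  # 900
print(f"with breaker open: {with_breaker:.1f} threads busy")      # 0.1
```

With those assumed numbers, a service without a breaker exhausts any thread pool smaller than roughly 900 threads. An open breaker keeps the same traffic to a fraction of one thread.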

The circuit breaker does not make the dependency faster or healthier. It makes your system stop waiting for a dependency that has already proven it will not respond in time.

Why retries alone make things worse

Retries are useful, but they are frequently misunderstood as a sufficient resilience strategy on their own. When a service is experiencing sustained failure, retries do not help — they amplify the problem.

Consider what retries actually do to a struggling dependency. Each retry is an additional request landing on a service that is already overwhelmed. Instead of reducing the load on a failing service, retries increase it. The service receives more traffic precisely when it is least able to handle it, which degrades it further, which causes more timeouts, which triggers more retries.

Retries also dramatically increase thread occupancy. A call that fails after one second and is attempted three times in total holds a thread for three seconds instead of one, and that is before any backoff delay is added. Under concurrency, that multiplier compounds quickly.
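The load amplification gets worse when several layers each retry. Consider the worst case: every attempt fails, and each layer makes the same number of attempts against the layer below it. In that case, the number of calls reaching the bottom dependency multiplies layer by layer. The sketch below assumes three attempts per layer, and the function name is hypothetical.

```python
def calls_reaching_bottom(attempts_per_layer, layers):
    """Worst case when every attempt fails: each layer retries the layer below,
    so one user request fans out into attempts ** layers calls at the bottom."""
    return attempts_per_layer ** layers


for layers in (1, 2, 3):
    print(layers, calls_reaching_bottom(3, layers))
# prints:
# 1 3
# 2 9
# 3 27
```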

Retries and circuit breakers solve different problems and must be used together. Retries handle transient failures — a momentary network glitch that resolves within milliseconds. Circuit breakers handle sustained failures — a service that is genuinely down or pathologically slow. Retries without a circuit breaker amplify sustained failure. Circuit breakers without retries give up too quickly on transient ones.

Timeouts, retries, and circuit breakers as a stack

These three mechanisms are often described as competing choices. They are not. Each answers a different question, and they are designed to work together in layers.

A timeout answers: how long are you willing to wait for a single call? A retry answers: how many times are you willing to try before giving up on this request? A circuit breaker answers: when do you stop trying this dependency altogether?

A typical resilience stack works like this. Call the dependency with a timeout. If the timeout expires, retry with exponential backoff. If retries are exhausted, record a failure against the circuit breaker. Once failures exceed the configured threshold, open the circuit. While the circuit is open, fail immediately without attempting the call. After a cooldown, allow a handful of test requests through. If they succeed, close the circuit and return to normal.
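The stack described above can be sketched in Python with `asyncio`. This is a hedged illustration, not a reference implementation, and it rests on several assumptions:

- `SimpleBreaker` and `call_with_resilience` are hypothetical names.
- Only `asyncio.TimeoutError` and `ConnectionError` are treated as retryable.
- The half-open state admits a single probe.
- The breaker records one failure per request whose retries are exhausted, following the order of steps in the text.

```python
import asyncio


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without attempting it."""


class SimpleBreaker:
    """Hypothetical count-based breaker with a single half-open probe."""

    def __init__(self, failure_threshold=3, cooldown_seconds=30.0):
        self.failure_threshold = failure_threshold
        self.cooldown_seconds = cooldown_seconds
        self.failures = 0
        self.opened_at = None  # loop time when the circuit opened; None = closed
        self.probing = False   # True while the half-open probe is in flight

    def allow(self, now):
        if self.opened_at is None:
            return True  # closed: normal traffic
        if self.probing or now - self.opened_at < self.cooldown_seconds:
            return False  # open: fail fast
        self.probing = True  # half-open: let one probe through
        return True

    def record_success(self):
        self.failures, self.opened_at, self.probing = 0, None, False

    def record_failure(self, now):
        self.probing = False
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = now  # open (or re-open) and restart the cooldown


async def call_with_resilience(fn, breaker, *, timeout_s=1.0, attempts=3,
                               base_backoff_s=0.1):
    """fn is an async function; it is called afresh on every attempt."""
    loop = asyncio.get_running_loop()
    if not breaker.allow(loop.time()):
        raise CircuitOpenError("circuit open: failing fast")  # no call attempted
    last_error = None
    for attempt in range(attempts):
        try:
            result = await asyncio.wait_for(fn(), timeout_s)  # per-call timeout
        except (asyncio.TimeoutError, ConnectionError) as exc:
            last_error = exc  # treated as transient: retry
            if attempt < attempts - 1:
                await asyncio.sleep(base_backoff_s * 2 ** attempt)  # exponential backoff
            continue
        except Exception:
            breaker.record_failure(loop.time())  # non-retryable: count it, stop now
            raise
        breaker.record_success()
        return result
    breaker.record_failure(loop.time())  # retries exhausted: one breaker failure
    raise last_error
```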

Each layer has a distinct job. Do not conflate them or expect one to substitute for another.

Fallback strategies: a business decision, not a technical one

A circuit breaker without a fallback is just a faster failure. When the circuit is open, you cannot call the dependency — but your system still needs to return something to the caller. What that something is depends entirely on the nature of the dependency and what your users can reasonably accept.

The framing that matters: a fallback is not a technical choice. It is a statement about what your system considers acceptable truth when a dependency is unavailable.

A cached response returns the last known good data from a local or distributed cache. This works well for read-heavy data that changes slowly, where a slightly stale answer is acceptable.

A default value returns a neutral placeholder — an empty list, a zero balance, a generic recommendation set. This works for non-critical features where the absence of real data is tolerable.

Partial data returns what is available and clearly marks what is missing. An order page might show item details but flag shipping status as temporarily unavailable, rather than failing the entire response.

Graceful degradation skips the dependency entirely and serves the rest of the response. A missing reviews section is almost always better than an unusable product page.

A fast failure returns an error immediately and makes no attempt to guess. For payments, this is almost always the right answer. You cannot approximate money.
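These strategies map naturally onto small per-dependency wrappers. In the sketch below, `CircuitOpenError` stands in for whatever your breaker raises when it rejects a call. Every function and cache name is hypothetical. Each wrapper encodes one of the fallback choices described above: cached response, default value, partial data, and fast failure.

```python
class CircuitOpenError(Exception):
    """Stand-in for whatever your breaker raises when it rejects a call."""


class DependencyUnavailable(Exception):
    """Controlled error returned to the caller when no safe fallback exists."""


_last_good_prices = {}  # hypothetical in-process cache of successful responses


def get_price(sku, fetch):
    """Cached response: serve the last known good value while the circuit is open."""
    try:
        price = fetch(sku)
    except CircuitOpenError:
        if sku in _last_good_prices:
            return _last_good_prices[sku]
        raise DependencyUnavailable("no cached price available")
    _last_good_prices[sku] = price
    return price


def get_recommendations(user_id, fetch):
    """Default value: an empty list keeps the page usable."""
    try:
        return fetch(user_id)
    except CircuitOpenError:
        return []


def get_order_page(order_id, fetch_items, fetch_shipping):
    """Partial data: return what is available and mark what is missing."""
    page = {"items": fetch_items(order_id), "unavailable": []}
    try:
        page["shipping"] = fetch_shipping(order_id)
    except CircuitOpenError:
        page["shipping"] = None
        page["unavailable"].append("shipping")
    return page


def charge(payment_id, amount_cents, call_gateway):
    """Fast failure: never guess about money."""
    try:
        return call_gateway(payment_id, amount_cents)
    except CircuitOpenError as exc:
        raise DependencyUnavailable("payments temporarily unavailable") from exc
```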

To choose between these, ask what happens to your users when the circuit is open and what level of inaccuracy, incompleteness, or delay they can reasonably tolerate. There is no universal answer. Every dependency deserves its own deliberate decision.

Circuit breakers and bulkheads: a brief distinction

A bulkhead limits how many concurrent requests can be in flight to a given dependency at any moment. It allocates a fixed pool of resources — threads or connections — for each downstream service. If that service slows down, only the allocated resources are affected. The rest of the system continues working.
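A semaphore is one common way to cap in-flight calls; a dedicated thread pool per dependency is the other. The minimal Python sketch below uses the semaphore approach, with hypothetical names and limits. It rejects a call immediately when every slot for that dependency is busy, rather than queueing it.

```python
import threading


class BulkheadFullError(Exception):
    """Raised when a dependency's concurrency budget is used up."""


class Bulkhead:
    """Hypothetical helper: caps concurrent in-flight calls to one dependency."""

    def __init__(self, max_concurrent):
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def call(self, fn, *args, **kwargs):
        # Non-blocking acquire: reject instead of waiting when all slots are busy.
        if not self._slots.acquire(blocking=False):
            raise BulkheadFullError("bulkhead full; rejecting call")
        try:
            return fn(*args, **kwargs)
        finally:
            self._slots.release()  # free the slot even if fn raised


# One bulkhead per downstream service (limits are illustrative), so a slow
# payment gateway can tie up at most 10 concurrent calls, never the whole pool.
payment_bulkhead = Bulkhead(max_concurrent=10)
inventory_bulkhead = Bulkhead(max_concurrent=20)
```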

The distinction from a circuit breaker is important. A circuit breaker is a decision about time and failure rate — when to stop calling a failing dependency. A bulkhead is a decision about concurrency and resource isolation — how many calls can be in flight simultaneously.

They address different dimensions of the same problem. The bulkhead prevents thread pool exhaustion while the circuit breaker is still accumulating evidence to decide whether to open. The circuit breaker then stops new attempts once sustained failure is confirmed. Each covers the gap the other leaves, which is why using both together produces meaningfully better outcomes than either alone.

A mental model to carry forward

In distributed systems, failure is not an event. It is a background condition.

The question is not whether a dependency will fail. It will. The question is how long your system will continue calling it after it has already demonstrated it cannot respond reliably.

The circuit breaker is the moment your system stops hoping things will improve — and starts acting accordingly. It does not make the dependency healthier. It makes your system healthier by refusing to compound the problem.

A monolith gets failure containment for free. A function fails, the stack unwinds, and the rest of the system continues. In microservices, failure containment is designed. You must explicitly decide when to stop calling a failing service. The circuit breaker is that decision.

Make it deliberately.

About N Sharma

Lead Architect at StackAndSystem

N Sharma is a technologist with over 28 years of experience in software engineering, system architecture, and technology consulting. He holds a Bachelor’s degree in Engineering, a DBF, and an MBA. His work focuses on research-driven technology education: explaining software architecture, system design, and development practices through structured tutorials designed to help engineers build reliable, scalable systems.

Disclaimer

This article is for educational purposes only. Assistance from AI-powered generative tools was taken to format and improve language flow. While we strive for accuracy, this content may contain errors or omissions and should be independently verified.