
Last Updated: April 28, 2026 at 14:00

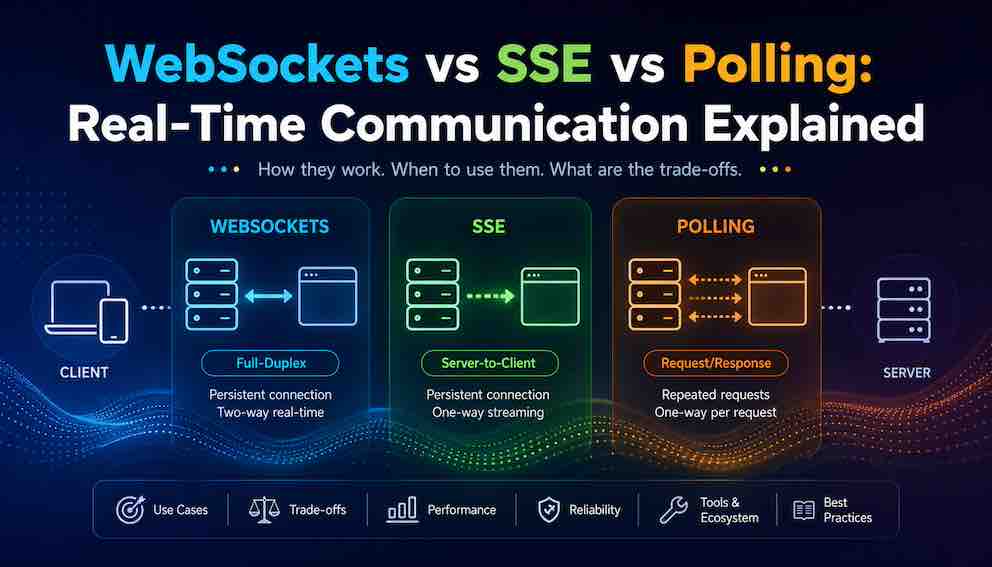

WebSockets vs SSE vs Polling: Real-Time Communication Explained

A practical guide to choosing the right real-time pattern based on failure modes, infrastructure complexity, and real-world constraints

Real-time communication is not one-size-fits-all, and choosing the wrong pattern can break your system in subtle ways. WebSockets offer full bidirectional streaming but add infrastructure complexity. SSE is simpler for server-to-client updates but has hidden limitations around connection recovery. Polling is easy to reason about but inefficient at scale. This guide walks through the decision axes that matter in production (failure modes, backpressure, observability, and cost) so you can pick the right tool for your use case.

The Real Problem: Why Real-Time Communication Confuses Everyone

Imagine you're building a feature that needs to show live data without the user refreshing the page. Maybe it's a chat message that should appear instantly, a stock price that ticks up every second, or a notification badge that updates when something changes. Your goal is simple: get new data from the server to the browser as soon as it happens.

You've probably heard of three main approaches: WebSockets, Server-Sent Events (SSE), and Polling. And you've seen quick comparisons: "WebSockets are fast," "SSE is simpler," "Polling is easy." These are all true, but they only tell you what works when everything is perfect. To build a feature that stays reliable in production, through spotty Wi-Fi, sleepy laptops, and overloaded servers, you need a deeper framework for making the right choice.

Here's the core idea that holds this guide together: these three patterns each excel in different real-world situations, and knowing their strengths helps you match the right tool to your specific needs. Polling shines in simplicity and debugability. SSE delivers efficient server-to-client updates with minimal setup and automatic reconnection built into the browser. WebSockets unlock powerful two-way experiences for chat, gaming, and collaborative apps. Once you understand how they behave under real conditions — not just in demos — you can confidently pick the one that makes your feature robust, reliable, and right for your users.

A Quick Mental Model

Before we get into code or configuration, let's build an intuitive picture of each approach. You're the client (a browser), and you want updates from a server.

Polling is the oldest and simplest idea. Your client asks the server, "Got anything new?" on a fixed schedule — every 5 seconds, every 10 seconds, whatever you choose. The server answers either with new data or an empty response. Think of it like a child in the back seat asking, "Are we there yet?" over and over. It works. It's easy to understand. But most of those questions get a "no," wasting effort. Long polling is a clever variation: the server holds the request open until it actually has data, then responds. This cuts down on empty responses, but you're still opening a new HTTP request every time.

SSE (Server-Sent Events) works like a one-way radio station. The browser opens a single connection to the server and says, "Send me updates whenever you have them." The server then pushes messages as they happen, but the client cannot send data back over that same connection. This is perfect for situations where only the server needs to talk — live sports scores, news tickers, or system notifications.

WebSockets are the most powerful but also the most complex. They create a full, two-way tunnel between the client and server. Once that tunnel is open, either side can send messages freely at any time. Think of a phone call instead of a radio broadcast. This is what you need for chat apps, multiplayer games, or collaborative document editing where both sides need to talk simultaneously.

The real decision lives in the details below, especially what happens when things go wrong.

How Each Technology Actually Works (Under the Hood)

Now let's get technical, but in plain terms.

Polling is just regular HTTP, the same protocol your browser uses to load any website. Every few seconds, the browser makes a normal GET request to a URL like /new-messages. The server responds with new data or an empty body, and the request is complete. The underlying TCP connection may be reused through keep-alive, but nothing stays open waiting for data. No special protocols, no long-lived streams. Because it's plain HTTP, every CDN, proxy, and corporate firewall already understands it without any extra configuration.
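A minimal short-polling loop might look like the TypeScript sketch below. It assumes a hypothetical `fetchUpdates` function that requests an endpoint such as /new-messages and resolves to `{ items: [...] }`; that response shape, the function names, and the injected `sleep` are illustrative assumptions, not any library's API.

```typescript
// Hypothetical response shape for a polling endpoint such as /new-messages.
interface PollResponse<T> {
  items: T[];
}

// Short-polling loop: ask "got anything new?" on a fixed schedule.
// `fetchUpdates` and `sleep` are injected so the loop is easy to test.
async function poll<T>(
  fetchUpdates: () => Promise<PollResponse<T>>,
  onItems: (items: T[]) => void,
  intervalMs: number,
  shouldContinue: () => boolean,
  sleep: (ms: number) => Promise<void> = (ms) =>
    new Promise((resolve) => setTimeout(resolve, ms)),
): Promise<void> {
  while (shouldContinue()) {
    try {
      const { items } = await fetchUpdates();
      // Most cycles come back empty; only call the handler when there is data.
      if (items.length > 0) onItems(items);
    } catch {
      // A failed request is just a skipped cycle; the next one retries.
    }
    await sleep(intervalMs);
  }
}
```

In a browser, `fetchUpdates` could be as simple as `() => fetch("/new-messages").then((r) => r.json())`. The long-polling variation described earlier can reuse the same loop with an `intervalMs` of 0, because the server itself holds each request open until it has data.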

SSE also uses HTTP, but in a persistent way. The browser makes a request to a special endpoint like /events. Instead of responding once and closing, the server sends a response with the text/event-stream content type and keeps it open indefinitely. Whenever new data arrives, the server writes an event: one or more lines starting with data: followed by your message, terminated by a blank line. The browser's built-in EventSource API handles the connection, automatically reconnects if it drops, and parses incoming messages. The critical limitation: this is one-directional, server to client only. To send data to the server, you need a separate normal HTTP request.
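To make the wire format concrete, the sketch below serializes one event in TypeScript. The `formatSseEvent` helper and `SseMessage` type are hypothetical, but the `id:`, `event:`, and `data:` fields are the ones the SSE format defines. A multi-line payload becomes one `data:` line per line, which the browser rejoins with newlines; the `id` value is assumed not to contain line breaks.

```typescript
// Fields of one Server-Sent Event. Only `data` is required.
interface SseMessage {
  data: string;
  event?: string; // custom event name; the browser defaults to "message"
  id?: string; // becomes lastEventId on the client, used for resuming
}

// Serialize one event into the text/event-stream wire format.
function formatSseEvent(msg: SseMessage): string {
  let out = "";
  if (msg.id !== undefined) out += `id: ${msg.id}\n`;
  if (msg.event !== undefined) out += `event: ${msg.event}\n`;
  // Each line of the payload needs its own "data:" prefix.
  for (const line of msg.data.split(/\r\n|\r|\n/)) {
    out += `data: ${line}\n`;
  }
  // A blank line tells the client the event is complete.
  return out + "\n";
}
```

The server writes strings like these to a response it never ends. On the browser side, `new EventSource("/events")` delivers unnamed events to `onmessage`, and named ones to `addEventListener("score", ...)` for an event named `score`.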

WebSockets start their life as HTTP. The browser sends a special Upgrade request to the server saying, "I'd like to switch to the WebSocket protocol." If the server agrees, it replies with 101 Switching Protocols and the connection is upgraded. From that point forward, it's no longer HTTP. It becomes a persistent, full-duplex connection with its own binary framing, where both sides can send messages freely and independently. Messages can be text or binary, and each one carries only a small frame header (2 to 14 bytes) instead of full HTTP headers. But because the traffic is no longer HTTP, individual messages don't appear in standard load balancer or access logs, aren't subject to ordinary HTTP proxy rules, and can't be cached by a CDN; typically only the initial upgrade request is logged.
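The per-message overhead follows from RFC 6455 framing: a 2-byte base header, an extended length field of 2 or 8 bytes for payloads over 125 or 65,535 bytes, and a 4-byte masking key on every frame a client sends to a server. The helper below is a hypothetical illustration of that arithmetic for a single unfragmented frame, not part of any WebSocket library.

```typescript
// Header bytes for one unfragmented WebSocket frame, per RFC 6455 framing.
function wsFrameHeaderBytes(payloadBytes: number, fromClient: boolean): number {
  let header = 2; // FIN/opcode byte + mask bit and 7-bit length byte
  if (payloadBytes > 65_535) header += 8; // 64-bit extended length
  else if (payloadBytes > 125) header += 2; // 16-bit extended length
  if (fromClient) header += 4; // clients must mask every frame they send
  return header;
}
```

So a 100-byte chat message costs 2 bytes of framing from the server and 6 from the client, compared with the several hundred bytes of headers an HTTP request/response cycle typically carries.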

Axis One: Failure Modes and Connection Recovery

This is the most important section. Connections will drop. Networks are unreliable. Mobile phones go through tunnels. Laptops go to sleep. Users walk into elevators. How each pattern behaves when this happens determines whether your system works in production.

Polling is the most resilient. A request fails, the client waits a few seconds, and tries again. No connection state is lost because each request is stateless. If you miss one polling cycle, you catch up on the next one.

SSE has a deceptive resilience. The EventSource API automatically attempts to reconnect, which feels safe, but messages sent while the client was disconnected are silently lost unless you build a replay mechanism. Without event IDs, the server has no idea what the client missed. If you need every message to arrive, SSE alone is not enough: you need message IDs (the id: field in the SSE spec), which the browser sends back in a Last-Event-ID header when it reconnects, and server-side replay logic that uses that header.
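A minimal server-side replay mechanism can be sketched as follows, under stated assumptions: each event gets an increasing numeric `id:`, the server keeps only the most recent events in memory, and the reconnecting browser sends the last ID it saw in the `Last-Event-ID` header. The `ReplayBuffer` class and its capacity are hypothetical; a production system would usually back this with a durable store, and a client that has fallen further behind than the buffer holds needs a full resync.

```typescript
interface BufferedEvent {
  id: number;
  data: string;
}

// Keeps the most recent events so reconnecting clients can catch up.
class ReplayBuffer {
  private events: BufferedEvent[] = [];
  private nextId = 1;

  constructor(private readonly capacity: number) {}

  // Record a new event and return it with its assigned ID.
  append(data: string): BufferedEvent {
    const event = { id: this.nextId++, data };
    this.events.push(event);
    if (this.events.length > this.capacity) this.events.shift(); // drop the oldest
    return event;
  }

  // Events the client missed, given the raw Last-Event-ID header value.
  // Returns null when some missed events were already evicted (client must resync).
  since(lastEventIdHeader: string | undefined): BufferedEvent[] | null {
    if (lastEventIdHeader === undefined) return []; // first connection, nothing missed
    const lastSeen = Number(lastEventIdHeader);
    if (!Number.isInteger(lastSeen)) return null;
    const oldest = this.events.length > 0 ? this.events[0].id : this.nextId;
    if (lastSeen < oldest - 1) return null; // gap is older than the buffer
    return this.events.filter((e) => e.id > lastSeen);
  }
}
```

On each connection, the handler would read `Last-Event-ID`, write the returned events (each with its `id:` line) before resuming the live stream, and send an application-defined "resync" signal when `since` returns null.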

WebSockets offer no automatic reconnection whatsoever. When a WebSocket drops, it just drops. Your application code must detect the disconnection, implement exponential backoff for retries, and track which messages were sent versus acknowledged. The protocol gives you no help here.
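Reconnection therefore usually looks like the sketch below: listen for `close` (a failed connection fires `error` and then `close`), wait, and open a fresh socket, doubling the wait each attempt up to a cap. Random "full jitter" spreads retries so thousands of clients don't reconnect in lockstep after an outage. The `backoffDelay` helper, the jitter strategy, and the constants are illustrative assumptions; tracking which messages were acknowledged is a separate concern.

```typescript
// Exponential backoff with full jitter: a random delay in [0, min(cap, base * 2^attempt)).
function backoffDelay(
  attempt: number,
  baseMs = 500,
  capMs = 30_000,
  random: () => number = Math.random,
): number {
  const ceiling = Math.min(capMs, baseMs * 2 ** attempt);
  return Math.floor(random() * ceiling);
}

// Keep a WebSocket open, reconnecting with backoff after every close.
// Needs a runtime with a global WebSocket (any modern browser).
function connectWithRetry(url: string, onMessage: (data: unknown) => void): () => void {
  let attempt = 0;
  let stopped = false;
  let socket: WebSocket | undefined;

  const open = () => {
    socket = new WebSocket(url);
    socket.onopen = () => {
      attempt = 0; // healthy connection: reset the backoff
    };
    socket.onmessage = (ev) => onMessage(ev.data);
    socket.onclose = () => {
      if (stopped) return;
      setTimeout(open, backoffDelay(attempt++));
    };
  };

  open();
  // The caller invokes this to shut down without triggering a reconnect.
  return () => {
    stopped = true;
    socket?.close();
  };
}
```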

This single axis — what happens when the connection breaks — should drive most of your decision.

Axis Two: Backpressure and Load Behavior

Backpressure is what happens when the sender produces data faster than the receiver can consume it.

Polling has no backpressure problem by design. The client asks for data when it is ready. The server responds. The client controls the rate entirely.

SSE has no built-in flow control. The server can push messages as fast as it wants. If it sends ten events per second but the client can only process five, the browser's internal event queue grows. With sustained overload, the tab may become unresponsive. There is no native mechanism for the client to signal "slow down."
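Because the client cannot push back, one common client-side mitigation is conflation: when updates describe the latest state of something (a price, a score), keep only the newest value per key and render at most once per scheduling tick instead of doing full work for every event. This does not reduce what arrives over the network, but it keeps per-event work tiny. The `Conflator` class below is a hypothetical sketch, and it is only appropriate when intermediate values can safely be skipped, never for a message feed.

```typescript
// Keeps only the newest update per key and flushes them in one batch.
class Conflator<T> {
  private pending = new Map<string, T>();
  private scheduled = false;

  constructor(
    private readonly render: (latest: Map<string, T>) => void,
    // In a browser this would typically wrap requestAnimationFrame.
    private readonly schedule: (flush: () => void) => void,
  ) {}

  // Call from the EventSource message handler; cheap, no rendering here.
  push(key: string, value: T): void {
    this.pending.set(key, value); // overwrites any older, unrendered value
    if (!this.scheduled) {
      this.scheduled = true;
      this.schedule(() => this.flush());
    }
  }

  private flush(): void {
    const batch = this.pending;
    this.pending = new Map();
    this.scheduled = false;
    this.render(batch);
  }
}
```

In a page this might be wired up with `(f) => requestAnimationFrame(() => f())` as the scheduler and an `onmessage` handler that parses each event and calls `push` with a hypothetical symbol and price from the payload.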

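WebSockets don't provide built-in backpressure to application code either: the browser's WebSocket API has no way to pause incoming messages. On the sending side, you can implement basic flow control with the bufferedAmount property, which reports how many bytes have been queued with send() but not yet transmitted. Checking it lets you avoid piling data into the send buffer, though it does not provide true end-to-end backpressure without additional application-level protocols.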
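A sketch of that sender-side flow control, assuming a hypothetical high-water mark of 1 MB: hold messages in a local queue while `bufferedAmount` is above the mark, and drain the queue once it falls. The browser fires no event when `bufferedAmount` drops, so the sketch expects the caller to call `drain()` on a timer. The `SocketLike` interface mirrors just the two WebSocket members used, which keeps the sketch testable outside a browser.

```typescript
// The subset of the browser WebSocket API this sketch relies on.
interface SocketLike {
  readonly bufferedAmount: number;
  send(data: string): void;
}

// Queues outgoing messages locally while the socket's send buffer is full.
class ThrottledSender {
  private queue: string[] = [];

  constructor(
    private readonly socket: SocketLike,
    private readonly highWaterMark = 1_000_000, // bytes; illustrative value
  ) {}

  send(message: string): void {
    this.queue.push(message);
    this.drain();
  }

  // Call periodically, e.g. setInterval(() => sender.drain(), 50).
  drain(): void {
    while (this.queue.length > 0 && this.socket.bufferedAmount < this.highWaterMark) {
      this.socket.send(this.queue.shift()!);
    }
  }

  get pending(): number {
    return this.queue.length;
  }
}
```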

Axis Three: Infrastructure and Deployment Complexity

Polling works everywhere without any special configuration. No sticky sessions, no load balancer changes, no proxy timeout tuning. Deploy anywhere and it just works.

SSE works almost everywhere, with one common trap. SSE uses a persistent HTTP connection, and many load balancers and reverse proxies have a default idle timeout of 60 seconds. If your SSE connection stays open longer than that without traffic, the proxy silently terminates it. You need to raise the idle timeout (or send periodic heartbeat traffic) and disable response buffering at the proxy level. Many CDNs (e.g., Cloudflare) also need extra care with SSE, because they are architected for short-lived request-response cycles and may buffer or time out a long-lived stream.
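Two common mitigations are sketched below: response headers that discourage buffering, and an SSE comment line (any line starting with `:`, which EventSource ignores) written more often than the shortest idle timeout on the path. `X-Accel-Buffering: no` is a header nginx recognizes for turning off proxy buffering per response; other proxies need their own settings. The 15-second default interval and the `write` callback are assumptions, not recommendations for any particular load balancer.

```typescript
// Response headers for an SSE endpoint.
const SSE_HEADERS: Record<string, string> = {
  "Content-Type": "text/event-stream",
  "Cache-Control": "no-cache",
  "X-Accel-Buffering": "no", // understood by nginx; other proxies need their own config
};

// A comment line: ignored by EventSource, but it is traffic, so idle timers reset.
const HEARTBEAT = ": keep-alive\n\n";

// Writes a heartbeat every `intervalMs`; returns a function that stops it.
// `write` is whatever appends to the open response (hypothetical here).
function startHeartbeat(write: (chunk: string) => void, intervalMs = 15_000): () => void {
  const timer = setInterval(() => write(HEARTBEAT), intervalMs);
  return () => clearInterval(timer);
}
```

Stop the heartbeat when the client disconnects, or the timer will keep writing to a closed response.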

WebSockets require the most infrastructure work. Your load balancer must support the WebSocket upgrade handshake. With multiple server instances, any per-connection state held in one instance's memory is lost when a client reconnects to a different instance, and a message meant for a user has to reach whichever instance holds that user's socket. You typically need session affinity (sticky sessions) to keep a client on one instance and, once users on different instances need to reach each other, a shared store plus a pub-sub layer to route messages. Many cloud providers have specific WebSocket pricing tiers and connection limits. Corporate firewalls and transparent proxies frequently block or mangle WebSocket upgrades entirely.
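The pub-sub routing can be sketched as follows: every instance subscribes to one shared channel; any instance that needs to reach a user publishes, and each instance delivers only to the sockets it holds locally. The `Bus` interface stands in for something like Redis pub/sub, and the in-memory implementation exists only so the sketch runs; all names here are hypothetical.

```typescript
type Envelope = { userId: string; payload: string };

// Stand-in for a shared pub-sub system spanning all server instances.
interface Bus {
  publish(msg: Envelope): void;
  subscribe(handler: (msg: Envelope) => void): void;
}

class InMemoryBus implements Bus {
  private handlers: Array<(msg: Envelope) => void> = [];
  publish(msg: Envelope): void {
    for (const h of this.handlers) h(msg);
  }
  subscribe(handler: (msg: Envelope) => void): void {
    this.handlers.push(handler);
  }
}

// One server instance: holds some connections and delivers only to those.
class Instance {
  private local = new Map<string, (payload: string) => void>();

  constructor(private readonly bus: Bus) {
    bus.subscribe(({ userId, payload }) => this.local.get(userId)?.(payload));
  }

  // Called when a user's WebSocket connects to this instance.
  attach(userId: string, send: (payload: string) => void): void {
    this.local.set(userId, send);
  }

  detach(userId: string): void {
    this.local.delete(userId);
  }

  // Any instance can target any user; the bus finds whoever holds the socket.
  sendTo(userId: string, payload: string): void {
    this.bus.publish({ userId, payload });
  }
}
```

This sketch allows one connection per user and makes no delivery guarantees; Redis pub/sub, for instance, does not store messages for subscribers that are not listening.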

Teams regularly choose WebSockets for their elegance and then spend weeks untangling proxy and load balancer configuration. Build with the infrastructure you actually have, not the infrastructure you assume.

Axis Four: Client Environment Constraints

Mobile battery life is a genuine concern. Polling wakes the device radio on every interval, which drains battery significantly faster than a persistent connection. SSE and WebSockets use a single persistent connection, which is much more power-efficient. For mobile clients, this alone can be a decisive factor.

Browser support is largely a non-issue today. SSE via EventSource is not supported in Internet Explorer at all. WebSockets work in IE10+ with some edge cases. Polling works in every browser ever shipped. If you still need IE11 support — for enterprise internal tools, for example — this constraint matters.

Corporate and restricted networks often block or downgrade WebSocket connections. Some network proxies treat the protocol switch as a security risk, or simply don't understand it, and terminate the connection. SSE over HTTPS usually passes through because it looks like a normal long-lived HTTPS response. Polling always passes through. If you are building anything deployed inside enterprise environments, test on those networks before shipping.
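Because a blocked upgrade often shows up as a connection that never opens rather than a clear error, one common hybrid strategy is to try WebSockets with a deadline and fall back to polling if the socket hasn't opened in time. The sketch below assumes hypothetical `openWebSocket` and `startPolling` functions supplied by your app; the 5-second deadline is illustrative.

```typescript
type Transport = "websocket" | "polling";

// Minimal view of one connection attempt: it either opens or fails.
interface SocketAttempt {
  onOpen(cb: () => void): void;
  onFail(cb: () => void): void;
  close(): void;
}

// Try WebSocket first; fall back to polling on failure or timeout.
function connectWithFallback(
  openWebSocket: () => SocketAttempt,
  startPolling: () => void,
  deadlineMs = 5_000,
): Promise<Transport> {
  return new Promise((resolve) => {
    let settled = false;
    const attempt = openWebSocket();

    const fallBack = () => {
      if (settled) return; // a later close after a successful open is not our concern
      settled = true;
      clearTimeout(timer);
      attempt.close();
      startPolling();
      resolve("polling");
    };

    const timer = setTimeout(fallBack, deadlineMs);
    attempt.onOpen(() => {
      if (settled) return;
      settled = true;
      clearTimeout(timer);
      resolve("websocket");
    });
    attempt.onFail(fallBack);
  });
}
```

With a real socket, `onOpen` and `onFail` would register listeners for the WebSocket `open` and `close` events. Drops after a successful open still need the reconnection logic discussed under failure modes.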

Axis Five: Connection Limits and Server Capacity

Every open connection consumes resources. This is invisible with polling (connections open and close quickly), but becomes a hard constraint with SSE and WebSockets.

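The browser limit. Over HTTP/1.1, browsers cap concurrent connections per domain at a low number, typically six, and that cap is shared across every tab open to the same domain. Each SSE stream holds one of those slots for as long as it is open, so a user with a few tabs open can exhaust the pool, and later requests to that domain stall without an obvious error. HTTP/2 multiplexes many streams over one connection and largely removes this problem for SSE, while WebSockets count against a separate, much larger browser limit. Either way, if you need many real-time data sources, design your backend to aggregate them into a single connection.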
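One way to aggregate many real-time sources into a single connection is to wrap every message in an envelope that names its logical channel, then dispatch on the client. The sketch below assumes a hypothetical JSON envelope `{ channel, payload }` and works the same whether messages arrive over one EventSource or one WebSocket. SSE also offers a built-in variant of this idea: the `event:` field combined with `addEventListener` for that event name.

```typescript
// Hypothetical envelope: every message names the logical stream it belongs to.
interface ChannelEnvelope {
  channel: string;
  payload: unknown;
}

// Routes messages from one physical connection to many logical subscribers.
class ChannelMux {
  private handlers = new Map<string, Set<(payload: unknown) => void>>();

  // Returns an unsubscribe function.
  subscribe(channel: string, handler: (payload: unknown) => void): () => void {
    const set = this.handlers.get(channel) ?? new Set<(payload: unknown) => void>();
    this.handlers.set(channel, set);
    set.add(handler);
    return () => {
      set.delete(handler);
    };
  }

  // Feed raw message text from the single connection (e.g. event.data).
  dispatch(raw: string): void {
    const { channel, payload } = JSON.parse(raw) as ChannelEnvelope;
    this.handlers.get(channel)?.forEach((h) => h(payload));
  }
}
```

Wiring it up is a single line such as `source.onmessage = (e) => mux.dispatch(e.data)`.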

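The server limit. Each open WebSocket or SSE connection consumes memory and a file descriptor on your server, and many Linux systems default to a soft limit of 1,024 open file descriptors per process until you raise it. Depending on the runtime and how much state you keep per connection, a typical server might handle 3,000–10,000 concurrent connections before struggling, while tuned event-driven servers can hold considerably more. That sounds like a lot, until you have 100,000 users. Beyond that, you need horizontal scaling or specialized servers designed for high concurrency.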
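The arithmetic behind "until you have 100,000 users" is worth making explicit. Using the rough range of 3,000 to 10,000 connections per server quoted above, and ignoring headroom for failover and deploys (an assumption that flatters the result), the sketch below computes the minimum server count.

```typescript
// Minimum servers needed to hold `concurrentConnections` open at once,
// given a per-server capacity. Real deployments need extra headroom.
function serversNeeded(concurrentConnections: number, perServerCapacity: number): number {
  return Math.ceil(concurrentConnections / perServerCapacity);
}

console.log(serversNeeded(100_000, 10_000)); // 10 (optimistic end of the range)
console.log(serversNeeded(100_000, 3_000)); // 34 (pessimistic end of the range)
```

All of those instances must also sit behind a load balancer that can hold every one of those connections at once.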

The load balancer limit. Your load balancer also has connection limits, and depending on the product or pricing tier, the cap on concurrent connections may be somewhere in the 10,000–50,000 range. Cloud providers often offer "WebSocket-friendly" load balancers at higher price tiers. Check your limits before you hit them.

What this means for your choice:

- Polling largely sidesteps this. Connections are short-lived, so the limit that matters is request throughput rather than concurrent connections, and stateless HTTP servers scale that horizontally with standard infrastructure.

- SSE and WebSockets give you real-time updates but limit your maximum concurrent users unless you scale aggressively.

- If you expect massive scale (hundreds of thousands of concurrent users), polling or a hybrid approach might actually be cheaper and simpler than fighting connection limits.

A real example: A startup builds a live dashboard with WebSockets. In testing with 100 users, everything works perfectly. At launch, 50,000 users connect. The load balancer hits its connection limit. New users can't connect at all. The fix requires migrating to a different load balancer and re-architecting their backend for horizontal scaling — weeks of work they didn't plan for.

Know your limits before you commit to a pattern.

Axis Six: Security and Authentication

Every real-time connection needs to answer one question: Is this user allowed to receive this data? How you handle authentication depends on which pattern you choose.

Polling is the simplest. Each polling request is a normal HTTP request — just like loading a webpage or calling an API. You can use cookies, bearer tokens in Authorization headers, or whatever your existing login system already uses. Each request is checked independently. Nothing new to learn.

SSE has a catch that surprises most beginners. The browser's built-in tool for SSE is called EventSource. It's easy to use (just new EventSource('/updates')), but it has a limitation: its constructor accepts only a URL and a withCredentials option, so there is no way to add custom headers like Authorization: Bearer your-token.

So how do you authenticate with SSE? You have three options:

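- Use cookies. If your user already has a session cookie (the same cookie that keeps them logged in on your site), the browser sends it automatically with a same-origin SSE request. For a cross-origin endpoint, create the EventSource with { withCredentials: true } and allow credentials in your CORS response headers. This works great, but only if you're using cookie-based authentication.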

- Put the token in the URL. Example: /updates?token=abc123. This works, but it's less secure: the token ends up in server, proxy, and load balancer access logs, and in any monitoring tool that records full URLs. Only use this for non-sensitive data or internal tools.

- Skip EventSource and build your own SSE with fetch(). Modern browsers support reading data as a stream using fetch() and ReadableStream. This gives you full control over headers, but requires significantly more code. Most teams don't need this complexity.
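For reference, a fetch-based SSE client can be sketched in TypeScript as below: it sends an `Authorization` header, reads the body through a `ReadableStream` reader, and parses the event stream itself. Simplifications, stated plainly: the parser handles `\n` and `\r\n` line endings but not a bare `\r`, it ignores the `retry:` field, and `readSse` never reconnects, so reconnection and `Last-Event-ID` resumption become your code's job. The names `SseParser` and `readSse` are hypothetical.

```typescript
interface SseEvent {
  event: string; // "message" unless the server sent an event: field
  data: string;
  id?: string; // last event ID seen so far, for resuming after a reconnect
}

// Incremental parser for the text/event-stream format.
class SseParser {
  private buffer = "";
  private dataLines: string[] = [];
  private eventType = "";
  private lastId: string | undefined;

  constructor(private readonly onEvent: (e: SseEvent) => void) {}

  // Feed decoded text; chunks may split lines or events anywhere.
  push(chunk: string): void {
    this.buffer += chunk;
    let newline: number;
    while ((newline = this.buffer.indexOf("\n")) !== -1) {
      let line = this.buffer.slice(0, newline);
      this.buffer = this.buffer.slice(newline + 1);
      if (line.endsWith("\r")) line = line.slice(0, -1);
      this.processLine(line);
    }
  }

  private processLine(line: string): void {
    if (line === "") return this.dispatch(); // a blank line ends the event
    if (line.startsWith(":")) return; // comment, e.g. a heartbeat
    const colon = line.indexOf(":");
    const field = colon === -1 ? line : line.slice(0, colon);
    let value = colon === -1 ? "" : line.slice(colon + 1);
    if (value.startsWith(" ")) value = value.slice(1);
    if (field === "data") this.dataLines.push(value);
    else if (field === "event") this.eventType = value;
    else if (field === "id") this.lastId = value;
    // "retry" and unknown fields are ignored in this sketch.
  }

  private dispatch(): void {
    if (this.dataLines.length > 0) {
      this.onEvent({ event: this.eventType || "message", data: this.dataLines.join("\n"), id: this.lastId });
    }
    this.dataLines = [];
    this.eventType = "";
  }
}

// Open an SSE stream with a bearer token. Does NOT reconnect on its own.
async function readSse(
  url: string,
  token: string,
  onEvent: (e: SseEvent) => void,
  signal?: AbortSignal,
): Promise<void> {
  const res = await fetch(url, {
    headers: { Authorization: `Bearer ${token}`, Accept: "text/event-stream" },
    signal,
  });
  if (!res.ok || res.body === null) throw new Error(`SSE request failed: ${res.status}`);
  const reader = res.body.getReader();
  const decoder = new TextDecoder();
  const parser = new SseParser(onEvent);
  for (;;) {
    const result = await reader.read();
    if (result.done) break;
    parser.push(decoder.decode(result.value, { stream: true }));
  }
}
```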

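WebSockets give you a few more options, with one catch of their own. The connection starts as an HTTP upgrade request, and that request carries the page's cookies, so cookie-based sessions work without extra effort. However, the browser's WebSocket constructor, like EventSource, does not let you set custom headers such as Authorization; only non-browser clients can add arbitrary headers to the upgrade request. In the browser, your choices are a cookie, a token in the URL (with the same logging caveat as SSE), or a dedicated "auth" message sent immediately after the connection opens.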
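The "auth message first" option needs a server that refuses to act on anything until the first message proves who the client is. The sketch below models that as a small per-connection gate; `verifyToken`, the `{ type: "auth", token }` message shape, and the close reasons are hypothetical.

```typescript
// Hypothetical verifier: returns the user ID for a valid token, or null.
type VerifyToken = (token: string) => string | null;

type GateResult =
  | { action: "authenticated"; userId: string }
  | { action: "deliver"; userId: string; message: unknown }
  | { action: "close"; reason: string };

// Per-connection gate: nothing is delivered until a valid auth message arrives.
class AuthGate {
  private userId: string | null = null;

  constructor(private readonly verifyToken: VerifyToken) {}

  handle(raw: string): GateResult {
    let msg: unknown;
    try {
      msg = JSON.parse(raw);
    } catch {
      return { action: "close", reason: "malformed message" };
    }
    // Already authenticated: pass the message on with the caller's identity.
    if (this.userId !== null) return { action: "deliver", userId: this.userId, message: msg };

    // Otherwise the very first message must be { type: "auth", token: "..." }.
    const fields = (typeof msg === "object" && msg !== null ? msg : {}) as { type?: unknown; token?: unknown };
    const token = fields.token;
    if (fields.type !== "auth" || typeof token !== "string") {
      return { action: "close", reason: "first message must authenticate" };
    }
    const userId = this.verifyToken(token);
    if (userId === null) return { action: "close", reason: "invalid token" };
    this.userId = userId;
    return { action: "authenticated", userId };
  }
}
```

The caller closes the socket on any `close` result, and should also start a timer when the connection opens that closes it if no `authenticated` result arrives within a few seconds.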

Persistent connections like SSE and WebSockets do not automatically re-check authentication after the initial connection is established. If token expiry or session invalidation is required, it must be enforced at the application layer through periodic validation, heartbeat checks, or connection renewal strategies.
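One simple enforcement strategy is sketched below: record each connection's credential expiry when it authenticates, and sweep on a timer, closing connections whose credentials have expired so the client must reconnect and authenticate again. The `ConnectionRegistry` class, its `close` callback, and the idea of renewing via a refreshed token are hypothetical; session revocation would need a similar check against your session store.

```typescript
interface TrackedConnection {
  expiresAt: number; // epoch ms when the token or session stops being valid
  close: (reason: string) => void;
}

class ConnectionRegistry {
  private connections = new Map<string, TrackedConnection>();

  add(id: string, conn: TrackedConnection): void {
    this.connections.set(id, conn);
  }

  // Extend a connection's lifetime, e.g. after the client sends a refreshed token.
  renew(id: string, expiresAt: number): void {
    const conn = this.connections.get(id);
    if (conn) conn.expiresAt = expiresAt;
  }

  // Close and forget every connection whose credentials expired by `now`.
  // Returns how many were closed.
  sweep(now: number = Date.now()): number {
    let closed = 0;
    for (const [id, conn] of this.connections) {
      if (conn.expiresAt <= now) {
        conn.close("credentials expired");
        this.connections.delete(id);
        closed++;
      }
    }
    return closed;
  }
}
```

A server would run `setInterval(() => registry.sweep(), 30_000)` or similar; the sweep interval bounds how long an expired credential can keep receiving data.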

Axis Seven: Observability and Debuggability

When something goes wrong at 3 AM, can you figure out why?

Polling is the easiest to debug by a wide margin. Every request appears in your access logs with a URL, status code, response time, and payload. You can reproduce any request with curl. Standard APM and tracing tools understand HTTP natively.

SSE is harder to observe. The connection shows as a single long-running request in your logs — you see it open, and then nothing for hours. Individual messages are invisible unless you add explicit logging at the application layer.

WebSockets are the hardest. The upgraded connection is no longer HTTP, so individual messages do not appear in standard access logs at all; at best you see the initial upgrade request. You need purpose-built tooling to monitor WebSocket message flows. Debugging typically means verbose application-level logging, which is often not enabled in production, and browser developer tools, which you cannot open on your users' machines.
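A common way to claw back observability for both SSE and WebSockets is to emit one structured log line per message at the application layer. The sketch below wraps an inbound-message handler to do that; the field names, the connection ID scheme, and logging through a plain function are assumptions, and in practice you would sample messages or omit payloads to control log volume.

```typescript
// One structured log record per inbound message.
interface MessageLog {
  ts: string; // ISO-8601 time the message arrived
  connId: string; // your own ID for the connection, assigned when it opens
  bytes: number; // UTF-8 size of the raw message
  type?: string; // application-level "type" field, if the payload is JSON and has one
  handlerMs: number; // time spent in the handler
}

// Best-effort extraction of a "type" field; non-JSON payloads simply have none.
function messageType(raw: string): string | undefined {
  try {
    const parsed = JSON.parse(raw);
    return typeof parsed?.type === "string" ? parsed.type : undefined;
  } catch {
    return undefined;
  }
}

// Wraps a handler so every message yields one JSON log line, even if the handler throws.
function withMessageLogging(
  connId: string,
  handler: (raw: string) => void,
  log: (line: string) => void = console.log,
): (raw: string) => void {
  return (raw) => {
    const start = Date.now();
    try {
      handler(raw);
    } finally {
      const entry: MessageLog = {
        ts: new Date(start).toISOString(),
        connId,
        bytes: new TextEncoder().encode(raw).length,
        type: messageType(raw),
        handlerMs: Date.now() - start,
      };
      log(JSON.stringify(entry));
    }
  };
}
```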

There is a reason many teams start features with polling even when they know it is suboptimal. Debugging polling problems at 3 AM is straightforward. The same problem on WebSockets can take hours.

Axis Eight: Data Delivery Semantics

Does your application need every message, in order, exactly once?

Polling makes no guarantees. If you poll every five seconds and three updates happen in that window, you only see the latest state. That is fine for a stock ticker. It is a serious problem for a message feed.
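If a polled feed must not skip updates, the usual fix is to poll for changes rather than current state: the client sends the ID of the last message it saw, and the server returns everything after it from a persistent store. The sketch below shows that server-side query against a sorted in-memory array standing in for the store; the `afterId` cursor, the page limit, and the message shape are assumptions.

```typescript
interface StoredMessage {
  id: number; // strictly increasing, assigned by the store
  text: string;
}

// Return up to `limit` messages newer than `afterId`, oldest first.
// `store` must be sorted by id; a real system would query a database instead.
function messagesAfter(store: StoredMessage[], afterId: number, limit = 100): StoredMessage[] {
  const result: StoredMessage[] = [];
  for (const msg of store) {
    if (msg.id > afterId) {
      result.push(msg);
      if (result.length === limit) break;
    }
  }
  return result;
}
```

The client would poll something like /new-messages?after=<last id> and advance its cursor to the last ID returned, so three updates inside one interval all arrive on the next poll.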

SSE gives you ordered delivery within a single connection. If the server sends event A then event B, you receive them in that order. But if the connection drops, any events sent during the gap are lost unless you implement replay using the Last-Event-ID header. Without that, SSE is at-most-once delivery.

WebSockets also give you ordered delivery within a single connection, but with no built-in delivery guarantees or acknowledgment system. A message that enters the TCP send buffer will be lost if the connection drops before it flushes. Reliable delivery requires application-level acknowledgments and retry logic — nothing the protocol handles for you.
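Application-level acknowledgments can be sketched as an outbox and an inbox: every outgoing message gets a sequence number and stays in the outbox until the peer acks it; after a reconnect, everything still unacknowledged is resent; the receiver processes each sequence number once and re-acks duplicates so the sender stops retrying. The `Outbox` and `Inbox` classes and the `{ seq, body }` shape are hypothetical. Together they give at-least-once sending with deduplication on receipt, but only while both sides keep this state; a real receiver would also track a high-water mark instead of an ever-growing set.

```typescript
interface Sequenced {
  seq: number;
  body: string;
}

// Sender side: keeps messages until they are acknowledged.
class Outbox {
  private nextSeq = 1;
  private unacked = new Map<number, Sequenced>();

  constructor(private send: (msg: Sequenced) => void) {}

  post(body: string): void {
    const msg = { seq: this.nextSeq++, body };
    this.unacked.set(msg.seq, msg);
    this.send(msg); // may be lost if the connection drops before it flushes
  }

  // Called when the peer reports it has processed `seq`.
  ack(seq: number): void {
    this.unacked.delete(seq);
  }

  // After reconnecting: switch to the new connection and resend unacked messages in order.
  resendAll(send: (msg: Sequenced) => void): void {
    this.send = send;
    for (const msg of this.unacked.values()) this.send(msg);
  }
}

// Receiver side: processes each seq once and acks every delivery, duplicates included.
class Inbox {
  private seen = new Set<number>();

  constructor(
    private readonly process: (body: string) => void,
    private readonly sendAck: (seq: number) => void,
  ) {}

  receive(msg: Sequenced): void {
    if (!this.seen.has(msg.seq)) {
      this.seen.add(msg.seq);
      this.process(msg.body);
    }
    this.sendAck(msg.seq); // re-ack duplicates so the sender stops retrying
  }
}
```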

For systems where missing a single event is a serious problem, the right answer is usually a message queue (Kafka, SQS, Redis Streams) combined with your real-time transport — not relying on the transport layer alone.

Axis Nine: Cost at Scale

Three distinct cost dimensions come into play.

Compute cost. Polling generates many short requests. Ten thousand clients polling every five seconds means 120,000 server requests per minute. That is real, measurable load. SSE and WebSockets create far fewer requests but hold connections open, consuming memory and file descriptors for each client.

Network cost. HTTP polling carries request and response headers on every cycle — often several hundred bytes of overhead for small payloads. SSE and WebSockets have negligible per-message overhead after the initial connection.
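The compute and network figures above can be checked with a few lines of arithmetic. The sketch below reproduces the 10,000-client, five-second example; the 500 bytes of header overhead per request/response cycle is an assumption standing in for "several hundred bytes", so substitute numbers measured from your own traffic.

```typescript
// Load generated by short polling, before counting any payload bytes.
function pollingLoad(clients: number, intervalSeconds: number, overheadBytesPerCycle: number) {
  const requestsPerSecond = clients / intervalSeconds;
  return {
    requestsPerSecond,
    requestsPerMinute: requestsPerSecond * 60,
    overheadBytesPerDay: requestsPerSecond * overheadBytesPerCycle * 86_400,
  };
}

const load = pollingLoad(10_000, 5, 500);
console.log(load.requestsPerSecond); // 2000
console.log(load.requestsPerMinute); // 120000
console.log(load.overheadBytesPerDay / 1e9); // 86.4 (GB of headers per day)
```

The server pays for that traffic even when every response is empty, whereas a persistent connection pays its setup cost once and then only a few bytes of framing per message.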

Infrastructure cost. Polling is stateless, which means horizontal scaling is trivial. Persistent connections require session affinity or external state — stickier, more expensive infrastructure. Cloud load balancers often price WebSocket connections differently than standard HTTP, sometimes significantly.

For a chat application with ten thousand concurrent users, a well-tuned WebSocket or SSE server can handle the load on a handful of mid-sized instances. The same application on naive short-interval polling would require substantially more capacity. For low-frequency updates with simple infrastructure requirements, polling may actually be cheaper end-to-end once you factor in the ops complexity of managing persistent connections.

Anti-Patterns Worth Naming

WebSockets for simple one-way notifications. You only need occasional server-to-client updates. You choose WebSockets because they feel powerful. The result is weeks of infrastructure debugging for a feature SSE would have handled in an afternoon. The cost of sophistication here is real.

Polling for a chat application. You choose polling because it is familiar. The result is high server load, missed messages between poll intervals, and battery drain on mobile. Users notice. This is the wrong tool for the problem.

SSE without a replay mechanism for critical updates. You choose SSE over WebSockets to avoid complexity. The result is silent data loss when connections drop. Users miss events and never know it. They blame your product, not their network.

No network fallback strategy. You ship WebSockets and assume they work everywhere. Users behind corporate firewalls cannot use your feature. There are no error messages — just silence. Testing on a restrictive network before shipping is a must.

Decision Framework: Five Constraints, Then Choose

Evaluate your situation against five questions. Note which pattern each answer points to, and see what emerges.

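Constraint 1: Do you need two-way communication? If both sides need to send frequently over a single connection, WebSockets is the standard native browser option. Polling and SSE only carry data from server to client; the client has to send its messages as separate HTTP requests.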

Constraint 2: Can you lose occasional messages? If no — if every event must arrive — you need either WebSockets with application-level acknowledgment and replay, or polling backed by a persistent message store. SSE without a replay mechanism will drop messages on reconnection.

Constraint 3: What are your infrastructure limits? If you cannot configure load balancer timeouts or cannot ensure session affinity, polling is the safest choice. If you have control over your infrastructure, SSE is viable. WebSockets require the most configuration.

Constraint 4: What is your team's operational experience? If no one on your team has debugged a persistent connection in production, start with polling or SSE. Add WebSockets when you have a specific need and the team is ready to operate them.

Constraint 5: Who are your users, and where are they? Mobile users need persistent connections to protect battery life. Corporate users behind firewalls may need polling or SSE as fallbacks. Consumer web users on modern networks can usually handle any of the three.

Quick Reference

Use WebSockets when:

- You need bidirectional communication (chat, collaborative editing, multiplayer)

- You have control over your infrastructure

- Your team has experience with persistent connections

- You will implement application-level acknowledgments and replay for reliability

- Battery efficiency on mobile matters

Use SSE when:

- You only need server-to-client updates (notifications, scores, dashboards, log tailing)

- Infrastructure complexity is a real concern

- You can configure load balancer timeouts or tolerate periodic reconnections

- You implement message ID replay for important events

- You prefer staying in the HTTP ecosystem

Use Polling when:

- Update frequency is low (every ten seconds or more is comfortable)

- You have no control over your infrastructure (serverless functions, restricted environments)

- You need maximum compatibility across networks and browsers

- The team has no experience with persistent connections

- You are building a simple internal tool with modest user counts

Use a hybrid approach when:

- Users may be behind restrictive networks (fall back from WebSockets to long polling)

- Different features have genuinely different needs

- You are building a library or SDK that must work everywhere

Your Final Takeaway

The best real-time solution is not the fastest one. It is the one whose failure modes you understand and can tolerate.

Polling fails by being inefficient — but that failure is completely transparent and easy to debug. SSE fails by losing messages during disconnections unless you build replay. WebSockets fail by dropping connections silently unless you build reconnection and acknowledgment logic from scratch.

Understand your infrastructure before committing. Understand your team's operational capabilities. Understand what happens to your users in a tunnel, behind a corporate proxy, or on a slow mobile connection.

Then make your choice. Start simple. Mix patterns where it makes sense. And know that the right answer for your system today may be different from the right answer in a year.

About N Sharma

Lead Architect at StackAndSystem

N Sharma is a technologist with over 28 years of experience in software engineering, system architecture, and technology consulting. He holds a Bachelor’s degree in Engineering, a DBF, and an MBA. His work focuses on research-driven technology education—explaining software architecture, system design, and development practices through structured tutorials designed to help engineers build reliable, scalable systems.

Disclaimer

This article is for educational purposes only. Assistance from AI-powered generative tools was taken to format and improve language flow. While we strive for accuracy, this content may contain errors or omissions and should be independently verified.