
Last Updated: April 17, 2026 at 17:30

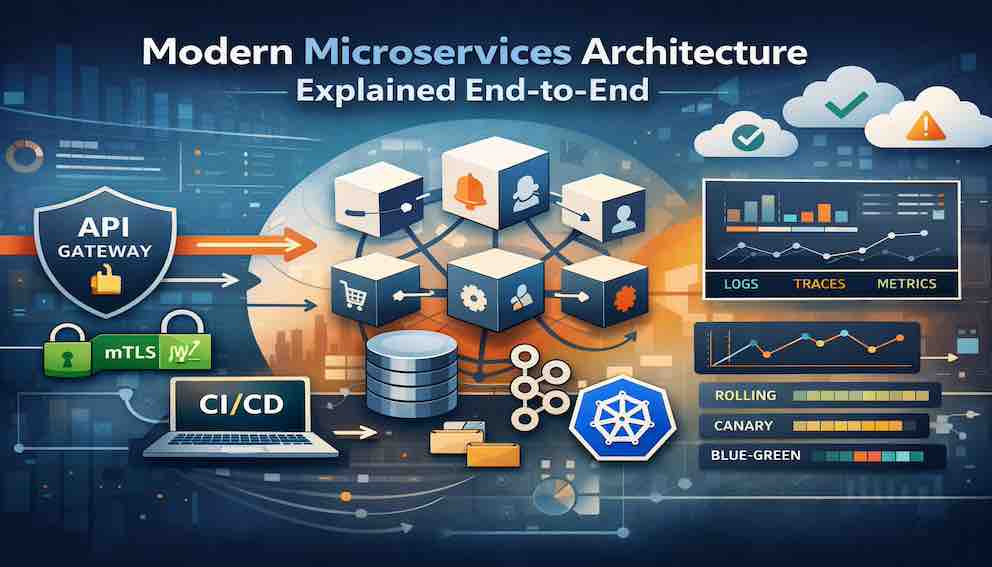

Modern Microservices Architecture Explained End-to-End

How Production Systems Work: DevOps, Observability, and Failure Handling

This article walks through a modern microservices system end-to-end, following a single request as it moves through edge routing, identity, service execution, reliability mechanisms, data propagation, and observability. It shows how real production systems are not static architectures but continuous flows shaped by timeouts, retries, events, and partial failures. The key insight is that microservices only make sense when you design for failure as the default state, not the exception. In practice, system reliability comes less from individual patterns and more from how well they are composed into a single coherent runtime.

Many of the building blocks of modern microservices — circuit breakers, CQRS, Kubernetes, CI/CD pipelines, and distributed tracing — are now standard parts of everyday engineering practice. Each of these patterns is well understood in isolation and widely applied in real production systems. The interesting challenge emerges when they operate together inside a single evolving system, where trade-offs interact and decisions at one layer ripple through others. What becomes valuable at that point is a clear mental model for how these pieces fit together across a working production system.

This article builds that model by exploring the major layers of a modern distributed architecture, showing not just what each part does, but why it exists and how it behaves under real production constraints. We will move through edge routing, identity, service design, reliability mechanisms, data and event flow, observability, and operations. At each stage, the focus is on how the components connect, how they influence one another, and what role they play in the system as a whole.

Think of it less as an architecture reference and more as a guided tour through a living system. The patterns described reflect a common modern microservices setup, though real-world implementations often vary in tools, topology, and emphasis.

The Core Thesis: A System Is a Flow, Not a Diagram

Before we begin the tour, it helps to establish a foundational idea that reframes how we think about architecture.

Architecture diagrams are not wrong — they are incomplete by design. They represent systems as static structures: boxes, arrows, services, and databases with clean boundaries. This abstraction is useful for communication and planning, but it does not capture how production systems actually behave.

In reality, a system is a continuous flow of requests, events, failures, retries, deployments, and observability signals. It is dynamic rather than static, constantly shifting under load and change. It behaves in ways that are not fully visible in any single diagram, because those diagrams are snapshots of structure, not motion.

This distinction changes how we reason about systems.

When we treat a system as a static structure, we ask: “What does this service do?” When we treat it as a flow, we ask: “What happens to a request when this service is slow, unavailable, or partially failing? What happens when messages are duplicated or delayed?” These second-order questions are where production systems are actually designed and understood.

So throughout this article, the guiding question is simple: what is happening to a request right now, at this point in the system?

The Six-Layer Mental Model

Before diving into the system itself, it helps to establish a simple way of looking at it.

A modern microservices architecture is not a single structure but a combination of concerns that operate simultaneously — how requests enter, how trust is established, where logic executes, how failures are handled, where data lives, and how the system explains itself. These concerns overlap in practice, but separating them conceptually makes it easier to reason about what is happening at any point in a request’s journey.

In this article, we will use a six-layer mental model as a way to organise that complexity. It is not a strict architectural blueprint, but a lens for understanding how different parts of the system contribute to the whole.

Everything we discuss maps into these six layers:

1. Edge Layer — Where requests enter the system. Load balancing, TLS termination, API gateway, rate limiting, routing.

2. Identity Layer — Where trust is established. Authentication, JWT propagation, service-to-service identity, zero trust boundaries.

3. Service Layer — Where business logic lives. Microservice responsibilities, communication patterns, contract design.

4. Reliability Layer — Where systems refuse to die. Timeouts, retries, circuit breakers, bulkheads, graceful degradation.

5. Data and Event Layer — Where state lives and propagates. Databases, event streams, eventual consistency, the async backbone.

6. Observability and Operations Layer — Where the system explains itself. Logs, metrics, traces, incident response, delivery pipelines.

These layers are not independent. Observability cuts across all of them. Reliability thinking applies from the edge to the database. The data layer shapes what every other layer can do. But keeping these six layers in mind gives you a map for the territory ahead.

1. The Edge Layer — Where Every Request Begins

A request does not arrive at your service. It arrives at your system's boundary, which is quite a different thing.

In modern systems, that boundary is typically composed of several layers working in concert: a load balancer, an API gateway, and often a content delivery network or edge network in front of both. Understanding what each layer does — and more importantly, what each layer decides — is essential to understanding everything downstream.

The Load Balancer

The load balancer's job sounds simple: distribute traffic. In practice, it is the first layer of resilience in your system.

A well-configured load balancer performs health checks on your services and removes unhealthy instances from rotation automatically. It handles TLS termination, which means your internal services can communicate without managing certificates individually. It handles connection draining when instances are shutting down — ensuring in-flight requests complete before the instance disappears.

The decision to terminate TLS at the load balancer rather than at individual services is not an obvious one. It introduces a trust assumption: traffic between the load balancer and your services travels unencrypted, so the internal network itself must be trusted. In exchange, it simplifies certificate management dramatically and reduces CPU overhead on compute-intensive services. In high-security environments, this is complemented by mutual TLS inside the cluster, which we will return to in the identity layer.

The API Gateway

The API gateway is where the real work of edge handling happens, and it is one of the most commonly underutilised components in microservices architectures.

At its core, the gateway is responsible for several things that you absolutely do not want individual services handling independently. Authentication initiation happens here. Rate limiting happens here. Request routing happens here. These three alone justify its existence.

Beyond these basics, modern API gateways handle request transformation (normalising different API versions into a consistent internal format), response aggregation (combining responses from multiple services into a single client response, a technique closely associated with the Backend for Frontend pattern), and circuit breaking at the edge.

One failure mode most teams discover too late: the gateway as a single point of failure. In production systems, the gateway itself must be redundant, deployed across multiple availability zones. The gateway protects your services from failure, but it needs its own redundancy story.
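To make the rate limiting mentioned above concrete, here is a minimal sketch of a token bucket, one common algorithm a gateway might apply per client or per API key. The class name, parameters, and injectable clock are illustrative assumptions, not the API of any particular gateway product.

```python
import time


class TokenBucket:
    """Allows bursts up to `capacity`, refilled at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock        # injectable so the sketch can be tested
        self.tokens = capacity    # start with a full bucket
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False  # a gateway would typically answer HTTP 429 here
```

A real gateway would keep one bucket per caller and usually store the counters in a shared cache so that every gateway replica enforces the same limit.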

The Edge Is a Control Plane

Here is the key insight: the edge layer is not just entry. It is the system's first and most cost-effective control plane.

Decisions made at the edge — about authentication, rate limiting, routing, and degradation — protect every service behind it. They are far cheaper to make here, at the boundary, than to distribute across dozens of services. Every capability you add at the edge is a capability that every service behind it inherits for free.

2. Identity, Trust, and Zero Trust Architecture

With the request inside the gate, the first question every service will ask is: who sent this? And the answer to that question is surprisingly complex.

Authentication Versus Authorisation

These two terms are so often conflated that it is worth being precise.

Authentication is the act of verifying identity: this request was sent by user ABC, or by the payments service, or by an admin console. Authorisation is the act of checking permissions: user ABC is allowed to view this resource, but not delete it. They are different operations, and conflating them leads to architectures that are either too permissive or impossibly complex.

Modern systems handle authentication at the edge using standards like OAuth 2.0 and OpenID Connect (OIDC). The flow works roughly like this: a user authenticates with an identity provider (your own auth service, Auth0, Cognito, or similar), receives a signed JWT, and attaches that token to every subsequent request. The API gateway validates the token's signature using the identity provider's public key, which it can cache so that no network call is needed per request, and passes the validated identity downstream as a trusted header.

In this model, services downstream do not need to validate the token themselves. They trust the gateway's assertion, which is only safe when they cannot be reached except through the gateway. This is a deliberate architectural decision: centralise authentication once rather than distributing the responsibility across every service.

JWT Propagation

The JWT that the gateway validates typically contains claims: structured data about the authenticated identity — user ID, roles, permissions. These claims are forwarded to services as request headers. Services use them for authorisation decisions without additional network calls, which is important in a distributed system where latency accumulates quickly.

The trade-off is that JWTs are stateless and cannot be invalidated without a blocklist. If a user's permissions change or a token is compromised, the token remains technically valid until it expires. Production systems handle this with short expiry windows (typically 15 minutes to an hour for access tokens), long-lived refresh tokens that can be revoked, and in high-security contexts, a token blocklist that services can query cheaply.
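The signature and expiry checks described in this section can be sketched with the standard library alone. One important assumption: the article describes verification with the identity provider's public key (an asymmetric algorithm such as RS256), whereas this sketch swaps in HS256 with a shared secret purely to stay dependency-free. The function names are hypothetical, and a production gateway would use a maintained JWT library instead.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


def _b64url_decode(part: str) -> bytes:
    return base64.urlsafe_b64decode(part + "=" * (-len(part) % 4))


def _sign(signing_input: str, secret: bytes) -> bytes:
    return hmac.new(secret, signing_input.encode(), hashlib.sha256).digest()


def issue_token(claims: dict, secret: bytes) -> str:
    """Hypothetical helper standing in for the identity provider."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    signing_input = f"{header}.{payload}"
    return f"{signing_input}.{_b64url_encode(_sign(signing_input, secret))}"


def verify_token(token: str, secret: bytes, now: Optional[float] = None) -> dict:
    """Return the claims if signature and expiry check out, else raise ValueError."""
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        claims = json.loads(_b64url_decode(payload_b64))
        signature = _b64url_decode(sig_b64)
    except ValueError as exc:  # wrong segment count, bad base64, or bad JSON
        raise ValueError("malformed token") from exc
    if not isinstance(header, dict) or not isinstance(claims, dict):
        raise ValueError("malformed token")
    if header.get("alg") != "HS256":  # pin the algorithm; never let the token choose
        raise ValueError("unexpected algorithm")
    expected = _sign(f"{header_b64}.{payload_b64}", secret)
    if not hmac.compare_digest(signature, expected):
        raise ValueError("bad signature")
    if claims.get("exp", 0) <= (time.time() if now is None else now):
        raise ValueError("token expired")
    return claims
```

Note what the sketch cannot do: a token that passes both checks is accepted even if the user's permissions changed a minute ago, which is exactly why short expiry windows and blocklists exist.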

Service-to-Service Identity with mTLS

User authentication covers requests coming from outside the system. But in a microservices architecture, most requests are service-to-service: the orders service calling the inventory service, the notification service calling the user service.

One approach modern systems use is mutual TLS (mTLS). In mTLS, both parties in a connection present certificates, proving their identity to each other. A compromised service or a rogue actor inside the cluster cannot simply impersonate another service. Managing certificates at this scale manually would be impractical — service meshes like Istio or Linkerd handle this automatically, issuing short-lived certificates to every service instance and rotating them without operator intervention.

An alternative approach, often used for its simplicity, is OAuth 2.0 client credentials flow (or similar token-based service authentication). In this model, a service authenticates with an identity provider and receives a signed JWT representing its identity and permissions. This token is then attached to outbound requests, allowing downstream services to verify both the caller’s identity and its authorised actions without relying on network-level trust.

The trade-off between these approaches is largely one of control and complexity. mTLS provides strong, infrastructure-level identity with automatic encryption and is well suited to zero trust environments. Token-based approaches are easier to adopt and integrate naturally with existing authentication systems, but rely on correct token handling and validation at the application layer. Many production systems use a combination of both: mTLS for transport-level trust and JWTs for conveying identity and authorisation context.

Zero Trust Architecture

The mental model unifying all of this is zero trust: assume no request, regardless of where it originates, should be trusted by default.

This sounds paranoid. It is not. In a microservices system, the network interior is often less controlled than the perimeter. Shared Kubernetes clusters, compromised third-party dependencies, lateral movement from a breached service: these are real threat vectors, and each one exploits a design that treats interior network traffic as trusted. Zero trust says: verify every request, from every source, every time. Authenticate it. Authorise it. Log it.

3. The Microservices Service Layer — Where Business Logic Lives

We now have an authenticated, routed request arriving at the service responsible for handling it. This is where most architecture discussions begin, as it is where business logic lives. By this stage, however, upstream layers have already influenced how the service will behave.

The Principle of Single Responsibility

A microservice should own one bounded context. The inventory service owns inventory. It does not share a database with the orders service. It does not embed pricing logic because that is convenient. It does not call the user service to enrich its responses, because that creates coupling that will eventually become a liability.

The payoff is independent deployability: you can change and deploy the inventory service without touching, testing, or coordinating with anything else. In large organisations, this is transformative. Teams move at the speed of their service, not at the speed of the slowest thing they are coupled to.

Communication Patterns: Synchronous vs Asynchronous

Services communicate in two fundamentally different ways, and choosing between them is one of the most consequential design decisions in a distributed system.

Synchronous communication — REST or gRPC — means the calling service sends a request and waits for a response. Simple, predictable, easy to debug. Also fragile: if the called service is slow or unavailable, the caller is affected directly. In a chain of synchronous calls — service A calls B calls C calls D — a slowdown anywhere propagates upward, and latency compounds.

Asynchronous communication — via a message queue or event stream — means the calling service publishes a message and immediately continues, without waiting. The consumer processes the message independently, potentially much later. This decouples the services in time: a temporary outage in the consumer does not affect the producer. The trade-off is complexity: debugging is harder, ordering is difficult to guarantee, and you must reason about eventual consistency.

The practical rule: use synchronous communication when you need a real-time response and both services are expected to be available. Use asynchronous when the action can be deferred, when the services have different availability characteristics, or when you want to guarantee delivery across failures.

Contracts Over Implementation

One of the most valuable habits in microservices development is treating service interfaces as contracts, not implementation details.

The orders service does not need to know how the inventory service works internally. It needs to know what it can send and what it will receive. This distinction matters when services evolve. If the inventory service changes its internal data model but honours its contract — accepting the same requests, returning the same response shape — dependent services are unaffected.

Consumer-driven contract testing, which we will cover in the testing section, is the mechanism that enforces this discipline.

4. The Reliability Layer — Distributed System Failure Handling

Here is the uncomfortable truth about distributed systems: failure is not an exception. It is the default.

Networks partition. Services crash. Dependencies slow down. Disks fill up. A service that only works when everything works is not production-ready.

The reliability layer is not a set of features you add when you have time. It is the skeleton of your system's survivability.

Timeouts

Every network call must have a timeout. This sounds obvious, but it is violated constantly.

A service that calls a dependency without a timeout will hang indefinitely if the dependency hangs. A few concurrent hangs will exhaust your thread pool or connection pool, and suddenly your service is unavailable — not because of anything it did wrong, but because it was too polite to give up.

Good timeout values require thought. Too aggressive, and you fail legitimate requests. Too generous, and you allow failures to cascade. A useful principle: your timeout should be tighter than your caller's timeout, creating a timeout budget that propagates correctly up the call chain. If the gateway has a 5-second timeout, the service handling its request should time out its own dependencies at 3 seconds. The chain stays sane.
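One way to keep a timeout budget consistent along the call chain is to carry an absolute deadline with the request and derive each downstream timeout from whatever time remains. The sketch below assumes a hypothetical `Deadline` helper; the names and the `reserve_s` parameter are illustrative.

```python
import time


class Deadline:
    """An absolute deadline shared by every call made on behalf of one request."""

    def __init__(self, budget_s: float, clock=time.monotonic):
        self._clock = clock
        self._expires_at = clock() + budget_s

    def remaining(self) -> float:
        return max(0.0, self._expires_at - self._clock())

    def timeout_for(self, cap_s: float, reserve_s: float = 0.0) -> float:
        """Timeout for one downstream call, capped and leaving `reserve_s` for local work."""
        left = self.remaining() - reserve_s
        if left <= 0:
            raise TimeoutError("deadline exceeded before the call was made")
        return min(cap_s, left)
```

With a 5-second budget at the gateway, a service would ask for `deadline.timeout_for(cap_s=3.0)` before each dependency call. Early calls get the full 3 seconds, later calls get only what is left, and a call that could not possibly finish in time is never started.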

Retries and Exponential Backoff

Some failures are transient: a network blip, a brief service restart, a momentary resource exhaustion. For these, retrying is correct.

But naive retries — immediate, unlimited, from every client — make things dramatically worse. If a service is struggling under load and every client retries immediately, the service now receives multiplied traffic precisely when it is least able to handle it.

Exponential backoff solves this: each retry waits longer than the last, with random jitter to prevent multiple clients from retrying in lockstep. A typical policy waits 100ms after the first failure, 200ms after the second, 400ms after the third, up to some maximum. Combined with a retry limit, this gives transient failures time to resolve without turning a blip into a cascade.
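The policy above can be sketched as follows. The sketch uses the "full jitter" variant, where each wait is drawn uniformly between zero and the exponential ceiling; that choice, along with the function names and defaults, is an assumption for illustration.

```python
import random
import time


def backoff_delays(base_s=0.1, factor=2.0, max_s=5.0, attempts=4):
    """Yield the un-jittered ceiling for each retry: 0.1, 0.2, 0.4, ... capped at max_s."""
    for attempt in range(attempts):
        yield min(max_s, base_s * factor ** attempt)


def call_with_retries(operation, is_transient, sleep=time.sleep,
                      rng=random.random, **policy):
    """Run `operation`, retrying transient failures with jittered exponential backoff."""
    for ceiling in backoff_delays(**policy):
        try:
            return operation()
        except Exception as exc:
            if not is_transient(exc):
                raise               # permanent failures are not worth retrying
            sleep(rng() * ceiling)  # full jitter: wait somewhere in [0, ceiling)
    return operation()              # final attempt; its failure reaches the caller
```

With the defaults, the operation is tried at most five times: the initial call plus four retries. The `is_transient` predicate is where the caller decides which errors deserve a retry at all.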

The crucial caveat: only retry idempotent operations. A GET request is defined by HTTP to be safe and idempotent, so it can be retried as long as the service honours those semantics. A POST that creates a payment cannot be retried safely unless idempotency keys are in place. An idempotency key is a unique identifier attached to a request that lets the server detect and safely ignore duplicate requests. Without it, a retried payment might charge a customer twice.
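A minimal sketch of server-side idempotency key handling, assuming a hypothetical in-memory `PaymentService`. A real implementation would persist keys in a durable store, expire them after some window, and guard against two concurrent requests carrying the same key.

```python
class PaymentService:
    """Toy handler that remembers the response produced for each idempotency key."""

    def __init__(self):
        self._results = {}  # idempotency key -> stored response
        self.charges = []   # side effects actually performed

    def create_payment(self, idempotency_key: str, customer: str,
                       amount_cents: int) -> dict:
        if idempotency_key in self._results:
            # Duplicate request: replay the first response, charge nothing.
            return self._results[idempotency_key]
        self.charges.append((customer, amount_cents))
        response = {"payment_id": len(self.charges), "status": "charged"}
        self._results[idempotency_key] = response
        return response
```

The client generates the key once per logical payment and reuses it on every retry, so a retry after a lost response returns the original result instead of charging again.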

Circuit Breakers

Even with timeouts and retries, a persistently failing dependency is a problem. Every call you make ties up resources and adds latency. The circuit breaker pattern addresses this by tracking the failure rate of a dependency and, when failures exceed a threshold, opening the circuit — subsequent calls fail immediately without attempting to reach the dependency.

After a configurable window, the circuit enters a half-open state, allowing a small number of trial requests. If those succeed, the circuit closes. If they fail, it opens again.

This is valuable for two reasons. First, fast failure rather than slow failure — a call that returns in 1ms is far less damaging than one that hangs for 30 seconds. Second, it gives the failing dependency breathing room to recover, rather than hammering it with traffic while it struggles.
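The three states can be sketched in a few dozen lines. This version makes two simplifying assumptions: it counts consecutive failures rather than tracking a failure rate over a window, and it is not thread-safe, so the "small number of trial requests" in the half-open state is effectively one at a time. All names are illustrative.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is rejected without reaching the dependency."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_after_s:
            return "half-open"
        return "open"

    def call(self, operation):
        if self.state == "open":
            raise CircuitOpenError("failing fast; dependency not attempted")
        try:
            result = operation()
        except Exception:
            self.failures += 1
            # A failed trial while half-open, or too many failures while
            # closed, opens the circuit and restarts the wait window.
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0
        self.opened_at = None  # success closes the circuit
        return result
```

Callers wrap each dependency call as `breaker.call(lambda: client.get(...))` and treat `CircuitOpenError` as a cue to fall back rather than wait.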

Bulkheads

In shipbuilding, a bulkhead limits flooding: if one compartment floods, the others remain intact. In distributed systems, the same principle applies to resource pools.

Without bulkheads, a misbehaving dependency can exhaust your entire thread pool or connection pool, affecting every other dependency your service has. With bulkheads, each dependency gets its own isolated resource pool. A failing dependency exhausts its own pool. Your connections to other dependencies remain unaffected.
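A bulkhead can be as simple as one semaphore per dependency. The sketch below assumes a thread-based service and rejects calls immediately when a compartment is full instead of queueing them; the class and error names are hypothetical.

```python
import threading


class BulkheadFullError(RuntimeError):
    """Raised when a dependency's compartment has no free slots."""


class Bulkhead:
    """Caps concurrent calls to one dependency so it cannot starve the others."""

    def __init__(self, name: str, max_concurrent: int):
        self.name = name
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def run(self, operation):
        # Reject immediately rather than queueing when the compartment is full.
        if not self._slots.acquire(blocking=False):
            raise BulkheadFullError(f"{self.name} bulkhead is full")
        try:
            return operation()
        finally:
            self._slots.release()
```

Giving the inventory client and the pricing client separate `Bulkhead` instances means a hung inventory service can occupy at most its own slots, while pricing calls continue to find capacity.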

Graceful Degradation

The combined intent of all these patterns is graceful degradation: when parts of your system are unavailable, serve a diminished but functional response rather than failing completely.

The canonical example: if a product recommendation service is unavailable, the right response is not to fail the entire product page. It is to render the page without recommendations. The user experiences reduced service, not an error. The purchase flow continues.

This is a design choice made deliberately. Services need to decide: what is my minimum viable response when my dependencies are unavailable? What can I cache? What can I skip? What can I approximate? Thinking through these questions before failures happen is the difference between a resilient system and a fragile one.
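The product page example might look like this in code. The function and its two fetcher parameters are hypothetical; the point is only the asymmetry between the core dependency, whose failure propagates, and the optional one, whose failure is absorbed.

```python
def render_product_page(product_id, fetch_product, fetch_recommendations):
    """Build the page; recommendations are optional, product data is not."""
    # Core data: if this fails there is no page to show, so let it raise.
    page = {"product": fetch_product(product_id), "degraded": False}
    try:
        page["recommendations"] = fetch_recommendations(product_id)
    except Exception:
        # Optional data: serve the page without it and flag the degradation
        # so that it shows up in metrics and logs.
        page["recommendations"] = []
        page["degraded"] = True
    return page
```

In practice the fallback could also be a cached or generic list rather than an empty one, which is the "what can I approximate?" question in concrete form.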

5. Observability — How a Distributed System Explains Itself

Observability is not a layer in the traditional sense. It is a cross-cutting concern that spans every other layer in this system.

In many architecture guides, it appears toward the end, often after core components have been introduced. In practice, however, observability is what makes those components understandable and operable in a real environment. The patterns in the reliability layer — timeouts, circuit breakers, retries — become meaningful when you can see when they activate, how often they occur, and what impact they have on the system. Similarly, deployment strategies are only effective when you can observe how a new version behaves under real traffic.

Observability acts as the system’s feedback loop. It allows engineers to understand behaviour, diagnose issues, and make informed decisions as the system evolves.

A system that is well-instrumented can be operated with confidence, because its behaviour is visible and its signals are interpretable.

The Three Pillars

Logs are the oldest and most universally understood form of observability. In microservices, they must be structured — emitted as machine-readable JSON rather than free-form text — and centralised. A log that lives only on the machine that generated it is useless when that machine is gone.

The discipline of structured logging is about making logs computable. Instead of "User ABC completed checkout with order xyz," emit a JSON object with fields user_id, order_id, event, timestamp, duration. Now you can query across all checkout completions, aggregate by duration, filter by error type, and correlate with other events. Free-form text is for humans. Structured logs are for systems.
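As a sketch of what this looks like with Python's standard `logging` module, the formatter below emits one JSON object per log entry. Passing the structured fields through `extra={"fields": ...}` is a convention assumed here for illustration, not something the logging module prescribes.

```python
import json
import logging
import time


class JsonFormatter(logging.Formatter):
    """Render each record as a single JSON object instead of free-form text."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "timestamp": time.strftime("%Y-%m-%dT%H:%M:%SZ",
                                       time.gmtime(record.created)),
            "level": record.levelname,
            "event": record.getMessage(),
        }
        # Structured fields supplied by the caller via `extra={"fields": {...}}`.
        entry.update(getattr(record, "fields", {}))
        return json.dumps(entry)
```

After attaching the formatter to a handler, the checkout example becomes `logger.info("checkout_completed", extra={"fields": {"user_id": "ABC", "order_id": "xyz", "duration": 0.42}})`, and every field is queryable in the central log store.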

Metrics are aggregated measurements of system behaviour over time: request rates, error rates, latency percentiles, queue depths, resource utilisation. Unlike logs, metrics are designed for alerting and dashboarding — they tell you whether the system is healthy right now.

The RED method (Rate, Errors, Duration) is a useful template for service-level metrics. The USE method (Utilisation, Saturation, Errors) covers resource-level metrics. Together they give you a complete picture of a service's health without drowning in noise.

SLIs (Service Level Indicators) and SLOs (Service Level Objectives) formalise this further: you define which indicators matter — p99 latency of the checkout endpoint, error rate of the payment service — and set objectives. SLOs create a shared language between engineering and the business about what "the service is healthy" actually means.

Distributed traces are the tool that ties everything together in a microservices context. A trace represents a single request's journey through the system, composed of spans — one per service or operation. Each span records its start time, duration, outcome, and parent span ID. Together, they form a complete causal map of the request.

A user reports that checkout was slow. Without traces, answering this requires log archaeology across six services and significant luck. With traces, you pull up the trace and see exactly which span was slow, which service owned it, and what the context was. Thirty seconds instead of thirty minutes.

Correlation IDs

Distributed tracing is the gold standard, but even a simpler mechanism — correlation IDs — provides enormous value at low cost.

A correlation ID is a unique identifier generated at the edge for every request. Every service that handles the request logs the correlation ID alongside every log entry. When something goes wrong, you filter your centralised logs by correlation ID and see every event, across every service, in the order they occurred.
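A minimal sketch of the mechanism, using a context variable so the ID follows the request through threads and async tasks. The header name `X-Correlation-ID` is a common convention assumed here, and `begin_request` is a hypothetical hook that a web framework's middleware would call.

```python
import contextvars
import logging
import uuid

correlation_id = contextvars.ContextVar("correlation_id", default="-")


class CorrelationIdFilter(logging.Filter):
    """Stamp every log record with the current request's correlation ID."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.correlation_id = correlation_id.get()
        return True


def begin_request(headers: dict) -> dict:
    """Reuse the inbound ID if present, otherwise mint one.

    Returns the headers to attach to outbound calls so the ID keeps travelling.
    """
    cid = headers.get("X-Correlation-ID") or str(uuid.uuid4())
    correlation_id.set(cid)
    return {"X-Correlation-ID": cid}
```

The edge mints the ID, every service reuses it, and a formatter that includes `%(correlation_id)s` makes it appear on every line. Real HTTP headers are case-insensitive, so a production version would normalise the header name before the lookup.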

This sounds trivially simple. It is.

Alert on Symptoms, Not Causes

One more principle worth calling out: alert on symptoms, not causes.

Do not alert when CPU is at 80%. Alert when error rate exceeds your SLO threshold. The CPU spike is a cause. The error rate is the symptom — the thing users actually experience. Let your dashboards tell you about causes after you know there is a symptom. Alert fatigue — too many alerts, too many false positives — means engineers learn to ignore alerts. The critical alert that signals a real outage is lost in the noise.

Good alerting is difficult to get right and requires constant tuning.

6. The Data Layer and Event-Driven Architecture in Microservices

Data is where microservices architecture becomes most explicit about trade-offs, particularly around ownership, consistency, and communication.

Database Per Service

A foundational principle is that each service owns its data exclusively. Other services do not access its database directly; they interact through APIs or events. This separation allows services to evolve independently, avoiding tight coupling at the data layer.

In practice, this often means duplicating data across services and communicating through APIs or events rather than direct database queries. This duplication is intentional: it allows services to avoid synchronous dependencies, reduce latency, and continue operating even when other services are unavailable. By maintaining local copies of the data they need, services can respond quickly and remain resilient, while still staying consistent over time through event-driven updates. At the same time, each service retains the flexibility to model and store data in a way that best fits its domain.

The Consistency Spectrum

Distributed systems require explicit choices about consistency.

Within a service, strong consistency typically applies — writes are immediately visible to subsequent reads. Across services, systems rely on eventual consistency, where updates propagate asynchronously and different parts of the system may briefly diverge.

This is a natural property of distributed systems. The key is understanding which operations require immediate consistency and which can tolerate delay. Financial transactions demand strict consistency, while user activity feeds or product catalogues can update asynchronously without impacting user experience.

The Event-Driven Backbone

Event-driven communication provides a way to connect services without tight coupling.

Systems such as Apache Kafka, RabbitMQ, or Amazon SQS allow services to publish events that other services consume independently. This enables decoupling, durability, and load smoothing — producers emit events without needing to know who consumes them, and consumers process them at their own pace.

In systems like Kafka, the event log becomes a durable record of system activity. Consumers track their position in the log and can replay events to rebuild state, support new features, or debug historical behaviour.
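The offset-and-replay idea can be illustrated with a toy in-memory log. This is a conceptual sketch only: the `EventLog` and `Consumer` classes are hypothetical and do not mirror the Kafka client API, partitions, or consumer groups.

```python
class EventLog:
    """Append-only log; events are never modified or removed."""

    def __init__(self):
        self._events = []

    def append(self, event: dict) -> int:
        self._events.append(event)
        return len(self._events) - 1  # offset of the new event

    def read_from(self, offset: int) -> list:
        return self._events[offset:]


class Consumer:
    """Each consumer tracks its own position, independent of the producer."""

    def __init__(self, log: EventLog, handler):
        self.log = log
        self.handler = handler
        self.offset = 0

    def poll(self) -> int:
        batch = self.log.read_from(self.offset)
        for event in batch:
            self.handler(event)
            self.offset += 1  # advance only after the handler succeeds
        return len(batch)

    def replay(self) -> int:
        """Rewind to the start and reprocess the whole history."""
        self.offset = 0
        return self.poll()
```

Because the offset advances only after the handler returns, a crash mid-batch leads to redelivery rather than loss, which is one reason consumers need to tolerate duplicates.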

Domain Events as System Truth

Events are most powerful when treated as representations of what has happened, rather than simple notifications.

A “user-created” event, for example, becomes an immutable record that other services can rely on. Each service builds its own local view of the world by subscribing to and processing these events. Over time, system state emerges from the accumulation of these event streams.

Data Duplication as a Design Choice

In microservices, data duplication is often a deliberate design decision.

For example, instead of calling the user service on every request, the orders service may store a local copy of customer data and keep it updated through events. This reduces latency and avoids synchronous dependencies, while still allowing data to converge over time.

In many cases, duplication also improves correctness — preserving historical context (such as a customer name at the time of an order) rather than overwriting it with the latest value.
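Both points, the event-fed local copy and the preserved historical value, fit in a short sketch. The `OrdersService` class and the `user-updated` event type are hypothetical additions for illustration; only `user-created` appears in the discussion above.

```python
class OrdersService:
    """Keeps a local copy of customer names, fed by events from the user service."""

    def __init__(self):
        self.customers = {}  # user_id -> latest known name
        self.orders = []

    def on_event(self, event: dict) -> None:
        if event["type"] in ("user-created", "user-updated"):
            self.customers[event["user_id"]] = event["name"]

    def place_order(self, user_id: str, item: str) -> dict:
        order = {
            "user_id": user_id,
            "item": item,
            # Snapshot: the name as it was when the order was placed.
            "customer_name": self.customers[user_id],
        }
        self.orders.append(order)
        return order
```

Placing an order needs no call to the user service, and a later rename updates the local copy without rewriting the name stored on past orders.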

7. The Runtime Layer — Where Code Actually Runs in Production

All of the services, patterns, and data stores we have described need to run somewhere. The runtime layer executes, scales, and connects them.

Containers and Kubernetes

Containers have become the standard packaging format for microservices. A container bundles a service and all its dependencies into a portable, immutable artefact. The same image that runs in development runs in staging and production, so differences between environments shrink to configuration.

Kubernetes has become the dominant container orchestration platform. Its job is to take a desired state — "I want six replicas of the orders service running, each with 512MB of memory and 0.5 CPU cores, distributed across availability zones" — and make it real. It handles scheduling, restarts failed containers, rolls out updates, and scales based on load.

Understanding Kubernetes requires accepting that it introduces significant operational complexity. Networking, storage, RBAC, secrets management, ingress controllers: the surface area is substantial. This complexity is justified for large systems with high deployment frequency. For smaller systems, managed platforms (AWS ECS, or managed Kubernetes offerings such as Azure AKS and DigitalOcean Kubernetes) provide container orchestration with far lower operational overhead.

Scaling and Statelessness

Horizontal scaling — adding more instances of a service — is the preferred scaling strategy in microservices. The prerequisite is statelessness: your service instances must be interchangeable. No instance should hold state that another instance would need.

Sessions, request context, temporary data — all of this must live outside the instance, in a database or cache. An instance that can be killed at any moment without affecting others is a healthy instance. One that cannot be killed without data loss is a liability.

The Kubernetes Horizontal Pod Autoscaler handles the scaling mechanics automatically: it increases replica counts when utilisation exceeds a target and decreases them when load drops.
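The core of the autoscaler's decision is a single ratio, documented by Kubernetes as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue). The sketch below reproduces that calculation with replica bounds; it leaves out the tolerance band, stabilisation windows, and handling of unready pods that the real controller applies, and the function name is illustrative.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Scale replicas in proportion to how far the metric is from its target."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp to the configured bounds, as an autoscaler policy would.
    return max(min_replicas, min(max_replicas, raw))
```

For example, four replicas averaging 90% CPU against a 60% target yields six replicas, and six replicas averaging 20% against the same target yields two.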

Service Meshes

A service mesh — Istio, Linkerd, or Consul Connect — sits alongside your services and handles cross-cutting concerns transparently: mTLS for service authentication, distributed trace injection, retries, timeouts, circuit breaking, and traffic management.

This is a significant promise. In practice, service meshes also add latency, operational complexity, and a steep learning curve. They suit large organisations with many services and dedicated platform teams. For smaller organisations, implementing these concerns at the application level — in a shared library — is often more practical.

8. The Delivery System — How Change Enters Production Safely

A microservices architecture is only valuable if you can actually deploy changes to it. The delivery system — CI/CD pipelines, deployment strategies, safety mechanisms — is how teams turn code into running software at high frequency and low risk.

CI/CD Pipelines

Continuous integration means every code change is automatically built, tested, and validated before being merged. The pipeline runs unit tests, integration tests, static analysis, security scanning, and builds a deployment artefact. Developers get feedback within minutes rather than discovering failures at the end of a sprint.

Continuous deployment extends this: every change that passes the pipeline is automatically deployed to production. This sounds frightening until you understand that it is actually safer than infrequent manual deployments. Smaller changes are easier to reason about, easier to test, and easier to roll back. The risk per deployment is lower, and problems are caught faster.

Deployment Strategies

Once a new version of a service is ready, the question becomes: how do you introduce it into a running system without disrupting users?

Modern systems use deployment strategies that allow new versions to be released gradually, validated under real traffic, and rolled back safely if needed.

Rolling deployments are the most common approach. Instances are updated incrementally — one or a few at a time — while the rest continue serving traffic. This keeps the system available throughout the deployment. If an issue appears, you can pause or reverse the rollout before all instances are affected. Rolling deployments work well when changes are low risk and backward compatible.
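
A rolling update can be pictured as a loop over batches of instances with a health gate after each batch. The following is a toy simulation under stated assumptions: the fleet is a plain list of version strings, and `is_healthy` is a hypothetical callback standing in for real readiness checks. It is not how any particular orchestrator implements rollouts.

```python
def rolling_update(instances, new_version, is_healthy, batch_size=1):
    """Update instances batch by batch, pausing at the first unhealthy batch.

    Returns True if every instance reached new_version, False if the
    rollout paused. Earlier healthy batches stay on the new version.
    """
    for start in range(0, len(instances), batch_size):
        batch = range(start, min(start + batch_size, len(instances)))
        previous = {i: instances[i] for i in batch}
        for i in batch:
            instances[i] = new_version
        if not all(is_healthy(i, new_version) for i in batch):
            # Revert only the failing batch; the rest keep serving traffic.
            for i, old_version in previous.items():
                instances[i] = old_version
            return False
    return True

fleet = ["v1", "v1", "v1", "v1"]
finished = rolling_update(fleet, "v2", lambda i, v: i != 2)
print(finished, fleet)  # the rollout pauses when instance 2 fails its check
```

The batch size plays the same role as a "maximum unavailable" setting: a larger batch finishes sooner but exposes more capacity to a bad version at once.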

Blue-green deployments take a different approach by maintaining two identical environments. Traffic runs on the current version (blue) while the new version is deployed to the idle environment (green). Once validated, traffic is switched entirely to green. If issues arise, traffic can be switched back immediately. This provides fast rollback and strong isolation between versions.

Canary deployments introduce the new version to a small percentage of users first — one percent, five percent — while the rest continue on the existing version. The system is monitored for errors, latency changes, and business impact. If the new version behaves as expected, traffic is gradually increased. This allows real-world validation while limiting the impact of potential issues.
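
The canary loop has two parts: splitting traffic by percentage, and deciding after each observation window whether to widen the split or roll back. The sketch below illustrates both. The step sequence, the error-rate threshold, and the function names are assumptions chosen for the example; a real rollout would usually also compare latency and business metrics.

```python
import random

def pick_version(canary_percent, rng=random.random):
    """Route one request: 'canary' for roughly canary_percent of traffic."""
    return "canary" if rng() * 100 < canary_percent else "stable"

def next_canary_percent(current_percent, canary_error_rate, stable_error_rate,
                        max_error_delta=0.01, steps=(1, 5, 25, 50, 100)):
    """Return the next traffic share for the canary, or 0 to roll back."""
    # Roll back if the canary is noticeably worse than the stable version.
    if canary_error_rate - stable_error_rate > max_error_delta:
        return 0
    # Otherwise promote to the next configured step.
    for step in steps:
        if step > current_percent:
            return step
    return 100

print(next_canary_percent(1, canary_error_rate=0.002, stable_error_rate=0.001))  # 5
print(next_canary_percent(5, canary_error_rate=0.050, stable_error_rate=0.001))  # 0
```

Comparing the canary against the stable version, rather than against a fixed number, keeps the decision meaningful when the whole system is having a bad day.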

Feature Flags

Feature flags decouple deployment from release. Code is deployed to production with a new feature disabled. The feature is enabled for specific users or traffic percentages through a configuration system. When the feature is ready, it is enabled globally — no deployment required.

This enables continuous deployment of incomplete features, controlled rollout strategies, and instant kill switches when something is wrong.
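
A minimal flag evaluation might look like the sketch below. It assumes flags live in an in-memory dictionary (in practice they would come from a configuration service) and uses a hash of the flag name and user ID so that each user lands in a stable bucket. The flag names, fields, and user IDs are all hypothetical.

```python
import hashlib

# Hypothetical flag configuration; normally loaded from a config system.
FLAGS = {
    "new-checkout": {"enabled": True, "percent": 10, "allow_users": {"qa-user"}},
    "dark-mode": {"enabled": False, "percent": 100, "allow_users": set()},
}

def bucket(flag_name, user_id):
    """Map a user to a stable bucket in [0, 100) for a given flag."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode()).hexdigest()
    return int(digest[:8], 16) % 100

def is_enabled(flag_name, user_id, flags=FLAGS):
    flag = flags.get(flag_name)
    if flag is None or not flag["enabled"]:
        return False  # unknown flag, or the kill switch has been thrown
    if user_id in flag["allow_users"]:
        return True   # explicitly targeted users always see the feature
    return bucket(flag_name, user_id) < flag["percent"]

print(is_enabled("new-checkout", "qa-user"))  # True: explicitly allowed
print(is_enabled("dark-mode", "qa-user"))     # False: flag is switched off
```

Hashing rather than sampling randomly means a given user sees consistent behaviour across requests, and raising the percentage only adds users to the enabled group without removing anyone.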

9. The Testing Pyramid — Manufacturing Confidence

In a monolith, you can test the whole system end-to-end in a single test run. In microservices, testing is a distributed coordination problem. The testing pyramid gives you a framework for managing it.

Unit tests verify individual functions and classes in isolation, with all dependencies mocked. They are fast, numerous, and cheap to maintain. They verify that your code does what you think it does, but say nothing about whether services work together correctly.

Integration tests verify that a service works correctly with its actual dependencies — its database, its cache, its message queue. Slower than unit tests, but they catch a class of bugs that unit tests cannot: schema mismatches, query errors, configuration issues.

Contract tests verify the agreements between services. In consumer-driven contract testing, each service that consumes another service's API publishes a contract describing what it sends and what it expects to receive. The provider verifies itself against all of its consumers' contracts as part of its CI pipeline. If the provider changes its API in a way that breaks any consumer, the test fails before deployment, without anyone having to spin up the consumer services.

Contract tests provide the fast feedback of unit tests while catching the integration issues that only surface when services talk to each other. They are the practical alternative to expensive end-to-end tests in large service graphs.
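
The mechanics can be shown with a hand-rolled check. This is a simplified illustration, not the API of Pact or any other contract-testing tool: the contracts are plain dictionaries, the service names and fields are invented, and `provider_handler` stands in for the provider's real request handler.

```python
# Hypothetical contracts, each published by a consumer of the order API.
CONSUMER_CONTRACTS = {
    "checkout-service": {
        "request": {"method": "GET", "path": "/orders/42"},
        "response_fields": {"id": int, "status": str},
    },
    "email-service": {
        "request": {"method": "GET", "path": "/orders/42"},
        "response_fields": {"id": int, "customer_email": str},
    },
}

def provider_handler(method, path):
    """Stand-in for the provider's real handler, called in-process."""
    if method == "GET" and path.startswith("/orders/"):
        order_id = int(path.rsplit("/", 1)[1])
        return {"id": order_id, "status": "shipped",
                "customer_email": "a@example.com", "total": 19.99}
    raise LookupError(f"no route for {method} {path}")

def verify_contracts(handler, contracts):
    """Replay each consumer's request and check the fields it relies on."""
    failures = []
    for consumer, contract in contracts.items():
        request = contract["request"]
        body = handler(request["method"], request["path"])
        for field, expected_type in contract["response_fields"].items():
            if field not in body:
                failures.append(f"{consumer}: missing field '{field}'")
            elif not isinstance(body[field], expected_type):
                failures.append(
                    f"{consumer}: field '{field}' is not {expected_type.__name__}")
    return failures

print(verify_contracts(provider_handler, CONSUMER_CONTRACTS))  # [] means compatible
```

Each consumer only lists the fields it actually uses, so the provider remains free to add fields (such as `total` here) without breaking anyone.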

End-to-end tests exercise complete user flows across multiple services. Most realistic, most expensive to maintain, most prone to flakiness. In a large service graph, a test that spans eight services has eight times the infrastructure failure modes. The practical approach: a small number of end-to-end tests covering the most critical paths, not full end-to-end coverage of everything.
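
A rough calculation shows why flakiness grows with the number of services involved. It assumes, purely for illustration, that each service's test environment is independently healthy 99% of the time; real failures are often correlated, so treat the result as an intuition aid rather than a measurement.

```python
def flake_probability(per_service_reliability, service_count):
    """Chance that at least one of the services is unavailable during a test."""
    return 1 - per_service_reliability ** service_count

print(round(flake_probability(0.99, 1), 3))  # 0.01
print(round(flake_probability(0.99, 8), 3))  # 0.077
```

Under that assumption, a test spanning eight services fails for infrastructure reasons about one run in thirteen, before any real bug is involved.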

The insight: microservices move testing complexity from code to coordination. Individual services are simple to test. The hard problem is verifying that they work correctly together, and contract tests, done well, solve most of that problem.

10. The Operations Layer — Where Reality Hits

Everything described so far is the system as designed. Operations is the system as experienced — by the engineers who maintain it at 3am when something is broken.

Incident Response

Modern engineering organisations classify incidents by severity — SEV1 (total outage or critical data loss), SEV2 (major degradation affecting many users), and lower levels for partial or minor issues. Each severity level has defined response procedures: who is paged, what the expected response time is, who declares resolution.

On-call rotation distributes the burden of incident response across a team. Healthy on-call practices include reasonable escalation paths, runbooks for common scenarios so investigators are not starting from zero, and clear handoff procedures.

Runbooks and Postmortems

A runbook connects symptoms to causes: if this alert fires, check these dashboards, look for these patterns, try these remediation steps. Runbooks are written in quiet moments before incidents and used under pressure during them. With a well-prepared runbook, common issues can be resolved quickly and consistently, without starting from first principles each time.

Every significant incident deserves a postmortem: a written analysis of what happened, why it happened, and what systemic improvements will prevent recurrence. The practice of blameless postmortems attributes failures to systems and processes, not individuals. A deployment that caused an outage is not evidence that the engineer who deployed it was careless. It is evidence that the pipeline did not catch the problem, or the rollback procedure was too slow, or the monitoring did not alert in time.

Chaos Engineering

Chaos engineering is the practice of intentionally injecting failures into a system to verify that it handles them correctly. The philosophy: if your failure-handling mechanisms are only tested when real failures occur, you discover gaps in production incidents. If you proactively test them, you discover gaps in controlled experiments.

Chaos engineering is not for immature systems. It requires strong observability, mature incident response processes, and a culture that treats failure as a learning opportunity. But it is what separates genuinely resilient systems from ones that merely hope to be resilient.
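
At small scale, a chaos experiment can be sketched as a wrapper that makes a dependency fail on purpose, plus a check that callers degrade gracefully instead of crashing. Everything in this example is hypothetical: the recommendation service, the failure rate, and the fallback of returning an empty list. Production tooling injects faults at the network or infrastructure level rather than in application code.

```python
import random

class InjectedFault(Exception):
    """Raised by the chaos wrapper to simulate a failing dependency."""

def with_chaos(func, failure_rate, rng=random.random):
    """Wrap func so that a fraction of calls fail with an injected fault."""
    def wrapper(*args, **kwargs):
        if rng() < failure_rate:
            raise InjectedFault("chaos experiment: simulated dependency failure")
        return func(*args, **kwargs)
    return wrapper

def fetch_recommendations(user_id):
    return ["item-1", "item-2"]  # stand-in for a remote call

def recommendations_with_fallback(fetch, user_id):
    try:
        return fetch(user_id)
    except Exception:
        return []  # degrade: show the page without recommendations

def run_experiment(requests=1000, failure_rate=0.3, seed=7):
    """Hypothesis under test: injected faults never reach the caller."""
    rng = random.Random(seed)
    flaky_fetch = with_chaos(fetch_recommendations, failure_rate, rng.random)
    unhandled = degraded = 0
    for i in range(requests):
        try:
            if recommendations_with_fallback(flaky_fetch, f"user-{i}") == []:
                degraded += 1
        except Exception:
            unhandled += 1
    return {"unhandled": unhandled, "degraded": degraded}

print(run_experiment())  # expect "unhandled" to be 0
```

The experiment states its hypothesis up front (no unhandled errors) and measures against it, which is what distinguishes a controlled experiment from simply breaking things.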

11. Security in Production Microservices

Security in a microservices system is not a feature added at the end. It is a property that emerges from decisions made across every layer — how services authenticate, how data is protected, and how access is controlled and observed.

Secrets Management

Every service depends on sensitive credentials: database connections, API keys, TLS certificates, and encryption keys. Managing these securely requires moving them out of code and configuration, and into dedicated systems. Tools such as HashiCorp Vault, AWS Secrets Manager, or GCP Secret Manager provide centralised storage with access control, audit logging, and automated rotation. Services retrieve secrets at runtime or use short-lived, dynamically generated credentials that expire automatically. This reduces long-term exposure and limits the impact of a compromised credential.
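
On the service side, working with short-lived credentials usually means caching each secret and refreshing it shortly before it expires. The sketch below shows that pattern in isolation. The `fetch` callable is a hypothetical placeholder for whatever client call a given secrets manager provides; it is not the API of Vault, AWS Secrets Manager, or GCP Secret Manager.

```python
import time
from dataclasses import dataclass

@dataclass
class Credential:
    value: str
    expires_at: float

class SecretCache:
    """Cache secrets in memory and refresh them shortly before expiry."""

    def __init__(self, fetch, refresh_margin=30.0, clock=time.monotonic):
        # fetch is a hypothetical callable: name -> (value, ttl_seconds)
        self._fetch = fetch
        self._margin = refresh_margin
        self._clock = clock
        self._cache = {}

    def get(self, name):
        now = self._clock()
        cred = self._cache.get(name)
        # Refresh if missing, or if the credential is about to expire.
        if cred is None or now >= cred.expires_at - self._margin:
            value, ttl = self._fetch(name)
            cred = Credential(value, now + ttl)
            self._cache[name] = cred
        return cred.value

# Usage with a fake backend that issues five-minute credentials.
cache = SecretCache(lambda name: (f"token-for-{name}", 300.0))
print(cache.get("orders-db"))  # token-for-orders-db
```

Refreshing ahead of expiry avoids the window in which a request would otherwise be sent with a credential that lapses mid-flight.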

Encryption and Secure Communication

Data is protected both at rest and in transit. Storage systems — databases, object stores, backups — are encrypted to prevent unauthorised access. Network communication is secured using HTTPS externally and TLS or mTLS internally, ensuring that data cannot be intercepted or modified in transit.

Together, these mechanisms establish a baseline of confidentiality and integrity across the system.

Access Control and Auditability

Security is not only about preventing access, but also about controlling and understanding it.

Access control ensures that services and users operate with only the permissions they need — a principle often referred to as least privilege. Audit logging complements this by providing a reliable record of significant actions: who accessed which data, what changes were made, and when they occurred.

These audit trails are distinct from application logs. They are designed to support investigation, compliance, and accountability, and are most effective when built into the system from the start.
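
Least privilege and audit logging fit naturally into a single decision point: every access check consults an explicit permission set and records the outcome, whether allowed or denied. The sketch below assumes an in-memory permission table and an in-memory audit list; the service names, action strings, and record fields are illustrative, and a real audit trail would go to durable, append-only storage.

```python
import json
from datetime import datetime, timezone

# Hypothetical permission table: each principal gets only what it needs.
PERMISSIONS = {
    "order-service": {"orders:read", "orders:write"},
    "report-service": {"orders:read"},
}

AUDIT_LOG = []  # stand-in for durable, append-only audit storage

def authorize(principal, action, resource, permissions=PERMISSIONS, audit_log=None):
    """Check a permission and record the decision, allowed or not."""
    log = AUDIT_LOG if audit_log is None else audit_log
    # Unknown principals have no permissions: deny by default.
    allowed = action in permissions.get(principal, set())
    log.append(json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "principal": principal,
        "action": action,
        "resource": resource,
        "decision": "allow" if allowed else "deny",
    }))
    return allowed

print(authorize("report-service", "orders:read", "orders/42"))   # True
print(authorize("report-service", "orders:write", "orders/42"))  # False
```

Denied attempts are logged as well as successful ones, since a pattern of denials is often the first visible sign of a misconfigured or compromised service.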

12. The Organisational Layer — The Hidden Architecture

Here is a rule that software architects learn slowly and then cannot un-see: your system architecture will eventually mirror your team structure.

This is formalised as Conway's Law: organisations design systems that reflect their communication structure. This is not a metaphor. It describes how software actually gets built. If two teams own separate services but share a database, the teams will eventually be coupled by that database. If a single team owns too many services, those services develop implicit dependencies because it is convenient. If there is no team that owns the platform, the platform will be inconsistent and underinvested.

Conway's Law is not a warning. It is a design principle. Architect your teams for the system you want, and the system will follow.

Reducing Coordination Overhead

One of the core promises of microservices is that teams can work independently. The goal of organisational design is not to eliminate coordination — it is to make coordination the exception rather than the rule.

Strong service contracts, published API documentation, event schemas treated as public interfaces, and platform teams that handle shared concerns — these reduce the surface area where teams need to actively coordinate. When coordination is required, it should be lightweight: a quick conversation, a PR comment, a Slack thread. Bureaucratic coordination processes kill deployment velocity far more effectively than technical problems.

Final Synthesis — What a Modern Distributed System Actually Is

A modern microservices system is simultaneously six things.

A flow of requests. Every user action triggers a journey through multiple services, databases, caches, and queues. Understanding this flow — tracing it end-to-end, visualising the latency budget, identifying the critical path — is the core of system understanding.

A network of failure-handling mechanisms. Timeouts, retries, circuit breakers, bulkheads, fallbacks — these are not features. They are the system's immune system. Without them, any failure cascades. With them, failures are contained.

A data consistency compromise machine. Strong consistency across all services is not achievable at acceptable cost. Every microservices architect makes explicit choices about which operations need strong consistency and which can tolerate eventual consistency. These choices are design decisions, not failures.

A deployment automation pipeline. A microservices system with manual, infrequent deployments is a dangerous thing. The velocity of change is what keeps technical debt from accumulating. CI/CD, canary deployments, and feature flags make frequent, safe deployments possible.

A human organisational structure reflected in code. The service boundaries in your system will eventually mirror the team boundaries in your organisation. Conway's Law is not a warning — it is a design principle. Design your teams for the system architecture you want, and the system will evolve to match.

A continuous learning machine. Postmortems, chaos engineering, SLO monitoring, alert tuning — these are the feedback loops that make the system smarter over time. Systems that do not learn from failures are doomed to repeat them.

The developers who thrive in microservices environments are not those who have memorised the most patterns. They are those who understand how the patterns connect — who can trace a request end-to-end, diagnose a failure mid-flight, design for failure modes they have not seen yet, and build the observability to understand what they are operating.

Microservices are a commitment to building systems that can evolve, survive failure, and scale — both technically and organisationally — at a pace that monolithic systems cannot match.

Understanding the whole system is not an advanced skill. It is the foundational one.

About N Sharma

Lead Architect at StackAndSystem

N Sharma is a technologist with over 28 years of experience in software engineering, system architecture, and technology consulting. He holds a Bachelor’s degree in Engineering, a DBF, and an MBA. His work focuses on research-driven technology education: explaining software architecture, system design, and development practices through structured tutorials designed to help engineers build reliable, scalable systems.

Disclaimer

This article is for educational purposes only. Assistance from AI-powered generative tools was taken to format and improve language flow. While we strive for accuracy, this content may contain errors or omissions and should be independently verified.