
Last Updated: April 2, 2026 at 13:30

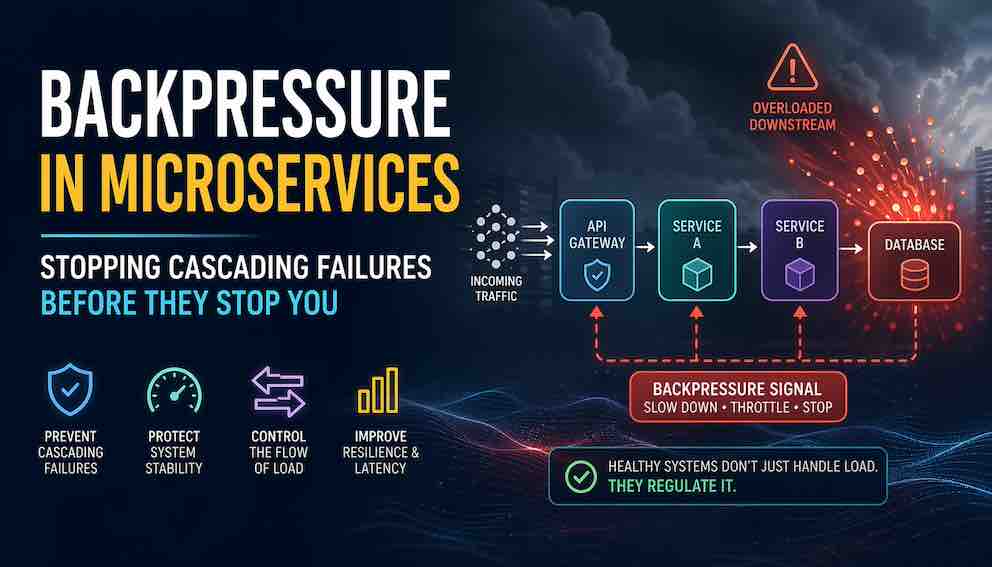

Backpressure in Microservices Explained: Preventing Cascading Failures, Overload, and Retry Storms

How backpressure keeps microservices stable under sudden load spikes by controlling traffic, protecting dependencies, and preventing cascading system failures

Microservices rarely fail because of a single broken component—they fail because overload spreads faster than the system can contain it. Backpressure is the missing control mechanism that allows downstream services to signal when they are struggling, forcing upstream systems to slow down before collapse begins. Instead of letting retries, queues, and threads silently amplify traffic, it turns overload into a managed, visible response that protects system stability. In this article, we explore how backpressure works across real architectures and why it is one of the most important safeguards against cascading failures in distributed systems.

Introduction: Why Microservices Break Under Load

It is a Tuesday afternoon. The website traffic is normal. Then a marketing campaign goes viral. Your order-service is now receiving five times its normal request load.

No big deal, right? That is what auto-scaling is for.

Here is what actually happens. The order-service starts to slow down. Its database connections max out. Response times creep from 50ms to 2 seconds. The payment-service, which calls order-service, starts waiting. Its thread pool fills up with pending requests. Soon, payment-service cannot accept new traffic either. The slowdown propagates up the chain like a wave. Within minutes, your API gateway is timing out, users are seeing 503 errors, and your pager is on fire.

This is a cascading failure. And it is not caused by a bug. It is caused by the absence of one critical control mechanism.

Backpressure is a mechanism where a system signals upstream components to slow down or stop sending work when it is overloaded.

In this article, we explore backpressure as a practical mechanism for preventing microservices from overwhelming one another under heavy load.

What Backpressure Is

Start with a simple mental model.

Imagine a water pipe. If you turn on the tap fully, water flows. But if you kink the pipe downstream, water builds up pressure behind the kink. Eventually the tap itself slows or stops. The downstream condition has created upstream pressure.

Backpressure is exactly that, but for data.

When a downstream service — say, a database — is overwhelmed, it sends a signal back upstream: slow down, I cannot take any more.

The critical thing to understand is this: Backpressure is not about slowing things down because you want to. It is about protecting system stability by controlling the intake rate based on real downstream capacity.

Think of a congested highway. When lanes are full, on-ramp signals turn red and force cars to wait. That hurts locally — you sit at the ramp — but it saves the entire highway from gridlock. Backpressure works the same way. Some requests wait or are rejected at the edge, so the core of your system stays alive.

Where Backpressure Lives in Microservices Architecture

Backpressure is not a single thing you install. It lives in specific places across your architecture, and it is implemented differently depending on where it sits in the system.

API gateways (Kong, NGINX, Envoy, AWS API Gateway) control the rate of requests entering the entire system. They can enforce limits on requests per second and concurrent connections, and they can reduce allowances dynamically when backend services report degraded health.

Message queues (Kafka, RabbitMQ, SQS) control queue depth and consumer lag. A producer can be slowed or blocked when a queue exceeds a threshold, preventing runaway accumulation.

Service-to-service HTTP calls use timeouts, connection limits, and HTTP status codes — notably 429 Too Many Requests and 503 Service Unavailable — to signal that a service cannot accept more work.
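Here is a minimal server-side sketch. It assumes a Python service and uses a hypothetical `ConcurrencyGate` helper, which is not part of any framework. The gate caps in-flight requests and, once the cap is reached, answers 429 with a `Retry-After` header instead of letting work queue up:

```python
import threading
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Response:
    status: int
    headers: dict = field(default_factory=dict)
    body: str = ""


class ConcurrencyGate:
    """Hypothetical helper: reject with 429 once max_in_flight requests are active."""

    def __init__(self, max_in_flight: int, retry_after_s: int = 1) -> None:
        self._slots = threading.BoundedSemaphore(max_in_flight)
        self._retry_after_s = retry_after_s

    def handle(self, work: Callable[[], str]) -> Response:
        # Non-blocking acquire: if no slot is free, fail fast instead of queueing.
        if not self._slots.acquire(blocking=False):
            return Response(429, {"Retry-After": str(self._retry_after_s)}, "busy")
        try:
            return Response(200, body=work())
        finally:
            self._slots.release()  # always free the slot, even if work() raises
```

In a real service this check would live in middleware. The 1-second `Retry-After` value is only a placeholder.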

gRPC and HTTP/2 implement backpressure at the protocol level through flow control. Each receiver advertises an initial window size in a SETTINGS frame and grants more credit with WINDOW_UPDATE frames as it consumes data. When the window is exhausted, the sender must stop sending data until credit is returned. This happens below your application code, but it only protects you if timeouts and connection limits are configured correctly.

Database connection pools limit how many queries run simultaneously. When the pool is exhausted, new queries either wait or fail fast, which is far better than allowing hundreds of threads to pile up waiting for a connection that will never come.
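A fail-fast pool can be sketched with a bounded `queue.Queue` from the Python standard library. `BoundedPool` and `PoolExhausted` are hypothetical names. Real database drivers ship their own pools, each with its own timeout settings:

```python
import queue
from contextlib import contextmanager
from typing import Iterator


class PoolExhausted(Exception):
    """Raised when no connection becomes free within the acquire timeout."""


class BoundedPool:
    """Fixed-size pool; callers wait at most acquire_timeout_s for a connection."""

    def __init__(self, connections: list, acquire_timeout_s: float) -> None:
        self._free: "queue.Queue" = queue.Queue(maxsize=len(connections))
        for conn in connections:
            self._free.put_nowait(conn)
        self._timeout = acquire_timeout_s

    @contextmanager
    def connection(self) -> Iterator[object]:
        try:
            conn = self._free.get(timeout=self._timeout)
        except queue.Empty:
            # Fail fast rather than letting threads pile up behind the pool.
            raise PoolExhausted(f"no connection within {self._timeout}s") from None
        try:
            yield conn
        finally:
            self._free.put_nowait(conn)  # always hand the connection back
```

A short acquire timeout turns an exhausted pool into a quick, explicit error. The caller can then translate that error into a 503 or 429.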

Stream processing pipelines (Flink, Kafka Streams, Project Reactor) use consumer-driven, pull-based demand. The consumer requests N items. The producer sends at most N. The consumer sets the pace.

One pattern worth calling out clearly: a queue without backpressure is not really a buffer. It is a delayed failure mechanism. That is why engineers often end up searching for “backpressure in Kafka” or “backpressure in gRPC” after realising their queues were not absorbing load, but simply concealing overload until it surfaced later and more painfully.

What Problems Backpressure Solves

Let me be specific and name these clearly, so you have a working taxonomy.

Cascading failures. Service A slows, Service B waits, Service B's threads exhaust, Service B fails, Service C now waits for Service B. You have seen this. It is the most common and destructive failure mode in distributed systems.

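Retry amplification storms. Your service times out, so the caller retries. Ten other callers do the same. Callers further up the chain retry as well, so the retries multiply layer by layer, and one failed request can turn into 100 calls against an already struggling service. Backpressure tells the caller: do not even retry yet, I am full.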
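The multiplication is easy to underestimate. Take a deliberately simplified model in which every attempt fails and each layer independently makes the same number of attempts per incoming call. Under that model, the load reaching the deepest service grows exponentially with chain depth. `worst_case_attempts` is an illustrative helper:

```python
def worst_case_attempts(attempts_per_layer: int, layers: int) -> int:
    """Calls reaching the deepest service when every attempt fails.

    Each layer makes `attempts_per_layer` calls (1 original + retries) for
    every call it receives, so the multipliers compound across layers.
    """
    return attempts_per_layer ** layers


for depth in (1, 3, 5):
    print(depth, worst_case_attempts(3, depth))
# 1 3
# 3 27
# 5 243
```

Three attempts per call looks harmless at one layer. Across five layers, it becomes 243 calls for a single user request.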

Thread pool exhaustion. In a thread-per-request server, every incoming request occupies a thread. If the downstream is slow, threads pile up waiting for responses. Eventually the service stops accepting anything, including health checks. Your orchestrator marks it dead and restarts it, which makes everything worse. Backpressure rejects requests early and keeps threads available for critical work.

Queue bloat and unbounded buffering. Unbounded queues feel safe until your process runs out of memory and crashes. Bounded queues are honest: when they are full, they say so. That honesty is a feature.

Tail latency collapse. At 50% load, your P99 latency might be 100ms. At 80% load, it jumps to 3 seconds. Backpressure activates before you reach 80%, keeping latency predictable for the requests you do accept.
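A textbook queueing model shows why latency climbs so sharply. It assumes an M/M/1 queue: random arrivals at rate λ and a single first-come-first-served server with exponential service times at rate μ. Under that assumption, utilization ρ and the mean time a request spends in the system T are:

```latex
\rho = \frac{\lambda}{\mu}, \qquad
T = \frac{1}{\mu - \lambda} = \frac{1/\mu}{1 - \rho}

\text{With } 1/\mu = 10\,\text{ms:}\quad
T\big|_{\rho=0.5} = 20\,\text{ms},\quad
T\big|_{\rho=0.8} = 50\,\text{ms},\quad
T\big|_{\rho=0.95} = 200\,\text{ms}
```

In this model, the P99 is a fixed multiple of the mean (ln 100, about 4.6×), so the tail climbs just as steeply. Real services are not M/M/1, so read these numbers as the shape of the curve, not a prediction.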

Resource starvation. CPU, memory, file handles, and network sockets are finite. Backpressure limits intake so these resources remain available for health checks and administrative operations — the calls that keep your orchestration layer from making bad decisions about your service.

Why Backpressure Matters: What It Changes About How You Fail

If you take one thing from this section, take this: backpressure transforms how your system fails.

Without backpressure, failure is cascading and complete. One service degrades, everything degrades.

With backpressure, failure is graceful and contained. The slow component slows its callers, but the rest of the system keeps running. Users may see a "try again later" for one specific feature. They do not see a blank page for everything.

The system qualities backpressure directly improves are resilience (the system survives load spikes instead of collapsing), fault isolation (a slow dependency affects only the requests that depend on it, not your entire thread pool), predictable tail latency (by shedding excess load early, you protect the P95 and P99 of requests you accept), and elasticity (you do not need to over-provision capacity for worst-case scenarios because the safety valve exists).

Backpressure, Rate Limiting, and Load Shedding: What Is the Difference

These three mechanisms are often confused. They are related but solve different problems.

Rate limiting enforces a fixed ceiling — 1,000 requests per second regardless of downstream health. The limit does not change based on what your database is doing right now. It is static and appropriate when you know the upper capacity of a dependency and want to enforce it unconditionally.

Load shedding drops requests when the system is overwhelmed, without coordinating with upstream. You do not ask callers to slow down — you simply reject. It is blunt and fast. When speed of rejection matters more than coordination, load shedding is the right tool.

Backpressure is dynamic and cooperative. The downstream signals the upstream: I am struggling, ease off. The limit adjusts in real time based on health. It is most powerful in multi-tier service chains where coordination is possible and cascading failure is the risk.
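One common way to make a limit adjust in real time is additive-increase/multiplicative-decrease (AIMD), the same idea TCP congestion control uses. The article does not prescribe AIMD. The sketch below is one assumed implementation with hypothetical names and defaults. Here, "overloaded" means the downstream answered 429 or 503, or breached a latency target:

```python
class AimdLimit:
    """Adaptive concurrency limit using additive-increase/multiplicative-decrease."""

    def __init__(self, initial: int = 10, min_limit: int = 1,
                 max_limit: int = 200, backoff: float = 0.5) -> None:
        self.limit = initial
        self.min_limit = min_limit
        self.max_limit = max_limit
        self.backoff = backoff

    def on_response(self, overloaded: bool) -> int:
        if overloaded:
            # Multiplicative decrease: back off quickly when downstream struggles.
            self.limit = max(self.min_limit, int(self.limit * self.backoff))
        else:
            # Additive increase: probe slowly for spare capacity.
            self.limit = min(self.max_limit, self.limit + 1)
        return self.limit


lim = AimdLimit(initial=10)
for _ in range(5):
    lim.on_response(overloaded=False)  # limit climbs to 15
lim.on_response(overloaded=True)       # limit halves to 7
```

The asymmetry is deliberate. The limit drops fast when downstream struggles and recovers gradually, so a brief spike does not immediately refill the pipeline.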

Here is the nuance most articles miss: backpressure is not always superior to load shedding. Load shedding is a sledgehammer. Backpressure is a dimmer switch. In ultra-low-latency systems — high-frequency trading, real-time control systems — even the coordination overhead of backpressure may be too slow. Load shedding is often the right call there.

When to Use Backpressure (and When Not To)

Use backpressure when:

- Traffic is bursty or unpredictable. This is the most important qualifier. Backpressure is most valuable when load is unpredictable, not just high. Flash sales, webhook spikes, and viral events are all good candidates.

- Your downstream is weaker than your upstream. A fast API calling a slow database is the canonical case.

- You have streaming or async pipelines where consumer lag is observable and actionable.

- You have multi-tier service chains. Without backpressure, one slow link in a chain of A → B → C → D can silently take down every service that calls into it, directly or indirectly.

Reconsider or avoid when:

- Traffic is simple and low-volume. If load is stable and well below capacity, backpressure adds complexity for little benefit.

- You have ultra-low-latency requirements where coordination cost is unacceptable. Load shedding is a better fit.

- Load shedding is genuinely sufficient. Sometimes randomly dropping 5% of requests and letting clients retry is the right answer. That is not backpressure, but it might be exactly what your failure mode requires.

Implementation Strategies

Here are five practical strategies from simplest to most sophisticated.

Bounded queues. A queue with a maximum size of 1,000 items. When it is full, the producer receives an error. Smaller queues reject more aggressively but fail fast. Larger queues absorb more bursts but can mask overload for longer. Tune for your workload.
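Here is a minimal bounded-queue sketch using Python's standard `queue` module. `make_work_queue`, `submit`, and `Overloaded` are hypothetical names. When the queue is full, the producer is rejected immediately rather than blocked, and memory does not keep growing:

```python
import queue


class Overloaded(Exception):
    """Signals the producer that the consumer side is at capacity."""


def make_work_queue(max_items: int = 1000) -> "queue.Queue[str]":
    # Capacity is explicit, instead of "until memory runs out".
    return queue.Queue(maxsize=max_items)


def submit(q: "queue.Queue[str]", item: str) -> None:
    try:
        q.put_nowait(item)  # never block the producer; reject instead
    except queue.Full:
        raise Overloaded(f"queue full ({q.maxsize} items); retry later") from None
```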

Rate limiting and throttling. Token bucket or leaky bucket algorithms. Requests beyond the allowed rate receive a 429 response. This is passive backpressure — it does not react to downstream health in real time — but it is simple and effective at the API gateway layer.
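A token bucket fits in a few lines. This is an illustrative implementation, not any specific gateway's. The injectable `clock` parameter is an assumption added so the behavior can be tested deterministically:

```python
import time
from typing import Callable


class TokenBucket:
    """Refills `rate` tokens per second and allows bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # caller should answer 429 Too Many Requests
```

With `rate=100` and `capacity=200`, for example, a client can burst 200 requests and then sustain 100 per second. Anything beyond that gets a 429.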

Reactive, pull-based backpressure. The consumer tells the producer: give me data when I ask, not when you feel like it. Libraries like Project Reactor, RxJava, and Akka Streams implement this. The consumer requests N items; the producer sends at most N. The consumer controls the pace end to end. This is the most architecturally clean form of backpressure.
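Project Reactor, RxJava, and Akka Streams are JVM libraries with their own APIs. The Python toy below only illustrates the demand contract they implement, and `PullSource` and `consume` are hypothetical names:

```python
from itertools import islice
from typing import Iterator, List


class PullSource:
    """Toy producer that emits only what the consumer has asked for."""

    def __init__(self, items: Iterator[int]) -> None:
        self._items = items
        self.produced = 0  # running total of items handed out

    def request(self, n: int) -> List[int]:
        # Produce at most n items (fewer if the source is exhausted).
        batch = list(islice(self._items, max(n, 0)))
        self.produced += len(batch)
        return batch


def consume(source: PullSource, capacity: int) -> List[int]:
    """Drain the source in batches no larger than the consumer's capacity."""
    results: List[int] = []
    while True:
        batch = source.request(capacity)  # demand is set by the consumer
        if not batch:
            return results
        results.extend(x * 2 for x in batch)  # stand-in for real processing
```

The producer never hands out more than was requested, so a slow consumer simply requests less often. Nothing piles up between the two.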

Circuit breaker with upstream notification. A circuit breaker stops outbound calls when failure rate exceeds a threshold. But a circuit breaker alone does not tell the upstream why it stopped. A better pattern: when the circuit opens, the upstream service receives a signal — a 503 with a Retry-After header, or an explicit message on a control channel — instructing it to reduce its own rate. This is backpressure with memory.
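Here is a single-threaded sketch of a circuit breaker that answers with a 503 and a `Retry-After` hint while open. The class, the thresholds, and the choice of 502 for a failed downstream call are assumptions for illustration. Production libraries typically add sliding failure windows and limit concurrent half-open trials:

```python
import math
import time
from typing import Callable, Dict, Optional, Tuple

Reply = Tuple[int, Dict[str, str], str]  # (status, headers, body)


class CircuitBreaker:
    """Opens after `threshold` consecutive failures; while open, answers 503."""

    def __init__(self, threshold: int, open_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.threshold = threshold
        self.open_seconds = open_seconds
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], str]) -> Reply:
        now = self.clock()
        if self.opened_at is not None:
            remaining = self.opened_at + self.open_seconds - now
            if remaining > 0:
                # Tell the upstream how long to back off, not just that we failed.
                return 503, {"Retry-After": str(math.ceil(remaining))}, ""
            self.opened_at = None  # half-open: let a trial call through
        try:
            body = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = now  # (re)open the circuit
            return 502, {}, "downstream error"
        self.failures = 0  # a success closes the circuit fully
        return 200, {}, body
```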

Protocol-level flow control. In gRPC, which runs over HTTP/2, each receiver advertises a flow-control window: initially through a SETTINGS frame, then extended with WINDOW_UPDATE frames as it consumes data. When the window is used up, the sender pauses, and no application code is required. TCP's receive window works similarly. This layer gives you basic protection for free, but it only holds if your connection limits and timeouts are configured deliberately.
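The credit mechanism can be modeled in a few lines. This is a toy of the idea, not an HTTP/2 implementation. The default of 65,535 bytes matches HTTP/2's initial window size, and `FlowWindow` is a hypothetical name:

```python
class FlowWindow:
    """Toy model of credit-based flow control for a single stream."""

    def __init__(self, initial_window: int = 65_535) -> None:
        self.available = initial_window  # credit granted by the receiver

    def send(self, payload_size: int) -> int:
        """Sender side: returns how many bytes may go out right now."""
        allowed = min(payload_size, self.available)
        self.available -= allowed
        return allowed  # 0 means the sender must wait for more credit

    def window_update(self, increment: int) -> None:
        """Receiver side: after consuming data, hand credit back."""
        self.available += increment
```

A receiver that is slow to return credit stalls its sender automatically. That is the backpressure effect itself, with no application-level signaling.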

Observability-Driven Backpressure

Backpressure does not have to be reactive — triggered only after something has already begun to fail. It can be predictive, driven by metrics that warn you before the system degrades.

The signals to watch are queue depth crossing a threshold (for example, above 80% capacity), consumer group lag in Kafka exceeding a target (for example, more than 10,000 messages behind), P99 latency rising beyond an acceptable ceiling, and error rate climbing above a baseline.

When these metrics cross defined thresholds, your system can automatically reduce intake without waiting for an actual failure. A Kafka consumer group lag exceeding 10,000 messages can trigger a signal to the producer API to slow its publish rate. When lag drops below 1,000, the rate increases again. No human intervention required.
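The lag-driven rule above can be sketched as a small hysteresis controller. The 10,000 and 1,000 thresholds come from the example. The two publish rates and the `LagThrottle` name are hypothetical, and fetching consumer lag from Kafka is out of scope for this sketch:

```python
class LagThrottle:
    """Chooses a producer publish rate from consumer lag, with hysteresis."""

    def __init__(self, high_lag: int = 10_000, low_lag: int = 1_000,
                 normal_rate: float = 5_000.0, throttled_rate: float = 500.0) -> None:
        self.high_lag = high_lag
        self.low_lag = low_lag
        self.normal_rate = normal_rate
        self.throttled_rate = throttled_rate
        self.throttled = False

    def publish_rate(self, consumer_lag: int) -> float:
        if not self.throttled and consumer_lag > self.high_lag:
            self.throttled = True   # consumers are falling behind: slow down
        elif self.throttled and consumer_lag < self.low_lag:
            self.throttled = False  # consumers caught up: resume full rate
        # Between the thresholds the previous state holds, which avoids flapping.
        return self.throttled_rate if self.throttled else self.normal_rate
```

The gap between the two thresholds keeps the rate from flapping when lag hovers around a single cutoff.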

This is called adaptive backpressure or metrics-based throttling. Connecting your observability stack directly to your throttling decisions is where production-grade systems are heading. It also means that backpressure events themselves must be observable. You need to track how often requests are being rejected, why they are being rejected, and whether upstream callers are actually respecting the signal. If you cannot see these things, you cannot tune them.

Implementation Nuances: Where Things Actually Go Wrong

The basics are straightforward. The following details are where production incidents happen.

Retry amplification. If your service signals backpressure with a 503 or 429, the caller must not retry immediately. Immediate retries transform backpressure into a denial-of-service attack against yourself. Callers must use exponential backoff with jitter, and ideally a circuit breaker that respects the signal rather than fighting it.
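Here is a sketch of exponential backoff with "full jitter": a uniform random delay up to an exponentially growing ceiling. It assumes any `Retry-After` value has already been parsed into seconds. The function name and the default base and cap values are illustrative:

```python
import random
from typing import Optional


def backoff_delay(attempt: int, retry_after: Optional[float] = None,
                  base: float = 0.1, cap: float = 30.0,
                  rng: Optional[random.Random] = None) -> float:
    """Seconds to wait before retry number `attempt` (0-based)."""
    if rng is None:
        rng = random.Random()
    ceiling = min(cap, base * (2 ** attempt))  # exponential growth, capped
    delay = rng.uniform(0, ceiling)            # full jitter spreads callers out
    if retry_after is not None:
        # The server's hint is a floor: never retry sooner than it asked.
        delay = max(delay, retry_after)
    return delay
```

Jitter spreads retries from many callers across time. Without it, they would all return at the same instant and recreate the spike.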

Timeout discipline. Backpressure only works if timeouts are set. If your HTTP client waits indefinitely, it never notices the slowdown; it simply accumulates blocked requests. Set deliberate timeouts on every synchronous service call.
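With `asyncio`, `asyncio.wait_for` enforces a deadline on a call. The dependency here is simulated with `asyncio.sleep`, and the function names are hypothetical:

```python
import asyncio


async def dependency(delay_s: float) -> str:
    await asyncio.sleep(delay_s)  # stand-in for a downstream network call
    return "ok"


async def call_with_timeout(delay_s: float, timeout_s: float) -> str:
    try:
        # wait_for cancels the pending call once the deadline passes, so the
        # caller gets its slot back instead of waiting indefinitely.
        return await asyncio.wait_for(dependency(delay_s), timeout=timeout_s)
    except asyncio.TimeoutError:
        return "timeout: fail fast and let the caller degrade or back off"
```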

Queue size versus latency. A larger queue means fewer rejections, but every request in that queue is waiting. As the queue fills, average latency rises for all requests. Measure this trade-off for your specific workload rather than guessing at a "safe" queue size.
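Little's law makes this trade-off concrete. It holds for long-run averages in a stable system, and it says the average wait equals the average number of queued items divided by throughput. The 200 items/s drain rate below is a hypothetical figure:

```latex
L = \lambda W \quad\Longrightarrow\quad W = \frac{L}{\lambda}
\qquad\text{e.g.}\quad
W = \frac{1000\ \text{items}}{200\ \text{items/s}} = 5\ \text{s}
```

A queue that stays near a 1,000-item cap therefore adds roughly five seconds to every request, even though none of them are rejected.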

Fairness across tenants. If one noisy tenant fills your shared queue, every other tenant suffers. Most teams discover this during an incident, not before. Per-tenant queues or per-tenant rate limits are the solution; neither is free to implement.
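A per-tenant cap can be as simple as counting in-flight requests by tenant. `PerTenantLimiter` is a hypothetical sketch. It assumes each tenant is identified by a string key and that `finish` is always called after a successful `try_start`:

```python
import threading
from collections import defaultdict
from typing import DefaultDict


class PerTenantLimiter:
    """Caps concurrent in-flight requests per tenant."""

    def __init__(self, per_tenant_limit: int) -> None:
        self.per_tenant_limit = per_tenant_limit
        self._in_flight: DefaultDict[str, int] = defaultdict(int)
        self._lock = threading.Lock()

    def try_start(self, tenant: str) -> bool:
        with self._lock:
            if self._in_flight[tenant] >= self.per_tenant_limit:
                return False  # reject only this tenant; others are unaffected
            self._in_flight[tenant] += 1
            return True

    def finish(self, tenant: str) -> None:
        with self._lock:
            if self._in_flight[tenant] > 0:
                self._in_flight[tenant] -= 1
```

A real deployment would usually also cap the total across tenants and set limits per customer tier.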

Feedback loop latency. If you check queue depth every ten seconds, your backpressure is nearly useless for fast-moving spikes. Aim for sub-second feedback, ideally per-request.

Propagation delay in deep chains. This delay is especially problematic in push-based systems. In a five-hop chain, a backpressure signal originating at Service E has to travel upstream through multiple layers before it reaches Service A. By the time Service A reacts, Services B, C, and D may already be under strain or partially degraded.

Pull-based reactive systems largely avoid this class of delay. In this model, the consumer controls the flow of data instead of the producer pushing work as fast as possible. Each consumer explicitly requests how much data it can handle, so work is produced only when there is confirmed capacity to process it. Because the consumer sets the pace from the start, the producer never gets ahead of demand, and there is no overload signal that has to catch up with it after the fact.

Common Mistakes

These appear in production systems with regularity.

Treating backpressure as just rate limiting. Rate limiting is static. Backpressure is dynamic — it responds to what downstream is actually experiencing right now. If your database doubles in throughput after an upgrade, backpressure should ease automatically. A static rate limit will not.

Retrying immediately on backpressure signals. A client receives a 429 and retries fifty times without delay. That is not resilience. That is a self-inflicted amplification attack.

Using unbounded queues. "But our queue never fills." It never fills because the process runs out of memory first. Bounded queues surface real capacity limits. Unbounded queues hide them until the crash.

No end-to-end coordination. Service A implements perfect backpressure. Service B ignores the 429 it receives from Service A and keeps hammering. Backpressure requires cooperation across the call chain, not just within a single service.

Measuring average latency instead of tail latency. Your average response time might look healthy while hiding serious problems in a smaller share of requests. For example, most requests may complete in 100ms while the slowest 1% (the P99) take 10 seconds or more. Backpressure should react to these worst-case delays, not just the overall average. Under load, the average smooths everything out; tail latency exposes the user-facing problems that actually break systems.
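Here is a quick illustration with made-up latencies in milliseconds. Two slow requests out of a hundred push the mean to about 300ms, which still looks tolerable, while the P99 is 10 seconds. `percentile` uses the nearest-rank method; other percentile definitions interpolate and can give slightly different values:

```python
import math
from statistics import mean
from typing import List


def percentile(samples: List[float], p: float) -> float:
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# 98 fast requests and 2 very slow ones (illustrative numbers).
latencies = [100.0] * 98 + [10_000.0] * 2
print(f"mean={mean(latencies):.0f}ms "
      f"p50={percentile(latencies, 50):.0f}ms "
      f"p99={percentile(latencies, 99):.0f}ms")
# mean=298ms p50=100ms p99=10000ms
```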

No observability for the backpressure mechanism itself. You cannot debug what you cannot see. Instrument rejection rates, rejection reasons, queue depths at the moment of rejection, and upstream response to signals.

Backpressure as a System Philosophy

Backpressure is a design philosophy for building systems that survive load they were not designed for.

Most teams do not need advanced mechanisms like backpressure in their day-to-day systems. Simplicity is the norm, and designs are usually built around the happy path: we will handle all requests, all the time. That works well until load exposes the limits. Every system has a ceiling, and the important question is how it behaves when it gets there. Teams that understand backpressure operate on three principles:

Protect the system before protecting throughput. A slow system that stays alive is worth more than a fast system that collapses.

Stability over completeness. It is acceptable to reject some requests if that keeps the rest of the system healthy and predictable.

Controlled failure is better than uncontrolled collapse. Failing early and clearly gives upstream services the chance to adapt. Failing silently and slowly takes everything down with you.

About N Sharma

Lead Architect at StackAndSystem. N Sharma is a technologist with over 28 years of experience in software engineering, system architecture, and technology consulting. He holds a Bachelor’s degree in Engineering, a DBF, and an MBA. His work focuses on research-driven technology education—explaining software architecture, system design, and development practices through structured tutorials designed to help engineers build reliable, scalable systems.

Disclaimer

This article is for educational purposes only. Assistance from AI-powered generative tools was taken to format and improve language flow. While we strive for accuracy, this content may contain errors or omissions and should be independently verified.