
Last Updated: April 9, 2026 at 10:30

Common Cryptography Mistakes Developers Make (And How to Avoid Them)

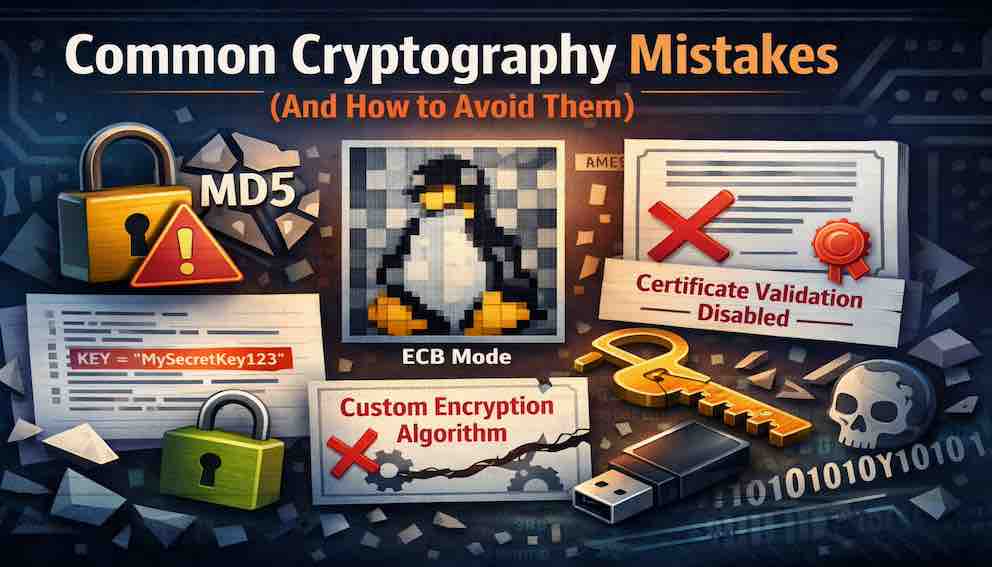

A practical checklist of the pitfalls behind countless breaches (weak algorithms, hardcoded keys, disabled verification) and how to avoid each one

Cryptography is one of the hardest parts of security to get right. A single mistake, such as using ECB mode, disabling certificate validation, or rolling your own algorithm, can render the strongest encryption useless. This article collects the most common crypto mistakes seen in real systems, explains why each is dangerous, and provides clear guidance on what to do instead. Use it as a checklist before deploying any code that touches encryption, hashing, or secrets.

Why Cryptography Feels Like It's Working — Even When It Isn't

Most parts of software give you feedback.

If something is wrong, it breaks. Tests fail. Errors appear. You debug, you fix, you move on.

Cryptography does not behave that way.

When cryptography is broken, it often looks exactly the same as when it is working correctly. Data still encrypts. Decryption still produces output. Your system keeps running. There is no error, no warning, no sign that anything is wrong.

That is what makes it dangerous.

The mistakes developers make with cryptography are rarely dramatic. They are small decisions — an algorithm chosen out of habit, a security flag disabled to "just get things working," a key stored somewhere convenient. Each one seems reasonable in isolation. Together, they quietly remove the guarantees that cryptography is supposed to provide.

If you step back, those mistakes cluster into a few predictable areas: the algorithms you choose, how you handle keys, how you use libraries, and how your system behaves in the real world. Understanding those patterns is far more useful than memorising a list of rules.

Choosing the Right Building Blocks

It is tempting to think of cryptography as a menu of roughly equivalent options — hashing algorithms, encryption modes, key sizes — where anything that "works" is fine. In practice, that assumption is where many problems begin.

Take hashing. Functions like MD5 and SHA-1 still appear in real codebases, often because they have been there for years and nothing has visibly gone wrong. But both have been broken in ways that matter. Attackers can construct two completely different files that produce the same hash — and that single property is enough to undermine their use in anything security-sensitive, from checking file integrity to signing code.

The mistake here is not usually ignorance. It is inertia. Nobody swapped them out because nothing appeared to break.

The fix is straightforward. Use SHA-256 or SHA-3 for general-purpose hashing. For passwords specifically, use functions that are designed to be slow — bcrypt, Argon2, or PBKDF2. That distinction matters: password hashing is not just about correctness, it is about making brute-force attacks expensive. A fast hash function gives an attacker billions of guesses per second. A slow one turns that into thousands.
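Python's standard `hashlib` module covers the general-purpose case. The sketch below is illustrative (the helper name `sha256_stream` is not from any library): it hashes a stream in chunks, which is how you would fingerprint a large file without loading it all into memory. These functions are deliberately fast, which is exactly why they are the wrong tool for passwords.

```python
import hashlib
import io
from typing import BinaryIO


def sha256_stream(stream: BinaryIO, chunk_size: int = 65536) -> str:
    """Return the hex SHA-256 digest of a binary stream, read in chunks."""
    digest = hashlib.sha256()
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
    return digest.hexdigest()


# One-shot hashing of a small value with SHA-256 and SHA3-256.
print(hashlib.sha256(b"hello").hexdigest())
print(hashlib.sha3_256(b"hello").hexdigest())

# Chunked hashing gives the same result as hashing everything at once.
# (io.BytesIO stands in for a file opened with open(path, "rb").)
data = b"release-artifact-contents" * 10_000
assert sha256_stream(io.BytesIO(data)) == hashlib.sha256(data).hexdigest()
```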

A similar pattern shows up with encryption.

AES on its own is not a complete solution. It needs a mode of operation, and some modes are broken in ways that are not immediately obvious. ECB mode, for example, encrypts each 16-byte block independently with the same key, so identical plaintext blocks always produce identical ciphertext blocks. It does exactly what it is designed to do, and yet it leaks patterns so clearly that encrypted data can sometimes be recognised visually. A famous demonstration encrypts an image of Tux, the Linux penguin, with AES-ECB, and the penguin's outline remains clearly visible in the ciphertext.
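The pattern leak is easy to reproduce. This sketch assumes the third-party `cryptography` package (Python's standard library has no AES) and encrypts four identical 16-byte blocks in ECB mode. All four ciphertext blocks come out identical, which is the same property that preserves the penguin's outline. It demonstrates the flaw and is not something to copy into real code.

```python
import os

from cryptography.hazmat.primitives.ciphers import Cipher, algorithms, modes

# Demonstration only: never use ECB for real data.
key = os.urandom(32)                 # AES-256 key
block = b"SIXTEEN BYTE BLK"          # exactly one 16-byte AES block
plaintext = block * 4                # four identical plaintext blocks

encryptor = Cipher(algorithms.AES(key), modes.ECB()).encryptor()
ciphertext = encryptor.update(plaintext) + encryptor.finalize()

# Split the ciphertext back into 16-byte blocks and count the distinct ones.
blocks = [ciphertext[i:i + 16] for i in range(0, len(ciphertext), 16)]
print(len(set(blocks)))  # prints 1: identical input blocks give identical output blocks
```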

More subtle is encrypting data without verifying its integrity. Encryption protects confidentiality — it keeps your data unreadable. But without also checking that the data has not been modified in transit, an attacker can alter encrypted messages in controlled ways. The system decrypts without complaint. Nothing crashes. The guarantees you thought you had are quietly gone.
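The tampering risk can be shown with AES in CTR mode, which provides confidentiality but no integrity check. The sketch below again assumes the `cryptography` package, and the message format is made up for illustration. Because CTR encrypts by XORing the plaintext with a keystream, an attacker who knows where a value sits in the message can flip ciphertext bits to change it without ever learning the key.

```python
import os

from cryptography.hazmat.primitives.ciphers import Cipher, algorithms, modes

key = os.urandom(32)
counter_block = os.urandom(16)  # CTR in this library takes a full 16-byte initial counter


def ctr_encrypt(data: bytes) -> bytes:
    enc = Cipher(algorithms.AES(key), modes.CTR(counter_block)).encryptor()
    return enc.update(data) + enc.finalize()


def ctr_decrypt(data: bytes) -> bytes:
    dec = Cipher(algorithms.AES(key), modes.CTR(counter_block)).decryptor()
    return dec.update(data) + dec.finalize()


original = b"pay alice amount=100"
ciphertext = bytearray(ctr_encrypt(original))

# The attacker knows the message layout but not the key.
# XORing the byte holding '1' with ('1' XOR '9') turns it into '9' after decryption.
pos = original.index(b"100")
ciphertext[pos] ^= ord("1") ^ ord("9")

tampered = ctr_decrypt(bytes(ciphertext))
print(tampered)  # b'pay alice amount=900', and no error was raised
```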

The modern answer is to stop combining these pieces manually. Use authenticated encryption — AES-GCM or ChaCha20-Poly1305 — where encryption and integrity checking are handled together as one well-tested construction. You do not have to assemble the pieces; the mode does it for you.
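With the `cryptography` package, AES-GCM is exposed through the `AESGCM` class. A minimal sketch follows; the `encrypt`/`decrypt` helpers and the nonce-prefixed output layout are illustrative choices, not a standard format. Any change to the ciphertext, tag, nonce, or associated data makes decryption raise `InvalidTag` instead of returning altered plaintext.

```python
import os

from cryptography.exceptions import InvalidTag
from cryptography.hazmat.primitives.ciphers.aead import AESGCM

key = AESGCM.generate_key(bit_length=256)
aesgcm = AESGCM(key)


def encrypt(plaintext: bytes, associated_data: bytes = b"") -> bytes:
    nonce = os.urandom(12)  # fresh 96-bit nonce for every message
    # encrypt() returns the ciphertext with a 16-byte authentication tag appended.
    return nonce + aesgcm.encrypt(nonce, plaintext, associated_data)


def decrypt(blob: bytes, associated_data: bytes = b"") -> bytes:
    nonce, ciphertext = blob[:12], blob[12:]
    # Raises InvalidTag if anything was modified or the associated data differs.
    return aesgcm.decrypt(nonce, ciphertext, associated_data)


blob = encrypt(b"pay alice amount=100", b"order-42")
assert decrypt(blob, b"order-42") == b"pay alice amount=100"

tampered = bytearray(blob)
tampered[20] ^= 0x08  # flip one bit inside the ciphertext
try:
    decrypt(bytes(tampered), b"order-42")
except InvalidTag:
    print("tampering detected; message rejected")
```

The associated data (`b"order-42"` here, a hypothetical record identifier) is authenticated but not encrypted, which is a convenient way to bind a ciphertext to the record it belongs to.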

The same principle applies beyond hashing and block cipher modes. RSA with 1024-bit keys was once standard and is now inadequate; 2048 bits is the commonly accepted minimum today. Older ciphers like RC4, a symmetric stream cipher once common in WEP and TLS, still appear in legacy systems despite being fundamentally broken. None of these failures are loud. They simply reduce the margin of safety until it disappears entirely.

Where Most Systems Actually Break: Key Management

If algorithm choice is the visible surface of cryptography, key management is everything underneath it. This is where most real-world failures happen — not in the maths, but in the handling.

The simplest mistake is putting a secret directly in source code. It feels harmless. It is convenient. It works. But code is copied, shared, backed up, committed to repositories, and sometimes made public by accident. Once a secret enters that system, you no longer fully control who can see it.
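A minimal alternative to hardcoding is to read the key from the environment at startup and refuse to run without it. `APP_ENCRYPTION_KEY` and `MissingSecretError` are hypothetical names; in production the value would usually be injected by a secrets manager or deployment platform rather than set by hand.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is not configured (hypothetical name)."""


# Anti-pattern: KEY = b"hardcoded-secret"  (ends up in every clone, fork, and backup)


def load_key(var_name: str = "APP_ENCRYPTION_KEY") -> bytes:
    """Read a hex-encoded 256-bit key from the environment instead of source code."""
    value = os.environ.get(var_name)
    if not value:
        # Fail loudly rather than falling back to a default baked into the code.
        raise MissingSecretError(f"{var_name} is not set")
    key = bytes.fromhex(value)
    if len(key) != 32:
        raise MissingSecretError(f"{var_name} must be 32 bytes (64 hex characters)")
    return key
```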

Even when keys are not hardcoded, they are often stored next to the data they protect. An encrypted database with its key sitting in a config file on the same server is not meaningfully secure. An attacker who gets into the server gets both at once — it is like locking a safe and taping the combination to the door.

There are subtler failures too.

Randomness is easy to take for granted. Standard library functions like Math.random() in JavaScript or rand() in C produce values that look random enough for testing or generating game levels. But they are predictable, and predictability is exactly what cryptographic systems cannot tolerate. Keys, tokens, and nonces generated with non-cryptographic randomness can often be reconstructed by an attacker who observes enough output. Always use cryptographically secure random number generators — crypto.randomBytes() in Node.js, secrets.token_bytes() in Python, crypto/rand in Go.
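In Python the distinction is between the `random` module, which is built for simulations, and the `secrets` module, which draws from the operating system's cryptographically secure generator. A short sketch:

```python
import random
import secrets

# NOT for security: `random` uses the Mersenne Twister, whose internal state can be
# reconstructed from enough observed outputs, making future values predictable.
simulation_value = random.getrandbits(128)

# For keys, tokens, and nonces: `secrets` uses the OS's secure random source.
key = secrets.token_bytes(32)              # 32 random bytes, e.g. an AES-256 key
reset_token = secrets.token_urlsafe(32)    # 32 random bytes as URL-safe Base64 text
api_token = secrets.token_hex(16)          # 16 random bytes as 32 hex characters

print(len(key), len(reset_token), len(api_token))
```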

Then there is time.

Keys that are never rotated accumulate exposure. They appear in logs, backups, old config files, and developer laptops. Each copy is a potential leak, and over months or years the chance that a key has been quietly compromised keeps growing, even if no breach is ever detected. Treat key rotation the way you treat dependency updates: something that should happen on a schedule, not only after something goes wrong.
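Rotation is much easier if every ciphertext records which key produced it. The sketch below is a hypothetical `Keyring` class built on AES-GCM from the `cryptography` package: the newest key encrypts, older keys can still decrypt, and data is re-encrypted onto the current key before an old key is retired. Real deployments usually delegate this to a key management service; the 4-byte key-ID header is an assumption made for illustration.

```python
import os
from typing import Dict

from cryptography.hazmat.primitives.ciphers.aead import AESGCM


class Keyring:
    """Hypothetical keyring: every stored key can decrypt, only the newest encrypts."""

    def __init__(self) -> None:
        self._keys: Dict[int, bytes] = {}
        self.current_id = 0  # no key yet; call rotate() before encrypting

    def rotate(self) -> int:
        """Generate a new key and make it the one used for new encryptions."""
        self.current_id += 1
        self._keys[self.current_id] = AESGCM.generate_key(bit_length=256)
        return self.current_id

    def retire(self, key_id: int) -> None:
        """Delete an old key once nothing encrypted under it remains."""
        del self._keys[key_id]

    def encrypt(self, plaintext: bytes) -> bytes:
        header = self.current_id.to_bytes(4, "big")  # records which key was used
        nonce = os.urandom(12)
        # The header is authenticated as associated data, so it cannot be swapped undetected.
        ciphertext = AESGCM(self._keys[self.current_id]).encrypt(nonce, plaintext, header)
        return header + nonce + ciphertext

    def decrypt(self, blob: bytes) -> bytes:
        header, nonce, ciphertext = blob[:4], blob[4:16], blob[16:]
        key = self._keys[int.from_bytes(header, "big")]  # KeyError if the key was retired
        return AESGCM(key).decrypt(nonce, ciphertext, header)

    def reencrypt(self, blob: bytes) -> bytes:
        """Move an existing ciphertext onto the current key."""
        return self.encrypt(self.decrypt(blob))


ring = Keyring()
ring.rotate()
old_blob = ring.encrypt(b"customer record")
ring.rotate()                                        # scheduled rotation: key 2 is current
assert ring.decrypt(old_blob) == b"customer record"  # old data is still readable
new_blob = ring.reencrypt(old_blob)                  # migrate the data to key 2
ring.retire(1)                                       # key 1 can now be destroyed
assert ring.decrypt(new_blob) == b"customer record"
```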

One mistake deserves particular emphasis because of how catastrophic it can be: reusing nonces or initialisation vectors. In modes like AES-GCM, a nonce is a number used once per encryption operation with a given key. Reusing it even once under the same key does not merely weaken the scheme; it breaks it. Both messages are encrypted with the same keystream, which exposes the relationship between the two plaintexts, and an attacker can recover the internal authentication key and forge messages that will be accepted as genuine. Generate a fresh nonce for every encryption, for example 12 bytes from a cryptographically secure random generator, and never reuse one under the same key.
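The keystream half of this failure takes only a few lines to see. AES-GCM encrypts with a counter-mode keystream derived from the key and the nonce, so two messages sealed under the same pair share a keystream. This sketch assumes the `cryptography` package and reuses a nonce on purpose; recovering the authentication key is a further step it does not show.

```python
import os

from cryptography.hazmat.primitives.ciphers.aead import AESGCM

aesgcm = AESGCM(AESGCM.generate_key(bit_length=256))
p1 = b"attack at dawn!!"
p2 = b"retreat at nine!"


def xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


# WRONG: one nonce, two messages, same key.
nonce = os.urandom(12)
c1 = aesgcm.encrypt(nonce, p1, None)[:-16]  # drop the 16-byte tag, keep the ciphertext
c2 = aesgcm.encrypt(nonce, p2, None)[:-16]

# The shared keystream cancels out: c1 XOR c2 == p1 XOR p2, no key required.
assert xor(c1, c2) == xor(p1, p2)

# An attacker who knows or guesses p1 recovers p2 outright.
recovered = xor(xor(c1, c2), p1)
print(recovered)  # b'retreat at nine!'

# RIGHT: a fresh random nonce for every message under this key.
n1, n2 = os.urandom(12), os.urandom(12)
c1_ok = aesgcm.encrypt(n1, p1, None)
c2_ok = aesgcm.encrypt(n2, p2, None)
```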

When Good Tools Are Used the Wrong Way

Even with the right algorithms and careful key handling, systems still fail because of how cryptographic libraries are used.

The clearest example is implementing custom cryptography. It rarely starts with ambition. It begins with small adjustments: combining standard primitives slightly differently, tweaking behaviour for a specific case, building something "just for this situation." Cryptography is not forgiving of this. The difference between a secure implementation and a broken one is often invisible without deep analysis, and homegrown constructions that receive serious public scrutiny are routinely broken.

The standard advice exists for a reason: do not design your own cryptography.

More common than custom algorithms is disabling security checks. Certificate verification in TLS is a perfect example. During development it is often turned off to avoid dealing with certificate configuration. The system starts working. The flag stays. When the application reaches production, it now accepts any certificate, including one presented by an attacker sitting between your application and the server it is talking to.
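In Python's standard library, `ssl.create_default_context()` returns a context with certificate and hostname verification switched on. The sketch shows those defaults, the safer way to handle a private development CA (the `dev-ca.pem` path is hypothetical), and, commented out, the two lines that quietly disable verification. In the third-party `requests` library the equivalent mistake is passing `verify=False`.

```python
import ssl

# Secure default: verifies the certificate chain against the system trust store
# and checks that the certificate matches the hostname being contacted.
context = ssl.create_default_context()
assert context.verify_mode == ssl.CERT_REQUIRED
assert context.check_hostname

# Development server with a private CA: trust that CA explicitly
# instead of switching verification off (hypothetical path).
# context.load_verify_locations(cafile="dev-ca.pem")

# Anti-pattern that tends to survive into production; do not ship this:
# context.check_hostname = False
# context.verify_mode = ssl.CERT_NONE

# The context is then passed to the client, for example:
# urllib.request.urlopen("https://example.com", context=context)
```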

From the outside, everything still looks secure. The connection is encrypted. Data flows. But the trust that TLS is supposed to establish has been removed. The encryption is real; the security is not.

There are other edges worth knowing. Standard string comparisons in most languages stop as soon as they find a mismatch, which means comparisons of secrets like HMACs or tokens can leak timing information. An attacker with precise measurements can sometimes use that signal to guess secrets one character at a time. Use constant-time comparison functions instead — hmac.compare_digest() in Python, hmac.Equal() in Go.
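Here is a sketch of verifying an HMAC tag in Python with `hmac.compare_digest`. The `sign`/`verify` helpers are illustrative, and the randomly generated key stands in for one loaded from a secret store.

```python
import hashlib
import hmac
import secrets

# Stand-in for a key loaded from a secret store.
SECRET_KEY = secrets.token_bytes(32)


def sign(message: bytes) -> str:
    """Return a hex HMAC-SHA256 tag for the message."""
    return hmac.new(SECRET_KEY, message, hashlib.sha256).hexdigest()


def verify(message: bytes, received_tag: str) -> bool:
    """Check a received tag without short-circuiting on the first mismatch."""
    expected = sign(message)
    # Avoid `expected == received_tag`: it can stop at the first differing character.
    # Comparing bytes also sidesteps the TypeError compare_digest raises for non-ASCII str.
    return hmac.compare_digest(expected.encode("ascii"), received_tag.encode("utf-8"))


tag = sign(b"user=alice")
print(verify(b"user=alice", tag))    # True
print(verify(b"user=mallory", tag))  # False
```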

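RSA without proper padding is another quiet failure. Textbook RSA, with no padding at all, is deterministic and malleable: an attacker can confirm a guessed plaintext simply by encrypting the guess with the public key and comparing. Even PKCS#1 v1.5 encryption padding has well-documented weaknesses, most famously Bleichenbacher's padding-oracle attack. Use OAEP for RSA encryption and PSS for RSA signatures.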
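With the third-party `cryptography` package, OAEP and PSS are explicit padding objects passed to `encrypt` and `sign`. The sketch below generates a 3072-bit key for illustration; the message is a placeholder, and in practice RSA encryption is normally reserved for small values such as a symmetric key.

```python
from cryptography.exceptions import InvalidSignature
from cryptography.hazmat.primitives import hashes
from cryptography.hazmat.primitives.asymmetric import padding, rsa

private_key = rsa.generate_private_key(public_exponent=65537, key_size=3072)
public_key = private_key.public_key()

oaep = padding.OAEP(
    mgf=padding.MGF1(algorithm=hashes.SHA256()),
    algorithm=hashes.SHA256(),
    label=None,
)
pss = padding.PSS(
    mgf=padding.MGF1(hashes.SHA256()),
    salt_length=padding.PSS.MAX_LENGTH,
)

# Encryption with OAEP is randomised: the same message encrypts differently each time.
message = b"short secret, e.g. a symmetric key"
ct1 = public_key.encrypt(message, oaep)
ct2 = public_key.encrypt(message, oaep)
assert ct1 != ct2
assert private_key.decrypt(ct1, oaep) == message

# Signing with PSS; verify() returns None on success and raises on failure.
signature = private_key.sign(message, pss, hashes.SHA256())
public_key.verify(signature, message, pss, hashes.SHA256())
try:
    public_key.verify(signature, b"tampered", pss, hashes.SHA256())
except InvalidSignature:
    print("signature rejected")
```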

Each of these mistakes is small. Each is easy to miss. And each creates an opening that would not exist if the library defaults had simply been left alone.

Passwords: Familiar, but Frequently Mishandled

Passwords feel simple, which is probably why they are so often handled incorrectly.

Storing them in plain text is the obvious failure, and it still happens. More commonly, they are hashed with a fast algorithm like SHA-256. That sounds reasonable until you consider that attackers can compute billions of SHA-256 hashes per second on consumer hardware. Much of a database of "hashed" passwords can then be cracked in hours.

The solution is not just hashing. It is slow hashing: deliberately expensive functions like bcrypt or Argon2 that make each individual check take a small but meaningful amount of time. For a real user logging in, that cost is imperceptible. For an attacker trying billions of guesses offline, it multiplies the cost of every guess, turning an overnight job into one that is impractical for all but the weakest passwords.

Beyond the choice of algorithm, two practices are essential.

The first is salting: adding a unique random value to each password before hashing. Without it, two users with the same password produce the same hash, which lets an attacker recognise common passwords at a glance and use precomputed lookup tables. With a salt, every hash is unique even if the underlying passwords are identical.
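Python's standard library includes PBKDF2 through `hashlib.pbkdf2_hmac`; bcrypt and Argon2 need third-party packages. The sketch below combines a slow hash with a per-password salt. The iteration count reflects OWASP's guidance for PBKDF2-HMAC-SHA256 at the time of writing (600,000) and should be checked against current advice, and the `$`-separated storage format is a hypothetical layout that keeps the algorithm, cost, and salt next to the hash so they can be upgraded later.

```python
import hashlib
import hmac
import secrets

ITERATIONS = 600_000  # check current guidance; raise as hardware gets faster
SALT_BYTES = 16


def hash_password(password: str) -> str:
    salt = secrets.token_bytes(SALT_BYTES)  # unique random salt per password
    dk = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    # Store algorithm, cost, salt, and hash together (hypothetical format).
    return f"pbkdf2_sha256${ITERATIONS}${salt.hex()}${dk.hex()}"


def verify_password(password: str, stored: str) -> bool:
    algorithm, iterations, salt_hex, hash_hex = stored.split("$")
    if algorithm != "pbkdf2_sha256":
        return False
    dk = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), bytes.fromhex(salt_hex), int(iterations)
    )
    # Constant-time comparison of the derived key with the stored one.
    return hmac.compare_digest(dk, bytes.fromhex(hash_hex))


a = hash_password("correct horse")
b = hash_password("correct horse")
assert a != b                         # same password, different salts, different hashes
assert verify_password("correct horse", a)
assert not verify_password("wrong guess", a)
```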

The second is peppering: adding a secret value that is stored outside the database entirely. Even if an attacker steals the database, they cannot crack the hashes without also knowing the pepper. It adds meaningful protection against database-only breaches, at the cost of some operational complexity. Modern bcrypt and Argon2 libraries generate and store a salt automatically. Treat salting as non-negotiable and peppering as a worthwhile addition for higher-stakes applications.
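One common way to apply a pepper is to key an HMAC with it before the slow hash, so the stored value cannot be tested without the pepper. This is a sketch under assumptions: the `PASSWORD_PEPPER_HEX` variable is hypothetical, PBKDF2 stands in for whatever slow function you use, and encrypting the stored hash with a separate key is an equally valid alternative.

```python
import hashlib
import hmac
import os
import secrets

ITERATIONS = 600_000


def load_pepper() -> bytes:
    # Hypothetical: the pepper lives outside the database, e.g. injected from a
    # secrets manager as an environment variable, or held in an HSM/KMS.
    return bytes.fromhex(os.environ["PASSWORD_PEPPER_HEX"])


def peppered_hash(password: str, salt: bytes, pepper: bytes) -> bytes:
    # Step 1: keyed HMAC with the pepper; without the pepper, guesses cannot be tested.
    peppered = hmac.new(pepper, password.encode("utf-8"), hashlib.sha256).digest()
    # Step 2: the usual slow, salted hash on top.
    return hashlib.pbkdf2_hmac("sha256", peppered, salt, ITERATIONS)


os.environ.setdefault("PASSWORD_PEPPER_HEX", secrets.token_hex(32))  # demo only
pepper = load_pepper()
salt = secrets.token_bytes(16)
stored = peppered_hash("correct horse", salt, pepper)

# Verification recomputes with the same salt and pepper and compares in constant time.
assert hmac.compare_digest(stored, peppered_hash("correct horse", salt, pepper))
```

Changing the pepper invalidates every stored hash, so plan for rotation (for example, by recording a pepper version alongside each hash) before you need it.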

The Operational Reality

Even a correctly implemented cryptographic system can fail in practice — not because the algorithms are wrong, but because of how the system is run.

Secrets appear in logs because someone added debug logging during a late-night incident and never went back to remove it. Credentials get committed to version control because it was the fastest way to get something running. Production keys end up in development environments because it simplifies setup.

Each decision makes sense in the moment. Together, they create a system where secrets are widely distributed, loosely controlled, and difficult to revoke.

When a breach happens — and breaches do happen — failing to rotate secrets means the attacker retains access long after the original vulnerability has been patched. Rotating secrets is not just good hygiene. It is what actually closes the door.

Some practical habits go a long way. Use .gitignore to exclude secret files, and add automated scanning to catch anything that slips through. Never log request bodies, tokens, or API keys — strip sensitive fields before they reach your logging pipeline. Use separate credentials for development, staging, and production. When something goes wrong, rotate everything that could have been exposed, not just the obvious pieces.
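Stripping sensitive fields is best done where the data is logged, but a logging filter makes a useful safety net. The sketch below uses Python's standard `logging` module; the field names and regular expression are assumptions, and pattern-based redaction is best-effort, so it does not replace keeping secrets out of log calls in the first place.

```python
import logging
import re

# Assumed list of sensitive field names; adapt to your own payloads.
SENSITIVE_KEYS = ("password", "token", "api_key", "secret")
# Matches key=value or key: value pairs such as "password=hunter2" or "access_token: abc".
_SENSITIVE = re.compile(
    r"(?i)(" + "|".join(SENSITIVE_KEYS) + r")(\s*[=:]\s*)([^\s,;&]+)"
)


class RedactSecretsFilter(logging.Filter):
    """Masks values of sensitive-looking key/value pairs before records are emitted."""

    def filter(self, record: logging.LogRecord) -> bool:
        message = record.getMessage()  # merge %-style args into the message first
        record.msg = _SENSITIVE.sub(r"\1\2[REDACTED]", message)
        record.args = ()  # args are already merged into msg
        return True  # keep the record, now sanitised


logger = logging.getLogger("app")
handler = logging.StreamHandler()
handler.addFilter(RedactSecretsFilter())
logger.addHandler(handler)

logger.warning("login failed for user=%s password=%s", "alice", "hunter2")
# stderr: login failed for user=alice password=[REDACTED]
```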

What Actually Matters

There is a single pattern running through all of these mistakes.

Cryptography fails when it is treated like ordinary code.

It is not ordinary code. The failure modes are invisible. The consequences are often irreversible. A bug in a sorting algorithm produces wrong output. A bug in a cryptographic implementation can expose every user's data, silently, for years before anyone notices.

The safest path is not to be clever. It is to be conservative.

Use algorithms that are widely accepted and well understood. Use libraries that handle complexity for you rather than building your own. Avoid modifying behaviour unless you fully understand the implications. Treat keys and secrets as the most sensitive data in your system — because they are.

You do not need to understand the mathematics behind AES or elliptic curves to use them correctly. You do not need a deep background in number theory to store passwords safely. But you do need to respect the boundaries these tools impose, and resist the temptation to work around them when they feel inconvenient.

The Standard Lock

You can build your own lock.

You can make it intricate, surprising, even elegant. It will reflect your ingenuity. It will be unlike anything a thief has seen before.

But it will not have been tested the way real locks are tested — with time, with pressure, with adversaries who do nothing but look for weaknesses.

The standard lock is unremarkable. It is not unique. It does not reflect your creativity.

It simply works.

Cryptography rewards that choice. Use the standard lock.

About N Sharma

Lead Architect at StackAndSystem

N Sharma is a technologist with over 28 years of experience in software engineering, system architecture, and technology consulting. He holds a Bachelor’s degree in Engineering, a DBF, and an MBA. His work focuses on research-driven technology education, explaining software architecture, system design, and development practices through structured tutorials designed to help engineers build reliable, scalable systems.

Disclaimer

This article is for educational purposes only. Assistance from AI-powered generative tools was taken to format and improve language flow. While we strive for accuracy, this content may contain errors or omissions and should be independently verified.